Sponsored By: Alts

This essay is brought to you by Alts, the best place for uncovering new and interesting alternative assets to invest in.

There are two types of AI threats. The first is fun to think about: In this threat, a super-potent digital intelligence escapes the intended bounds of its mandate and then wipes out humanity in a robot-overlord, glowing-red-eyed-Arnold-Schwarzenegger doomsday scenario. On second thought, it is not fun to think about per se, but it’s at least a more interesting thought exercise than the second threat.

The second threat is an AI that goes right up to the edge of Skynet but falls short of being an artificial general intelligence. Instead, AI becomes a new type of ultra-powerful software that has the potential to upend existing market dynamics. Said simply, the second threat is one where an ultra-small set of people make lots of money. The interesting thing about this scenario is contemplating who wins and who loses. Will today’s tech goliaths be able to leverage their gigantic balance sheets to extend their reign? Or will the future be seized by a new generation of little guys with hyper-intelligent slingshots? Which companies win the AI battle is a question that needs answering.

Before writing this piece, I found myself waiting for someone to publish a map of the AI value chain, but no one had done it to my satisfaction. This article is my attempt to make that map. Rather than follow the traditional market map, where I would list every company ad nauseam and attempt to impress you with sheer volume, I will instead discuss archetypes of organizations and then forecast where these archetypes will end up. Less comprehensive, more intellectually potent (hopefully).

The Rules Of Winning

To start, no one actually agrees on what AI even is! Search on Investopedia or Google and you’ll get definitions like “when a machine can mimic the intelligence of a human.” I would love this definition if I happened to love entirely useless sentences. These words are so shifty, so dependent on context, that even using the term AI feels futile. So much truth gets lost in translating the Google definition (replete with lofty hand-waving) into pragmatic business use cases. Part of the definitional difficulty comes from AI’s very nature.

During the last AI boom in 2016, Andrew Ng liked to say that “AI is the new electricity” because it is a fundamental layer of power that will transform every aspect of an industry. This makes AI resistant to an old-school value chain exercise in which you map out raw inputs, suppliers, manufacturers, and distributors. Because most of an AI product is a digital good, the boundaries between capabilities and firms are very blurry.

Every activity in the AI value chain serves the creation or deployment of an AI model. The AI model is the fancy math that helps a machine mimic what a human can do. My personal (working) definition of AI is a machine that can do something beyond rote commands: one that can translate general instructions and/or ambiguous inputs into specific outputs. Again, this definition is ugly and I’ll update it over time, but it is where I’ve landed as of publishing this article.

Note: This difficulty with definitions and descriptions is partially because the field is changing so quickly. Every week I see a new demo that challenges my assumptions about AI progress. In my typical coverage area of finance, everyone agrees on the fundamentals and deeply disagrees about the details. AI is a field where everyone disagrees on the fundamentals, the details, and just about everything in between.

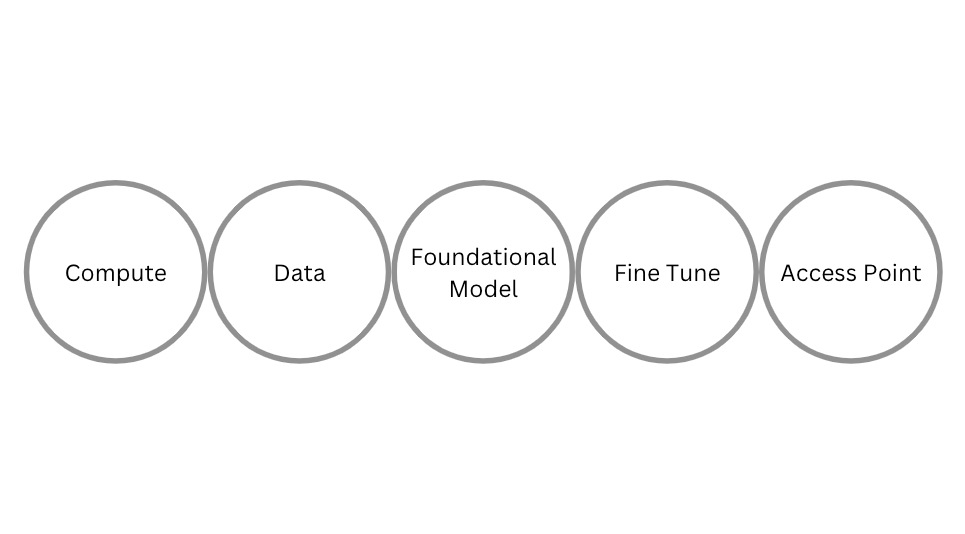

A company selling or utilizing AI will perform some of the following five activities. Some companies do all five, some do just one, but all of them will usually still be called an “AI company.” I’ll get to examples in a sec, but understanding the framing comes first.

- Compute: The chips or server infrastructure required to run AI models

- Data: A data set that a model is trained on

- Foundational Model: The compute and data are combined with some fancy math to produce a model with broadly applicable uses

- Fine Tune: If the big foundational model isn’t sufficient for a specific use case, it is then tuned for that scenario

- End User Access Point: The model is then deployed in some kind of application

It is tempting to think of this as a traditional value chain.

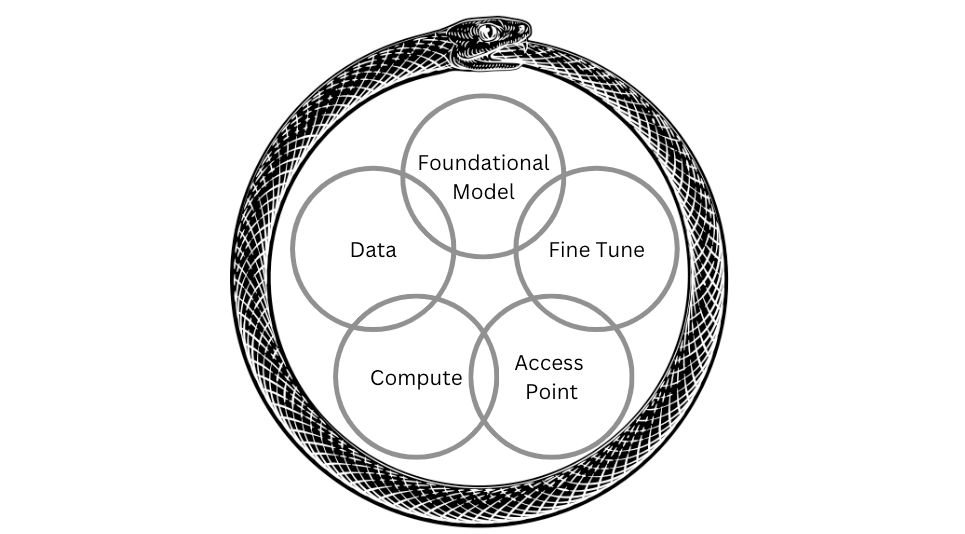

However, this isn’t quite right either. These activities overlap and intersect even more than they do in traditional software applications. Think again of the electricity example: AI is a power (in that it runs through an entire organization) and it is a product (in that it has its own ecosystem of support). Other software has this dual nature, but AI blurs it all together in a way that makes your brain melt. It bears an eerie similarity to the early days of actual power, when Edison first introduced commercial lighting and simultaneously ran his own power plant. Was the product the electricity or the lighting? The simple answer: it was both at the same time. Because AI is mostly a digital good, it is even more intertwined than that.
