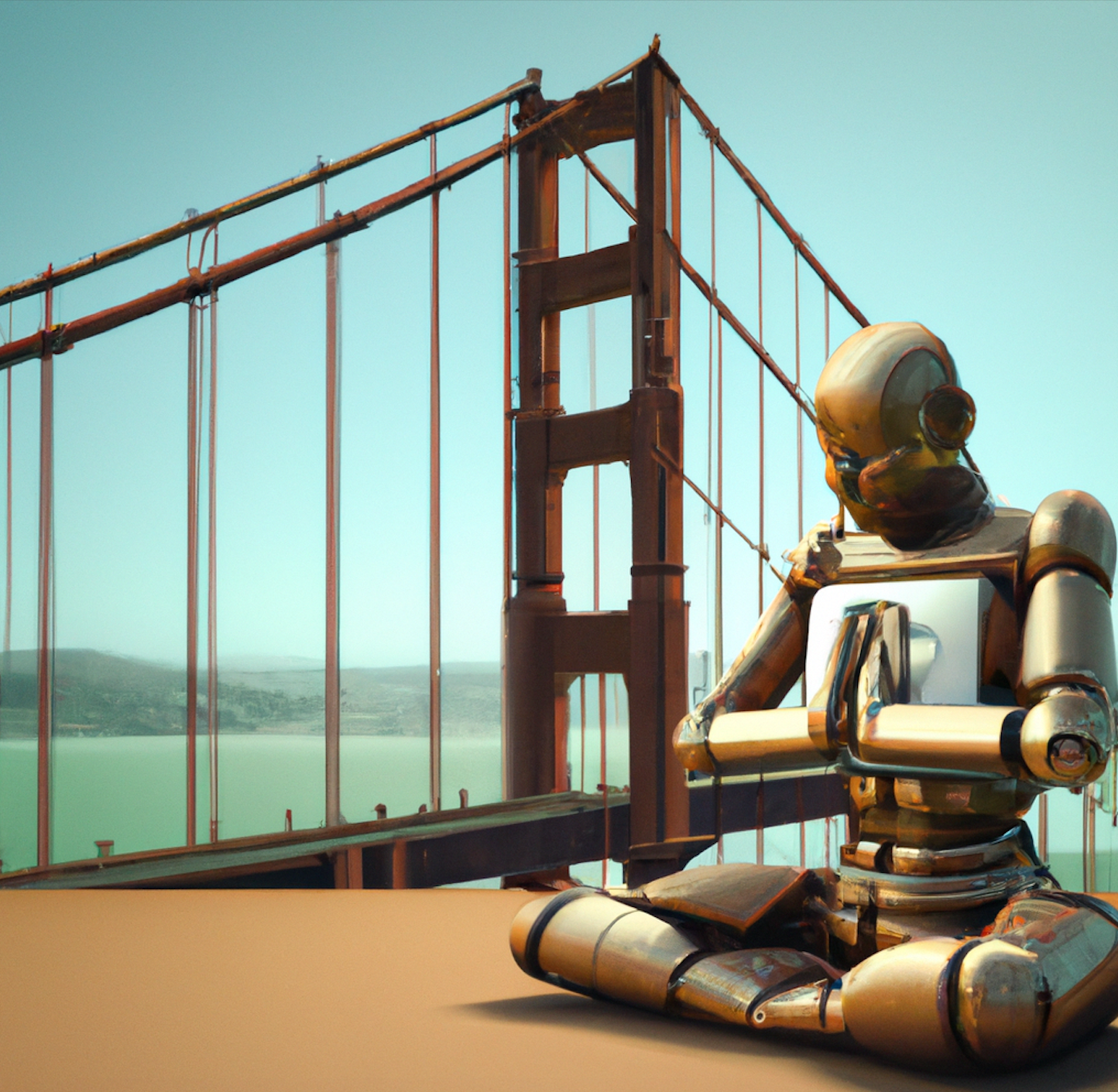

Meditations on AI Mecca

A dispatch from a hacker conference in San Francisco

December 21, 2022 Updated January 25, 2026

Flying into San Francisco is an exercise in remembering. There are still the same ads for software you’ve never heard of. There are still green juice bars selling shot glasses of bitter slime for $9. There are still aggressive amounts of athleisure. There are still the 10% too loud overheard conference calls, where entrepreneurs pitch their vision of the future. There are still the drab office buildings hiding the technology magic happening within. There are still venture capitalists roaming around, their fangs dripping with management fees and the occasional third spouse who used to be their personal assistant. There is still that unique combination of techno-optimism and pragmatic capitalism that says technology will bring about utopia while simultaneously producing judicious cash flows.

San Francisco still is, as it has been for the last 20 years, a potent cocktail of hope and hedonism, hackers and haters, visionaries and vampires. It is a place where the future is made.

Now, the Bay Area is roiled with AI fervor. The long-awaited arrival of our robot overlords has potentially come with the explosive invention of AI tools that can easily generate text and images. Previous tech waves have been financially lucrative but did less to advance humanity than hoped. Crypto has been a bust, B2B software was and is and always will be boring (while useful), but AI could be the technological revolution that has been promised to us since the smartphone era.

AI is so seductive because using these recent advances feels like magic. Five years ago, it would have taken a team of engineers a few years and millions of dollars to build a fairly primitive AI product. Now, with advancements made by firms like OpenAI, anyone with a middling understanding of code can use cutting-edge AI. Thought leaders have been disseminating this AI opportunity narrative through newsletter and blog post I.V. drip lines, and all of their pontifications argue the same thing: AI is for real this time.

These writers—myself included—have helped drive the excitement of engineers to a fever pitch. Every tinkerer I know is building an AI product right now, and even the roughest of demos are breathtaking. I gasp as I scroll Twitter, overwhelmed by new products I didn’t even know were possible, all being built as side projects. Here at Every, we’ve utilized this new tech to build a Google Docs competitor and a chatbot that helps you find information from one of our favorite podcasts. We’re just a small media publication able to build products with capabilities that would’ve been impossible six months ago. When you contemplate the entirety of the technology industry doing this sort of hacking, the possibilities become mind-blowing.

To observe this phenomenon, I returned to San Francisco, my home of many years, to attend an AI hackathon organized by HF0, a residency program for top technical founders. I wanted to watch AI techno-awe in person, and (full disclosure) they gave me free meals and a place to crash.

What is AI?

Over the week, I asked more than 30 people one question: what is AI?

Surprisingly, no two people agreed on an answer. I compiled a list of some of the definitions that I heard:

- A nice man wearing three items of clothing with three different startup logos on them told me that AI is math that dumb people can’t understand.

- A person with neon-bright blonde hair told me that AI is math no one understands.

- A former Google engineer told me AI is software that makes you “shit your pants in fear.”

- A man with a slick goatee told me that AI was a god we think we can tame (I rather liked this one).

- A woman with a laptop covered in a variety of stickers told me that AI was a product that required more than half of your staff to be PhDs to build.

This motley assortment of answers is fascinating because for many years we had a fairly standard benchmark for what AI was: the Turing test, which judges a machine intelligent if its conversation cannot be told apart from a human's. It is arguable that we are nearly at this point or have already passed it. Turing himself saw intelligence as partly in the eye of the beholder: "The extent to which we regard something as behaving in an intelligent manner is determined as much by our own state of mind as by properties of the object under consideration. If we are able to explain and predict its behavior we have little temptation to imagine intelligence. With the same object, therefore, it is possible that one man would consider it intelligent and another would not; the second man would have found out the rules of its behavior."
