CHATBOT COURSE EARLY BIRD PRICING ENDING SOON!

We are thrilled to announce we are relaunching our How to Build a Chatbot course! If you are interested in learning how to build with AI, join our upcoming Fall cohort taught by our very own Dan Shipper.

In the course you'll learn:

- How to aggregate sources of data for your chatbot

- How to manage your vector databases

- How to build a UI and bring your chatbot into production

- How to use LangChain and LlamaIndex to build a chatbot that can access private data and use tools

- and so much more!

Learn how to build your own chatbot in less than 30 days. The course runs once a week for five weeks, starting September 5th, and early-bird pricing is available for $1,300 along with an Every membership. Last time we ran the course it sold out quickly, so if you are interested, grab your seat now while early-bird pricing still applies.

Early-bird pricing ends on July 31st; after that, the price will be $2,000.

Over the last 6 months I’ve been obsessively tinkering with, writing about, and investing in AI. It’s been a ride. I’ve talked to some of the most interesting thinkers in the space, I’ve stayed up late at night hacking zillions of little experiments, I’ve panicked about AI doom, I’ve imagined never organizing anything again, and I’ve basked in the warm glow of curiosity and delight I get from using these models.

Working in AI during this period has felt like having a SpaceX rocket strapped to my butt. I think everyone feels this way. You go very fast, but you constantly feel behind. Every once in a while your brain explodes with the possibilities in front of you. It’s easy to get carried away with all-caps tweets about how THE WORLD HAS CHANGED.

Today, I’d like to write something a little more nuanced and reflective. Even if you’ve got a rocket strapped to your butt, it’s important to look down every once in a while and take stock of where you are. As such, here’s a short list of things I believe about AI that are shaping how I’m approaching my work at Every and beyond.

Knowledge orchestration is the most important bottleneck for AI applications

There are two important components to intelligence: reasoning and knowledge. GPT-4 is quite good at reasoning, but its knowledge of the world is limited. As such, its performance is bottlenecked by our ability to give it the right knowledge at the right time for it to reason with.
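In practice, "giving it the right knowledge" usually means placing relevant passages in the prompt ahead of the question, so the model reasons over them rather than over whatever it happens to remember. The sketch below assumes the passages have already been retrieved; `build_prompt` and its template wording are hypothetical, not a format any model requires.

```python
def build_prompt(question: str, passages: list[str]) -> str:
    """Place retrieved knowledge ahead of the question so the model can reason over it.

    Hypothetical helper: the template wording is an illustrative choice,
    not a format required by GPT-4 or any other model.
    """
    # Number each passage so the model (and a human reviewer) can refer back to it.
    context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, start=1))
    return (
        "Answer the question using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


if __name__ == "__main__":
    print(build_prompt(
        "How large is GPT-4's largest context window?",
        ["GPT-4 is offered with a 32,000-token context window."],
    ))
```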

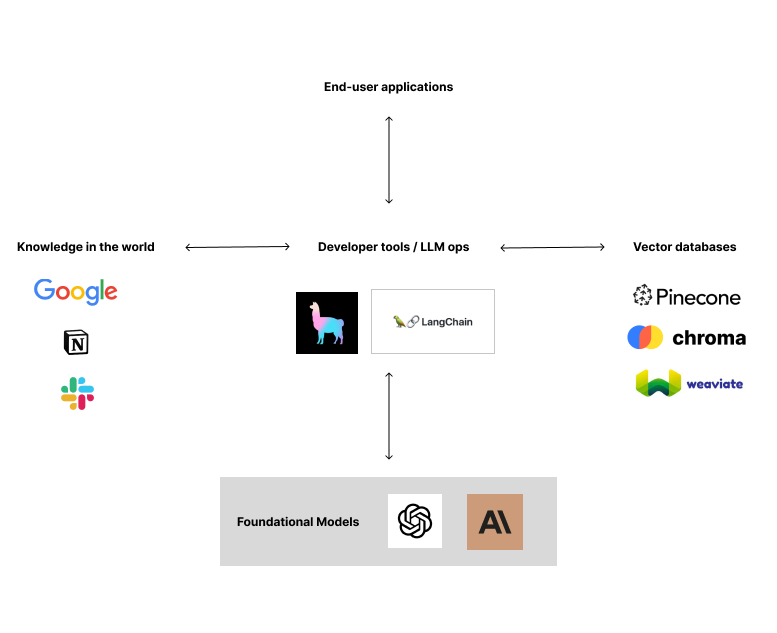

This problem, which I’m calling knowledge orchestration, is the biggest unsolved problem for builders in AI outside of progress on foundational models. It touches how you store, index, and retrieve the knowledge you need to perform useful large language model tasks. There are many people trying to improve this at different layers of the stack:

OpenAI and other players are working to build this at the foundational model layer. They’re building bigger context windows: the more knowledge you can fit into your prompt, the better. GPT-4’s 32,000-token context window is 8x larger than those of previous models, so improvement is happening quickly.
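Because the prompt has a fixed size, an application still has to decide which retrieved chunks make the cut. Here is a minimal sketch of greedy packing, assuming chunks arrive already ranked by relevance and approximating tokens with whitespace-separated words (a real tokenizer counts differently). The function name and the 500-token reserve are illustrative choices, not taken from any library.

```python
def pack_context(chunks: list[str], budget_tokens: int, reserve_for_answer: int = 500) -> list[str]:
    """Greedily keep the highest-ranked chunks that fit in the context window.

    Assumes `chunks` is sorted from most to least relevant. Token counts are
    approximated by word counts; a chunk that doesn't fit is skipped so that
    smaller, lower-ranked chunks can still use the remaining space.
    """
    remaining = budget_tokens - reserve_for_answer  # the reply shares the same window
    selected = []
    for chunk in chunks:
        cost = len(chunk.split())  # crude stand-in for a real token count
        if cost <= remaining:
            selected.append(chunk)
            remaining -= cost
    return selected
```

With 300-word chunks and the default reserve, a 4,000-token budget fits 11 chunks while a 32,000-token budget fits 105, which shows how directly window size limits the knowledge a model can see at once.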

One layer up from that, LlamaIndex and LangChain are building at the developer tool and infrastructure layer. They’re making it easy for developers to chunk, store, and retrieve knowledge from many kinds of databases with only a few lines of code.
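Chunking is the first of those steps. LlamaIndex and LangChain ship their own text splitters; the sketch below is not their API, just a plain-Python illustration of the common fixed-size-with-overlap approach, with sizes measured in words as a stand-in for tokens. `chunk_words` is a hypothetical name.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into overlapping windows of `chunk_size` words.

    Overlap means text near a boundary appears in both neighboring chunks,
    so a passage cut by the boundary is less likely to be lost at retrieval time.
    """
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # this window already reached the end of the text
    return chunks
```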

Vector database providers are also working on this problem. Pinecone, Weaviate, and Chroma are all battling for supremacy here—with Pinecone in the lead.
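At their core, vector databases store an embedding for each chunk and return the chunks whose embeddings are closest to the query's. The sketch below shows that idea with brute-force cosine similarity. It is not the Pinecone, Weaviate, or Chroma API, and the bag-of-words `embed` function is a toy stand-in for a learned embedding model; production systems typically use approximate nearest-neighbor indexes instead of scanning every item.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class TinyVectorStore:
    """Hypothetical in-memory store that scans every item on each query."""

    def __init__(self) -> None:
        self.items: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        # Store the original text alongside its vector so results are readable.
        self.items.append((text, embed(text)))

    def query(self, text: str, k: int = 3) -> list[str]:
        q = embed(text)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [t for t, _ in ranked[:k]]
```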

Finally, various all-in-one solutions like Metal and Baseplate are bundling all of these layers of the stack together. They’ve got slick web interfaces that make data observable and let developers get started quickly.

I suspect all of these players will start to leak out of their current layer of the stack and try to grow into other areas. The winning solution will figure out how to integrate the layers so that builders can get started easily and iterate faster.

Knowledge orchestration is the process around knowledge. But what about the knowledge itself? What kind of knowledge is most valuable?

Here’s one type that excites me: end-to-end interaction data about the lifecycle of any process. A process could be something that a company does itself (like creating a D2C product) or it could be something that a company enables its customers to do (like editing photos).

End-to-end interaction means you can see the process at the beginning, you can see all of the iterations and editing steps in the middle, and then you can see and measure the results that this process generates at the end.

Being able to see the iteration steps in the middle and the final results at the end gives you key data about what humans prefer, and also about how they get to their preferred solutions. The more of this you can see, the better you’ll be able to steer models with techniques like reinforcement learning from human feedback (RLHF), fine-tuning, and prompting to recreate these processes automatically and, crucially, to make them better over time.
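To make this concrete, here is a minimal sketch of turning end-to-end interaction logs into training data, assuming a hypothetical `Session` record that holds the user's request, every draft in order, and a measured outcome. The pairwise `chosen`/`rejected` layout mirrors the comparison data commonly used to train RLHF reward models, but the field names, the outcome threshold, and the assumption that the final draft is the preferred one are all illustrative.

```python
from dataclasses import dataclass


@dataclass
class Session:
    """Hypothetical record of one end-to-end run of a process."""
    request: str        # what the user set out to do
    drafts: list[str]   # intermediate versions in order, ending with the one kept
    outcome: float      # result measured at the end (arbitrary scale)


def preference_pairs(session: Session) -> list[dict]:
    """Treat the final draft as preferred over every earlier draft.

    The user kept editing until reaching the final version, so each
    (final, earlier) pair is a weak signal of what they prefer.
    """
    if not session.drafts:
        return []
    *earlier, final = session.drafts
    return [{"prompt": session.request, "chosen": final, "rejected": d} for d in earlier]


def fine_tuning_examples(sessions: list[Session], min_outcome: float) -> list[dict]:
    """Keep only final versions whose measured outcome cleared a threshold."""
    return [
        {"prompt": s.request, "completion": s.drafts[-1]}
        for s in sessions
        if s.drafts and s.outcome >= min_outcome
    ]
```

Treating "the version the user stopped editing at" as preferred is a heuristic; seeing the measured outcome at the end is what lets you check whether it actually holds.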

This dynamic leads to my next thesis: startups that are integrated over a process will be dominant.

Startups that are horizontally integrated over a process will be dominant

The more of a process you can see and store in your database, the more you can improve it. Therefore, there’s a significant incentive for startups to horizontally integrate and bundle to achieve better performance in an AI-first world. This means replacing things that their customers are paying other companies for with their own solution—so they can see more of the process and integrate it together.

I first came to this idea through a conversation with Replit founder Amjad Masad. (Interview coming soon!) Replit is a prime example of a company integrated over a process its customers perform. It builds a developer platform that lets customers write and deploy apps entirely from a browser window. So in Replit’s case, the process it integrates over is turning ideas into software.

I’ve seen similar sentiment about the importance of end-to-end process integration in other places as well. At a Sequoia event I attended a few weeks ago, Sam Altman mentioned that one of the main reasons OpenAI built ChatGPT was so that they could get human feedback from end users back into their models. In other words, they started out API-only but realized that the best way to improve performance was to integrate forward over more layers of the value chain so that they could get direct access to their customers’ data.

Backward integration through earlier parts of the value chain can also be useful. The more access you have to the editing process that led to the final output of a process, the better you’ll be able to learn to recreate or improve that process.

What does this imply?

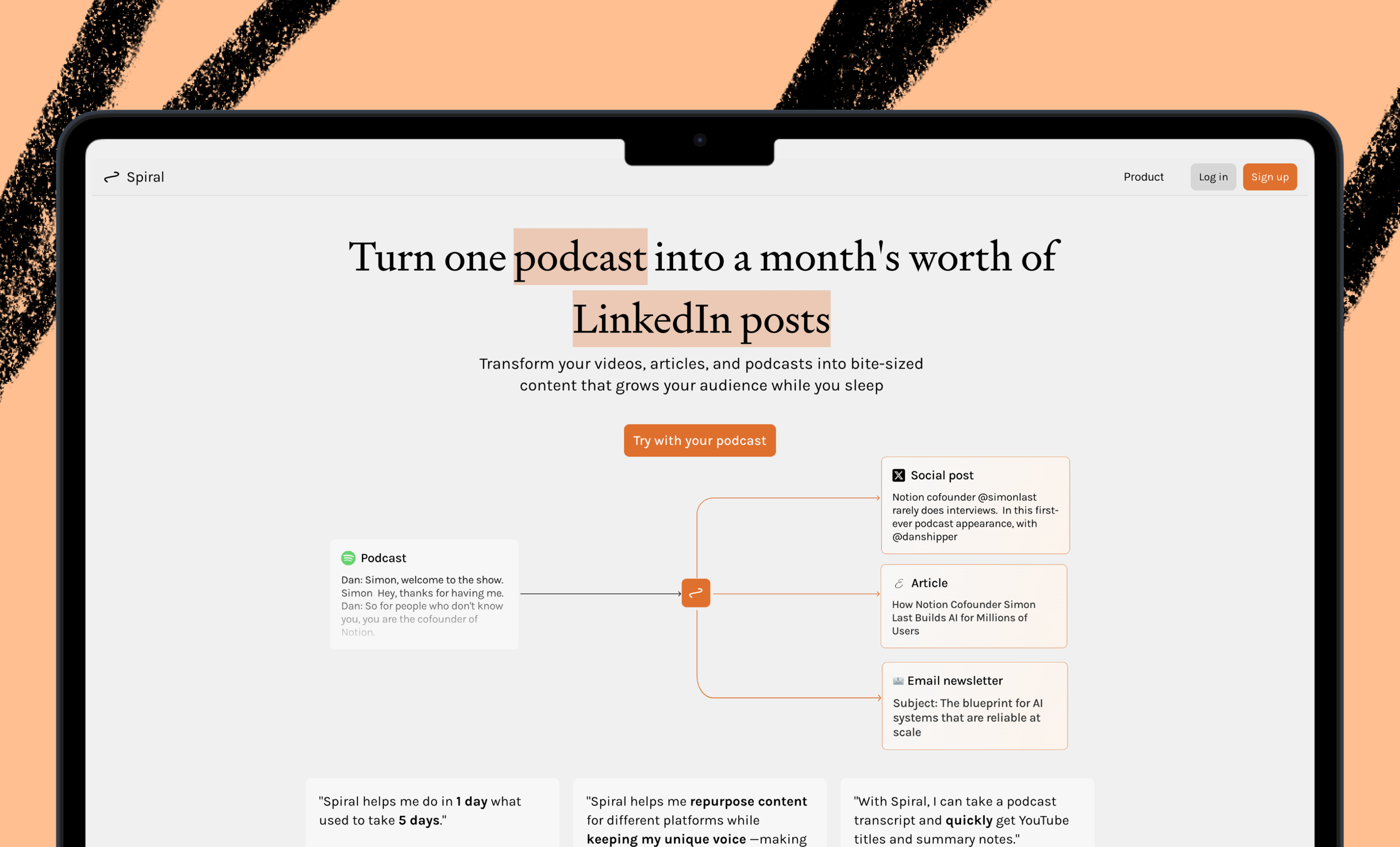

Startups should integrate over more of the process their customers perform

Today, Midjourney has no idea what you do with an image after it’s generated. You could imagine a version of Midjourney that generates an initial image, includes a full suite of Photoshop-like image editing capabilities, and then allows you to post the resulting image to social media and measure its performance. Startups like Playground are already doing this, and I expect this design pattern to be more prevalent over time. Adobe has done the same with their Firefly models.

Companies that already integrate over an entire process will start to automate with good results

Today, browsers see many of the end-to-end processes their users perform. If you’re a salesperson who researches prospects on LinkedIn, takes meetings in Google Meet, takes notes in Roam, and then records the resulting sale in Salesforce, your browser sees all of it. This gives browsers (and operating systems) the unique ability to automate and improve these kinds of processes for you. They’ll start by reducing manual data entry, then move into things like suggesting new leads so you don’t even need to browse through LinkedIn.

You can see this starting to happen already with the work that Adept is doing to automate processes on your computer. The potential value of this type of interaction is also behind the popularity of agent paradigms like AutoGPT.

This world is good for incumbents, but better for startups

You could argue that companies that are in the best position to collect this kind of data are incumbents. After all, Google has everything from your emails, to your documents, to your code, to your web analytics. But there will be significant privacy concerns for incumbents who try to share data between apps in their ecosystems, and there will also be significant internal political resistance to doing so. In short, incumbents are not organizationally architected for this world.

Startups that are architected with this intention from the beginning, and that know it is a priority both to own an entire end-to-end process and to centrally store the data from that process so it can be used to make models better, will have an advantage. I think they’ll also have more leeway on security and privacy concerns to begin with, because they’ll be operating at a much smaller scale.

Of course, this kind of integration over a process is time-consuming and expensive. Startups can’t afford to build everything at once. But I think startups that begin with a vision of eventually controlling a process in this way are a good bet.

AI will make progress where science has struggled

Science is about creating simple, causal explanations of the world so that we can make good predictions. But science has had a hard time in certain domains where simple, causal explanations seem to elude us: psychology, the social sciences, economics, and more. Despite centuries of research and little consistent progress, we keep looking for scientific explanations in these areas because we have no good alternative.

AI changes this equation. It allows us to make predictions about parts of the world that we can’t yet explain with science. AI can screen for cancer now. It can also predict things like who is likely to be diagnosed with anxiety or depression, or, in theory, which interventions or practitioners are most likely to heal it. It can do this without needing any definitive, universal scientific explanation for what anxiety is and how it arises, which decades of research have failed to find.

Interestingly, if AI can learn to be a good predictor of something like anxiety, then at some level it has encoded at least part of the explanation for anxiety inside its network. Neural networks are hard to examine, but they are easier to examine than brains, so we might be able to find good, simple scientific explanations for hard-to-study phenomena by first learning to predict them with AI.

We might also find that the simplest possible explanation for a complex biopsychosocial phenomenon like anxiety is actually close to the size and complexity of the neural network that can predict it. If we learn to interpret neural nets and print out their decision-making processes, we might find that the explanation for something like anxiety is the size of a novel, or a textbook, or ten textbooks put together.

Nature doesn’t guarantee that simple explanations exist, even though we, as humans, are by nature attracted to them. This could represent the full-circle journey of science. At first, it tossed out human intuition and human stories in favor of equations and experiments. In the end, it might turn out that intuition and storytelling were the best ways for our minds to predict and explain things that are beyond the ability of our more limited rational minds to comprehend. That would be something, indeed.

Comments

don't you mean "vertically integrate"?

this is super insightful!!!

@vidy I went back and forth between vertically and horizontally. the definition i'm working off of is "vertically integrate" means anything that a company would normally pay a supplier or vendor for, and "horizontally integrates" means anything that a company's customers would normally pay someone else for.

but i could be wrong!

@danshipper oh that makes a lot of sense!!

@vidy @danshipper There might be various interpretations but I also thought it should be vertical integration. The way that I think of it is that in software consumers are often the one stitching together vendors and suppliers. If you buy an iPhone, Apple must figure out how to get all the physical components into the phone but if you use Midjourney, you have to figure out how to touch up, crop, and publish the image.

"'horizontally integrates' means anything that a company's customers would normally pay someone else for."

I interpreted that as being alternatives to the company itself since the customer might pay someone else to solve the same problem e.g. Airbnb expanding to operating hotels would be horizontal.

Anyway, great piece as always! I think being able to build with AI, speak to it as a writer and business thinker reveals truly unique insights.

This is an insightful article. Read on 4/25/2023.

"Startups that are horizontally integrated over a process will be dominant"

"In other words, they started out as an API-only, but realized that the best way to improve performance was to integrate forward over more layers of the value chain so that they could get direct access to their customers' data."

Memo to myself: https://share.glasp.co/kei/?p=TolNDPEmLMj1NH2uJ554

Arrived here for the "first part" required to the "AI ChatBot Workshop" and stayed for the cookies.

@tomas.flores.art welcome!!