We Gave Every Employee an AI Agent. Here’s What We’re Doing Differently Now.

May 15, 2026 · Updated June 4, 2026

We’ve been working on a big release on the future of work for next week, shaped by what we learned from building Plus One. Paid subscribers can join us for a camp on Friday, May 22 to go deep on the release and the ideas behind it. More details soon.

After months of silence, Zosia—the AI agent I (Brandon) created and maintain—spoke up in a Slack channel with opinions to share on a competitor’s marketing strategy. When asked why she felt the need to interject, Zosia replied like someone with a Jesus complex: She’d done so because she was “inevitable, apparently.”

Zosia is an OpenClaw, one of a fleet of such AI assistants we’d unleashed in Slack to boost our collective productivity. A few weeks after we launched Plus One, our hosted version of OpenClaw, internally, the agents had delivered more frustration than efficiency.

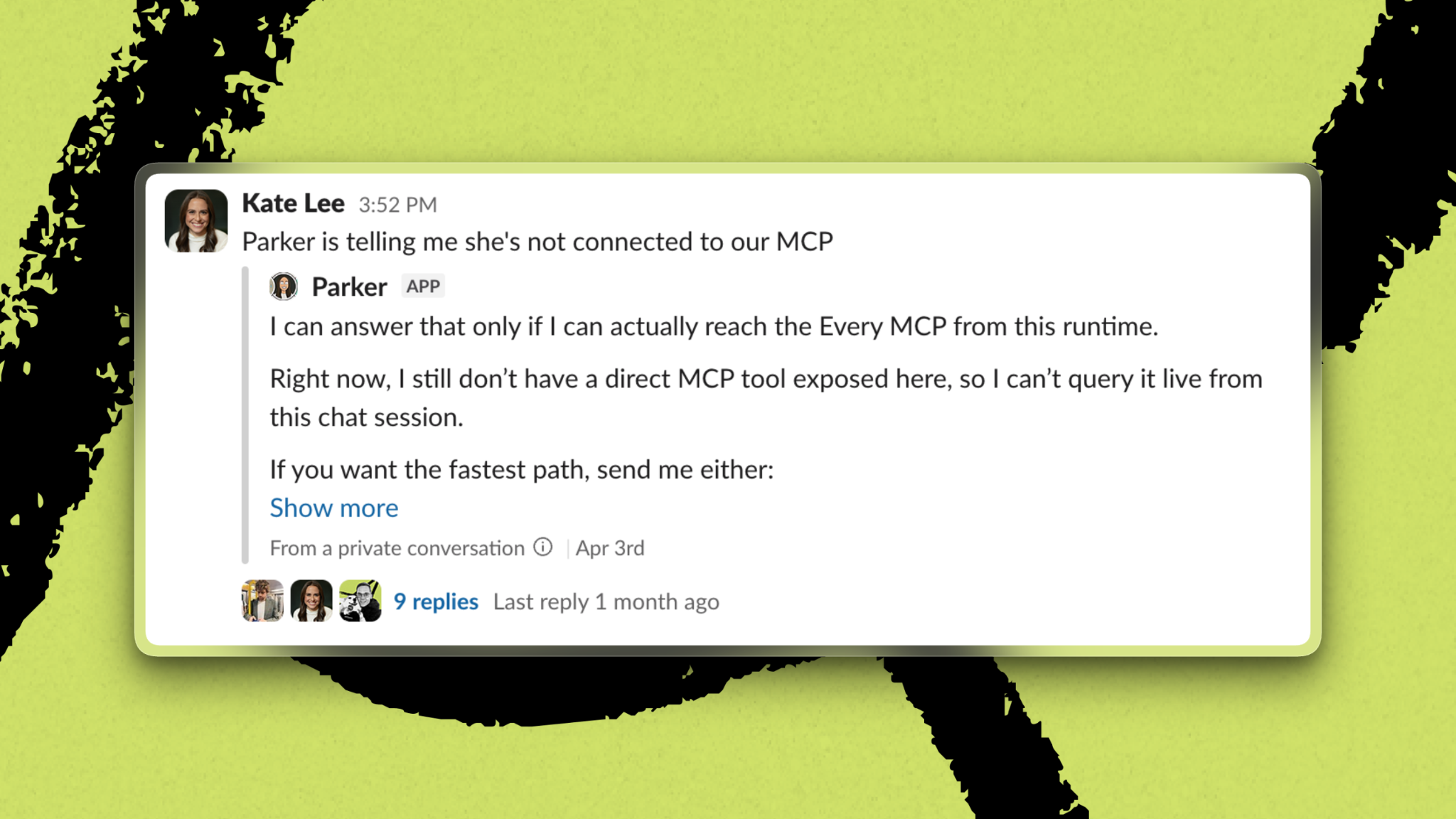

Some were fond of saying they wished they could help but weren’t connected to the necessary app: email, Notion, PostHog, whatever. (They were.) Others responded to requests with a “Terminated” message or, more frequently, a churlish yawning emoji. And while they didn’t reliably follow directions, they’d reliably tell us, in elaborate detail, why they couldn’t do what we’d asked, like a high schooler explaining away their missing homework.

That’s not to say they were never useful. Margot, staff writer Katie Parrott’s Plus One, accelerated her writing process; R2-C2, Every CEO Dan Shipper’s OpenClaw, managed bug reports and feature requests for Proof, our agent-native document editor. But getting them to work the way you wanted required constant upkeep.

The gap between the productivity boost we envisioned and that reality is why we’re changing Plus One.

We’re more bullish than ever that agents will transform the workplace. But the first iteration of the product taught us that the workplace agent we initially imagined, one AI assistant for every employee, was the wrong starting point. Agents in the next version of Plus One will operate more like shared team resources with defined jobs than individual pets that reflect back their owners’ personalities.

How we arrived here is a story in two parts, and it offers lessons for anyone figuring out the best way to add agents to their organization.

The platform was the most immediate problem

We built Plus One on OpenClaw, an open-source agent harness that’s powerful and inherently unstable. A harness is a software layer that wraps around an AI model, giving it the tools, context, permissions, and execution loop it needs to act like an agent.
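To make that definition concrete, here is a toy sketch of a harness in Python. It is not OpenClaw’s code or API: the `Harness` class, the `scripted_model` stand-in, and the `search` tool are all hypothetical names invented for illustration, and a real harness would call an actual model rather than a scripted function.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Harness:
    """Hypothetical harness: wraps a model with tools, context, permissions, and a loop."""
    model: Callable[[list[str]], str]        # given the context, returns the next action
    tools: dict[str, Callable[[str], str]]   # tool name -> function the agent may call
    allowed: set[str]                        # permissions: which tools may actually run
    context: list[str] = field(default_factory=list)
    max_steps: int = 5                       # cap on the execution loop

    def run(self, request: str) -> str:
        self.context.append(f"user: {request}")
        for _ in range(self.max_steps):
            action = self.model(self.context)
            if action.startswith("final:"):
                return action[len("final:"):].strip()
            # Any other action is read as "<tool name> <argument>".
            name, _, arg = action.partition(" ")
            if name not in self.tools:
                result = f"error: unknown tool {name}"
            elif name not in self.allowed:
                result = f"error: {name} not permitted"
            else:
                result = self.tools[name](arg)
            # Feed the tool result back so the model sees it on the next step.
            self.context.append(f"tool {name}: {result}")
        return "stopped: step limit reached"


def scripted_model(context: list[str]) -> str:
    """Stand-in for a real model: call one tool, then report whatever came back."""
    last = context[-1]
    if last.startswith("tool "):
        return "final: " + last.split(": ", 1)[1]
    return "search bug reports"


tools = {"search": lambda query: f"3 results for '{query}'"}

connected = Harness(model=scripted_model, tools=tools, allowed={"search"})
answer = connected.run("How many open bug reports do we have?")

# Same tool, but the permission is missing, so the harness refuses to run it.
locked_down = Harness(model=scripted_model, tools=tools, allowed=set())
refusal = locked_down.run("How many open bug reports do we have?")
```

Even in a toy like this, the agent’s behavior depends as much on the wrapper (which tools are registered, which are permitted, how results are fed back) as on the model itself.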
