Hi all! Today we are trying something new. I’m calling it an “idea review.” The goal is to examine a book for its hypothesis and conclusions, and leave the examination of the book’s writing craft to more capable hands. Please leave any feedback in the comments below.

If you are new here, this is Napkin Math, a data-driven newsletter with 17k+ readers that examines the fundamentals of technology and business. Today we are looking at the complexity that is Facebook and whether the company can be fixed.

What would you be willing to pay to stop your imminent death?

For most people, the answer is simple and brief. “Anything.” Money is trivial in comparison with experiencing one more sunrise, another kiss from your spouse, a great big bite of your favorite meal. It is clear that no number in a bank account is worth saving when compared to the things that actually matter.

Next, allow me to ask: how much are you willing to pay for a total stranger not to die? This answer is decidedly more complicated. My Mormon readers may cite their scripture that “the worth of souls is great in the sight of God” and say they would be willing to pay anything. My more actuarially inclined readers would cite the U.S. government’s official estimate of about $10M for a statistical life. The most honest of readers (atheist, religious, or otherwise) would say they wouldn’t spend more than two and a half grand to save a life, the average amount given to charity per household in America.

The balancing act between what we spend and the harm we prevent is ever-present in our individual decisions. What we are willing to pay to fix problems is a question that each of us has to answer for ourselves.

This complicated calculus is the core tension of Facebook. Facebook spent $3.7B in 2019 to rid the platform of misinformation that has life-and-death consequences, but many of us have the sense that the net impact of Facebook on society is still negative. Perhaps the company needs to spend more to save more lives. But how much?

It is a platform that undeniably does an incredible amount of good for its 2.89B users. Throughout COVID it allowed the planet to stay connected. During the Black Lives Matter protests last year, Facebook’s apps were all prominently used to share stories that built empathy for the cause. Facebook messaging apps are used to organize little girls’ birthday parties, dog adoptions, and a whole menagerie of the cutest little events you can think of. Even on the commercial side, Facebook’s targeted ad tools allow mom-and-pop businesses to compete (and win!) against the powerful ad teams at major corporations. The entire direct-to-consumer industry owes a large portion of its existence to the company. These examples of good could go on for dozens of pages.

For investors, it is also a net good! Facebook is one of the greatest money-making machines of all time. It recently hit a staggering one-trillion-dollar valuation, posted $10.3B in net income last quarter, and is one of the best-performing stocks of the last 10 years.

And yet.

The company has a cost.

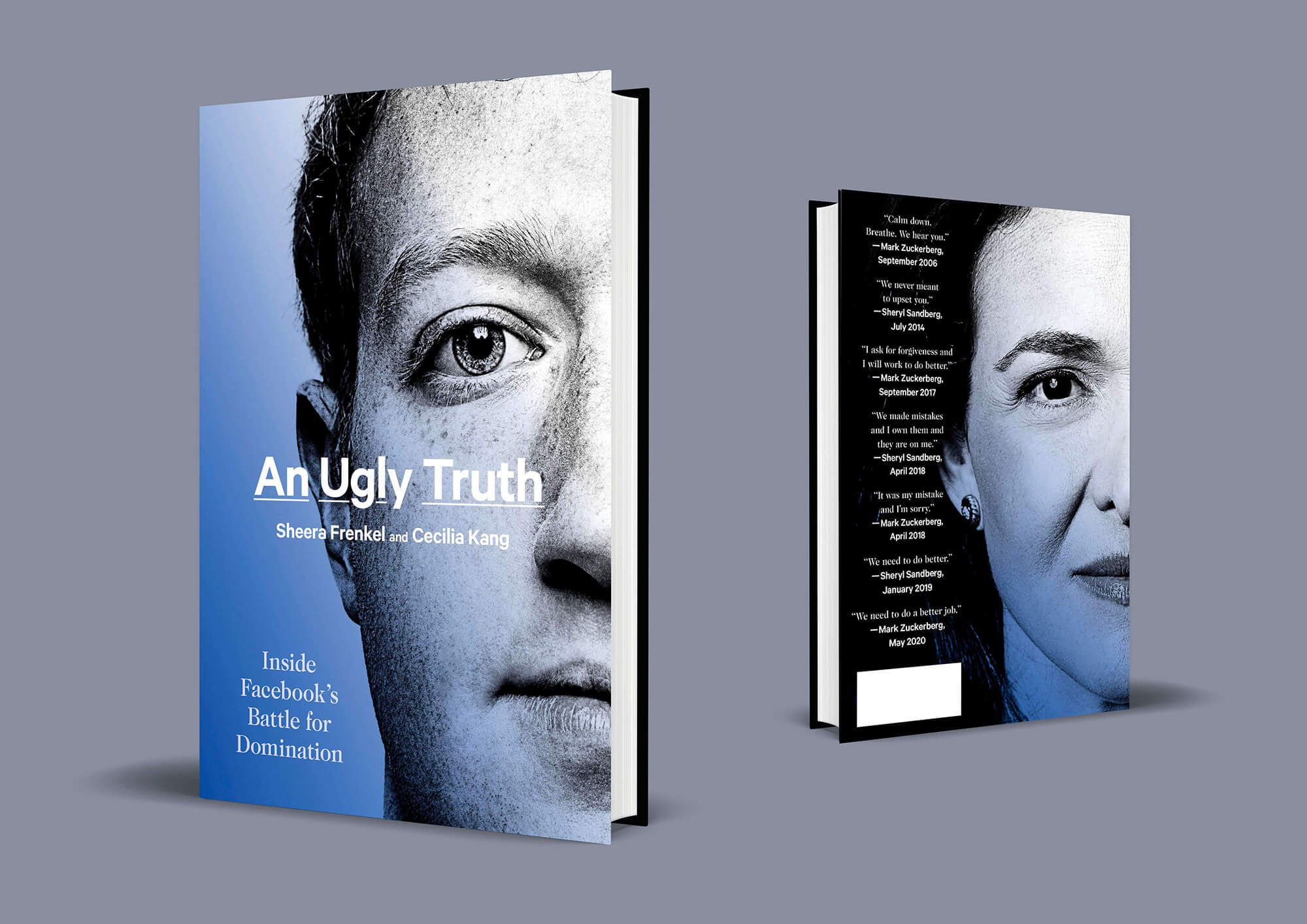

The great balancing act between good and harm on Facebook is the focus of the new book An Ugly Truth: Inside Facebook’s Battle for Domination. The two authors, both employees of the New York Times, conducted interviews with more than 400 sources to inform the book. They review the major scandals of the last four years, giving never-before-heard details on how the company behaved. It is a stunning achievement of reporting. The breadth of their access, coupled with sources at the very highest levels, allows them to paint a fairly damning picture of the company's actions related to some of the scandals referenced above.

For example, they reveal the level of failure that took place in Myanmar. Four years before the genocide in 2017, multiple experts met with Facebook to warn of the rising racial tensions. The local populace, which so strongly associated the internet with Facebook that the words were used interchangeably, was hooked on the app. Much of what was being shared was recipes, photos of family, ya know, the cute stuff. It also included posts like, “One second, one minute, one hour feels like a world for people who are facing the danger of Muslim dogs.”

This content wasn’t being caught by the moderators. Shockingly, when the company expanded, it had only 5 content moderators, who spoke only Burmese, for a country of 54 million. There are over 100 languages spoken by the local populace and more dialects on top of that. To help make moderation more efficient, the company translated its community guidelines into Burmese (they weren’t in the local language before then) and gave users stickers they could place on content to help moderators find the bad stuff. The stickers didn’t quite work:

“The stickers were having an unintended consequence: Facebook’s algorithms counted them as one more way people were enjoying a post. Instead of diminishing the number of people who saw a piece of hate speech, the stickers had the opposite effect of making the posts more popular.”
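To see why this backfires, here is a napkin-sized sketch. It is not Facebook’s actual ranking code; the `Post` fields, both scoring functions, and all the numbers are hypothetical, chosen only to show how a report signal that is counted as engagement can push a flagged post up the feed instead of down.

```python
from dataclasses import dataclass


@dataclass
class Post:
    text: str
    likes: int = 0
    shares: int = 0
    flag_stickers: int = 0  # stickers users attach to report the post


def naive_engagement_score(post: Post) -> int:
    # Hypothetical scorer: every interaction counts as "enjoyment",
    # including the stickers that were meant as reports.
    return post.likes + post.shares + post.flag_stickers


def flag_aware_score(post: Post) -> int:
    # Hypothetical alternative: report stickers count against the post.
    return post.likes + post.shares - post.flag_stickers


def rank(posts, score):
    # Highest score first, as in a feed sorted by engagement.
    return [p.text for p in sorted(posts, key=score, reverse=True)]


# Made-up numbers for illustration only.
recipe = Post("family recipe", likes=40, shares=5)
hate = Post("hate speech", likes=30, shares=5, flag_stickers=20)

print(rank([recipe, hate], naive_engagement_score))
print(rank([recipe, hate], flag_aware_score))
```

With these made-up numbers, the naive scorer puts the flagged post on top because its 20 report stickers are added to its total, while the flag-aware scorer puts it at the bottom.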

The situation continued to escalate, resulting in 25K deaths and 700K refugees fleeing the country. The company apologized and never faced any consequences for its role in this calamity.

But Myanmar wasn’t the only failure. Here’s a lowlight list from the last few years:

- The January 6th riots in Washington were partially driven by discussion that took place on Facebook (according to leaked company documents).

- Russian interference in U.S. elections was initially denied, then slowly, painfully acknowledged.

- The company blocked political dissidents in Turkey at the request of its authoritarian government.

- Its automated ad system sold ads for illicit drugs and fake coronavirus vaccines.

- In one of many breaches, 533 million users’ phone numbers were stolen and put on the dark web.

- Facebook groups were used by human smugglers to convince desperate migrants to cross borders.

To be clear—this isn’t an exhaustive list. There are many, many more of these stories.

Comments

Judging by the arguments you've highlighted, it seems to me authors of this book were looking for a fancy way to compel Facebook into further full-scale censorship of what people post.

You can't throw the baby out with the bathwater.

When you moderate people's posts, you're essentially taking their freedom of speech away. You're knowingly or unknowingly creating a centralised system where stories, opinions, and information that don't suit the narrative of those who pay the moderators won't see the light of day.

We've seen this already.

On one end are practicing doctors, sharing their experiences and recommendations for fighting what they consider regular symptoms 'declared as a pandemic.' And on the other end are 'experts,' exalted scientists, and businessmen who think what they say should be taken hook, line, and sinker (without question) because they are whatever they claim to be.

Today, one group is censored. The other can say whatever they like, even when they are not necessarily doctors. Even if what they recommended lacks any atom of common sense, let alone evidence-based science.

All possible because of moderation.

But all of these only touch on the symptoms and not the root causes. If I were these authors, I'd worry more about the root causes.

Per my observation, here are just some of them.

The root cause is the death of personal responsibility - people can post or say what they like; accepting or rejecting what they say or post is your responsibility, not theirs.

It is the gradual death of individuality and conservatism in favor of group think - people have traded their dignity to identify with groups whose underlying agenda they have no idea of.

It is because more than ever before, people are now outsourcing their common sense and their God-given thinking ability to 'experts' and 'celebrities' - just because someone with more money or followers says it or approves it doesn't mean it's right.

It is because we're happy to applaud grandiose exclamations without inquiring about the long-term costs.

I could go on and on.

As you rightly said, neither churches nor the most religious institutions could fix human beings' behaviours.

So how do these authors expect Facebook to do that? And to think they're subtly pushing for them to do this via more moderation is the equivalent of asking for something grandiose without examining the long-term costs.

Imagine there were moderators for this book. And those moderators were all under Facebook's payroll.

Do you think they would've approved its publication?

Questions!!!
