Not every step in an AI workflow needs the smartest AI. That may sound obvious, but it’s not how most people are working. The default is to route entire tasks through frontier models, which is expensive, slow, and usually unnecessary. Incremental determinism starts from a different question: How much intelligence does this task really need? The answer is almost always less than you’d expect, and the savings add up. - Mike Taylor

There is a reason McDonald’s would never ask its CEO to man the burger grill: It would cost the company $9,230.77 an hour. Using frontier AI models for every task is the same mistake. You don’t need to pay 75 cents every half hour ($1,095 per month!) for Claude Opus to check your to-do list in OpenClaw.
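The monthly figure is simple arithmetic. Here is a quick check, assuming the check runs around the clock and that a month averages 365/12 days (both are assumptions; the text only gives the per-check and per-month numbers):

```python
# Cost of one to-do-list check, taken from the text above.
COST_PER_CHECK = 0.75   # dollars
CHECKS_PER_HOUR = 2     # one check every half hour

# Assumption: the agent runs 24 hours a day, every day of the year.
cost_per_day = COST_PER_CHECK * CHECKS_PER_HOUR * 24
cost_per_month = cost_per_day * 365 / 12

print(f"${cost_per_day:.2f} per day, ${cost_per_month:,.2f} per month")
# prints "$36.00 per day, $1,095.00 per month"
```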

This tension isn’t really about the pricing of AI models. It’s about the value of human attention. Now that you have a cheaper alternative for many tasks that used to require your attention, you need to figure out how to deploy AI in a way that frees up your most expensive model: you. Most businesses are getting this balance wrong in both directions: overpaying for AI on simple tasks and underusing it on ones that would free up their best people.

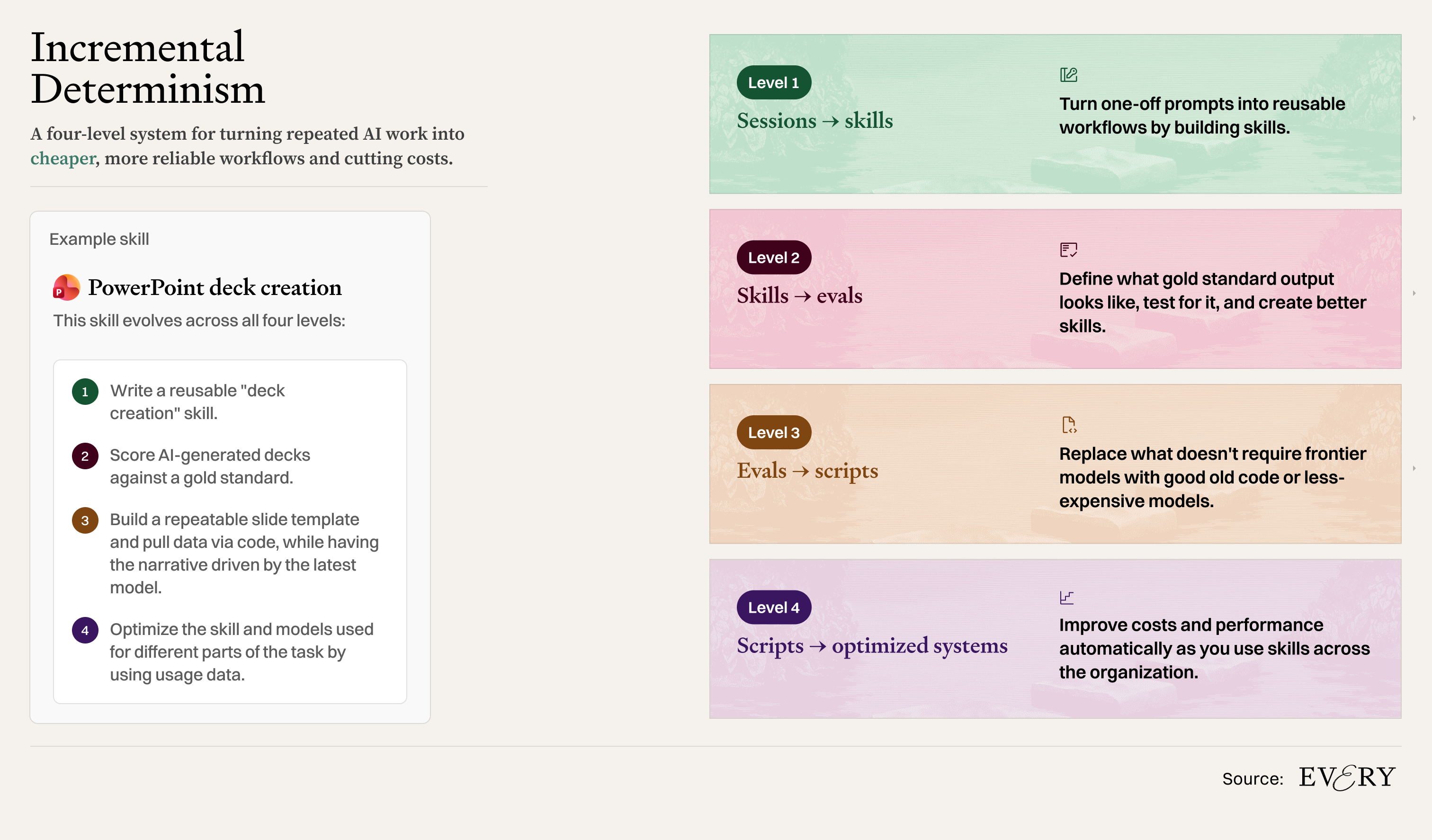

The solution is a process of optimization that I call incremental determinism. Every time you repeat a task, build it into a repeatable process by creating a skill file. Identify which parts of that process need the most expensive model, which can be delegated to cheaper, less powerful models, and which tasks repeat often enough to justify turning them into reusable code. And finally, get better at delegating so you can stay focused on the work that needs you.

I call it incremental determinism because the more you repeat a task, the more it pays to nail down exactly how it should be done. The first time, you figure the task out as you go, but after doing it a few times, you can document the best approach. “Deterministic” is a programming term for code that always produces the same output given the same input. The goal is to push as much of your workflow towards that end of the spectrum as possible, because deterministic steps are faster, cheaper, and more reliable. The tradeoff is the upfront investment needed to systematize the task.
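One way to reason about that tradeoff is a break-even count: how many runs it takes before the upfront work has paid for itself. The sketch below is only an illustration. The function name and all the dollar figures are hypothetical, and it ignores the value of your own time.

```python
import math

def break_even_runs(upfront_cost: float,
                    cost_per_run_before: float,
                    cost_per_run_after: float) -> int:
    """Number of runs after which systematizing a task has paid for itself."""
    saving_per_run = cost_per_run_before - cost_per_run_after
    if saving_per_run <= 0:
        raise ValueError("the systematized version must be cheaper per run")
    return math.ceil(upfront_cost / saving_per_run)

# Hypothetical numbers, for illustration only.
runs = break_even_runs(upfront_cost=50.0,
                       cost_per_run_before=2.0,
                       cost_per_run_after=0.5)
print(runs)  # prints 34
```

If you expect to run the task fewer times than the break-even count, leaving it as an ad hoc session is probably the cheaper choice.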

There are four levels for achieving this balance and optimizing AI costs. Depending on your technical fluency, you don’t have to go all the way to the final level, but understanding how the levels build on each other will help you control AI costs across your entire organization.

Level 1: Turn sessions into skills

The first level is the easiest. Let’s say you are often asking AI to generate a PowerPoint pitch deck. The first step toward systematizing it is to make a skill. A skill can be as simple as a text file detailing how to do a task that the model follows each time it’s asked. It’s the McDonald’s handbook that tells every employee how to make the perfect burger, over and over again. Even less experienced cooks can get a good result.

Once you’re done with the normal back and forth of giving the AI the necessary data and context for the presentation, ask it, “What information would have been useful to know at the start of this task that would have eliminated several steps or mistakes?” Claude knows what it is capable of, so you can ask it to turn its response into a PowerPoint deck creation skill to use next time. Anthropic has been releasing plugins (collections of skills) for various industries to serve as a starting point. They even provide a “skill-creator” skill that teaches Claude how to guide you through making one when you ask.

Once you have a skill, test it. Ask Claude to test the efficacy of the skill with the following prompt: “Run the task using subagents, one with the skill, one without, and compare the results.” If the skill is doing its job, you should see an improvement in quality, cost, and speed. Now try running it with a cheaper model (“Run this test again with Sonnet/Haiku”) and compare the results. If you’re happy with the output, ask Claude to “Use a subagent with Sonnet/Haiku when calling this skill.” You are using a subagent because you don’t want the expensive model running your main session to be the one executing the task; the separate, cheaper subagent does the work instead. You just cut the cost of running that task by a factor of 10 to 100.

It doesn’t make sense to write skills for throwaway tasks you won’t do again. But if you find yourself doing something for the third time, it’s probably worth formalizing it. If you’re using it multiple times per week, try getting it working with a smaller model.

Level 2: Turn skills into evals

Your team might see your skill and want to use it to create their presentations as well. While it’s easy to share skills across your organization, you’ll have to get them to trust that your skill delivers before they’ll adopt it. For that, you’ll need evidence in the form of evaluation metrics, or evals.

For the simplest eval, gather 10 examples of tasks your skill has been used for—say, the last 10 decks you have made with the skill—and rewrite the output to be the gold standard or best-in-class example of what you’d hope Claude could produce. Now, ask Claude to “Run each test case with subagents and compare the output versus my gold examples.” Make changes to the skill and test if it does better. This is the “LLM-as-a-judge” technique—you’re using a model to grade its own work against your standard.
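If you later want to run this comparison outside a chat session, the loop itself is small. Here is a minimal sketch with a pluggable judge. The judge shown is a plain text-similarity stand-in from Python’s standard library, not a model call, so that the example runs on its own; in practice you would replace it with a function that asks a model to grade the output. All names and sample data are hypothetical.

```python
from difflib import SequenceMatcher
from statistics import mean
from typing import Callable

# A judge takes (candidate_output, gold_output) and returns a score from 0 to 1.
Judge = Callable[[str, str], float]

def similarity_judge(candidate: str, gold: str) -> float:
    """Stand-in judge: character-level similarity, NOT an LLM call."""
    return SequenceMatcher(None, candidate, gold).ratio()

def run_eval(outputs: list[str], golds: list[str], judge: Judge) -> float:
    """Average judge score across all test cases."""
    if len(outputs) != len(golds):
        raise ValueError("need one gold example per output")
    return mean(judge(o, g) for o, g in zip(outputs, golds))

# Hypothetical data: two versions of a skill scored against the same gold examples.
golds = ["Revenue grew 12% year over year.", "Churn fell to 3% in Q2."]
skill_v1 = ["Revenue went up.", "Churn changed in Q2."]
skill_v2 = ["Revenue grew 12% year over year.", "Churn fell to 3% in Q2."]

print(run_eval(skill_v1, golds, similarity_judge))
print(run_eval(skill_v2, golds, similarity_judge))
```

Because the judge is just a function argument, you can change how outputs are graded without touching the loop or the test cases.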

In the spirit of incremental determinism, you should formalize your evals over time, too. Ask Claude to “Break down the patterns between what makes a ‘good’ answer (gold examples) versus the typical output of the skill.” It might say that one pattern for a good answer is following brand guidelines, another pattern is including four to five bullet points of commentary on a specific slide, and a third is calculating the correct numbers.
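Some of those patterns don’t need a model to check at all. The bullet-point pattern, for instance, can be a few lines of code. This sketch assumes bullets are lines starting with "-", "*", or "•", which may not match how your slides are actually represented; the function names and sample slides are made up for illustration.

```python
# Assumption: a bullet is any line that starts with one of these markers.
BULLET_MARKERS = ("-", "*", "•")

def count_bullets(slide_text: str) -> int:
    """Count the lines that look like bullet points."""
    return sum(
        1 for line in slide_text.splitlines()
        if line.strip().startswith(BULLET_MARKERS)
    )

def bullet_count_ok(slide_text: str, minimum: int = 4, maximum: int = 5) -> bool:
    """True if the slide has between `minimum` and `maximum` bullets."""
    return minimum <= count_bullets(slide_text) <= maximum

# Hypothetical slides.
good_slide = """Q2 commentary
- Revenue up 12%
- Churn down to 3%
- Two enterprise deals closed
- Hiring on plan
"""
thin_slide = """Q2 commentary
- Revenue up 12%
"""

print(bullet_count_ok(good_slide))  # prints True
print(bullet_count_ok(thin_slide))  # prints False
```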

Once you have several evals, you can combine them into a single score. Each eval becomes one “judge” that looks at the output from one angle, such as data accuracy, and returns a score. You can weight each judge based on how much that dimension matters to you, then take the weighted average of the scores. This “panel-of-judges” approach lets you track overall quality as a single number. The on-brand eval might be worth 40 points to you, the correct numbers could be 50, and the bullet points worth 10. Each prompt you test can then be scored out of 100, allowing you to compare how well one approach works versus another. Claude is a human-level prompt engineer and runs this process as a matter of course if you use the skill-creator skill Anthropic provides.

Let’s come back to our patterns of good output for a PowerPoint deck. Validating the data is more important than whether you’re missing a bullet point or using the right visual components, so you could weight that eval as 60 percent of the overall score versus 20 percent each for the other two. Together, they give you a weighted average score for measuring how well your skill is performing. For companies where getting a pixel out of line is a fireable offense, such as top-tier consulting or finance firms, you can increase the relative weight of the visual eval.
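The weighted average is easy to compute yourself. A minimal sketch using the 60/20/20 split from the paragraph above; the judge names and the individual scores are invented for the example, and each judge is assumed to return a score between 0 and 1.

```python
def weighted_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Combine per-judge scores (each 0 to 1) into a single number out of 100."""
    if scores.keys() != weights.keys():
        raise ValueError("every judge needs exactly one weight")
    total_weight = sum(weights.values())
    return 100 * sum(scores[name] * weights[name] for name in weights) / total_weight

# Weights from the text; the scores are hypothetical.
weights = {"data_accuracy": 60, "brand_guidelines": 20, "bullet_points": 20}
scores = {"data_accuracy": 1.0, "brand_guidelines": 0.5, "bullet_points": 0.0}

print(weighted_score(scores, weights))  # prints 70.0
```

Dividing by the total weight means the weights don’t have to add up to 100, so you can rebalance one judge without adjusting the others.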

Now, you have proof you can share with the team about the impact your changes are making on skills. When the next big model comes out, you can test how much better it does on your benchmark and if it’s worth the extra cost.

Level 3: Turn evals into scripts

When your skill is working reliably, and you’re using it frequently enough that the token cost is starting to feel significant, you need to start thinking about scripts, CLIs, or MCPs. This is where the steps get slightly more technical, but the principle is the same: Replace thinking with a structured process wherever your thinking doesn’t add anything extra.

Every skill, like your PowerPoint deck skill, is a bundle of actions—pull this data, reference our brand guidelines, create a .pptx file—and some of those actions don’t require a smart model. Some don’t even require an LLM at all. Deconstruct your skill into its component parts and hard-code whatever you can. Code costs almost nothing to run and returns in an instant compared to LLMs, so the more of your workflow you can make deterministic, the cheaper and faster it will be.

For our PowerPoint creation task, you can have Opus write the HTML and CSS templates for the slide deck once, then fill them in with code each time you need to create a deck. You can also write a script to pull the right revenue or sales figures from a data source, no LLM involved. The final export step to .pptx format can also be done in code.
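To make the first two pieces concrete, here is a minimal sketch that reads figures from a CSV and fills a fixed HTML template, using only Python’s standard library. The template, the column names, and the numbers are all hypothetical, and the export to .pptx is left out because it depends on the tooling you choose.

```python
import csv
import io
from string import Template

# Hypothetical slide template. In the workflow described above, a model
# would write this once and code would reuse it on every run.
SLIDE_TEMPLATE = Template(
    "<section class='slide'>\n"
    "  <h1>$title</h1>\n"
    "  <p class='metric'>Revenue: ${revenue} USD</p>\n"
    "</section>"
)

# Hypothetical data source; in practice this might be a database or a report export.
SALES_CSV = """quarter,revenue
Q1,120000
Q2,135000
"""

def load_revenue(csv_text: str) -> dict[str, int]:
    """Parse the CSV into a {quarter: revenue} mapping."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row["quarter"]: int(row["revenue"]) for row in reader}

def render_slide(quarter: str, revenue_by_quarter: dict[str, int]) -> str:
    """Fill the template for one quarter. No LLM involved."""
    return SLIDE_TEMPLATE.substitute(
        title=f"{quarter} results",
        revenue=f"{revenue_by_quarter[quarter]:,}",
    )

html = render_slide("Q2", load_revenue(SALES_CSV))
print(html)
```

The same input always produces the same slide, which is exactly the property that makes this step cheap to run and easy to trust.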

For tasks that require some judgment, like checking your deck’s compliance with brand guidelines, don’t jump straight to the most expensive model. Platforms like OpenRouter allow you to call any of the major commercial or open-source models, so you can experiment with the tradeoffs between cost and intelligence. Basic classification and summarization tasks can be done by older models 1,000 times cheaper than Opus with reasonable accuracy. Leave the most challenging tasks, such as the narrative and tailoring the tone to a specific audience, to Opus.
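In code, this kind of routing can be as simple as a lookup table. The sketch below uses placeholder tier and model names rather than real model identifiers, and the task labels are assumptions based on the examples in the paragraph above. It makes no API calls.

```python
# Placeholder names; swap in real model identifiers from your provider.
MODEL_BY_TIER = {
    "small": "small-model",
    "mid": "mid-model",
    "frontier": "frontier-model",
}

# Hypothetical mapping from task type to the cheapest tier assumed to handle it.
TIER_BY_TASK = {
    "classification": "small",
    "summarization": "small",
    "brand_compliance": "mid",
    "narrative": "frontier",
    "tone_tailoring": "frontier",
}

def pick_model(task: str) -> str:
    """Return the model for a task; unknown tasks fall back to the frontier tier."""
    tier = TIER_BY_TASK.get(task, "frontier")
    return MODEL_BY_TIER[tier]

print(pick_model("summarization"))  # prints small-model
print(pick_model("narrative"))      # prints frontier-model
```

Defaulting unknown tasks to the most capable tier is a deliberately conservative choice: you overpay for anything you haven’t classified yet instead of risking a bad result.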

Level 4: Turn scripts into better scripts

In the previous step, you replaced as much LLM thinking as possible with deterministic code, bringing the cost of your PowerPoint skill down 10 to 90 percent compared to only using Opus. But you were only optimizing for your own use. When your skill is running inside a product, creating hundreds of decks a week, cost inefficiencies will again become a problem. For this, you will need to build a process to automate the optimization. Once you have 100 to 200 examples of the skill being used in the real world, a reliable basket of eval metrics, and a clear map of what the skill does at each step, you have everything you need to do so.

The most common tool for this is DSPy, which can automate the prompt engineering process end-to-end. It runs your prompt, looks at the test cases, and rewrites the prompt to arrive at a more accurate outcome, often with a cheaper model. Another common approach is distillation. You use Opus to generate hundreds of high-quality examples that pass your evals, then use those to teach a cheaper model to produce similar results. You can do that by either including the examples in the prompt so Haiku can pattern-match against them, or by fine-tuning the cheaper model directly on the examples. Think of it as a head chef writing such a good recipe that a less experienced cook can follow it perfectly. This process can cost $10, $100, or $1,000, depending on the model and how many test cases you have, but spending $1,000 to save millions in production is worth it.
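The in-prompt version of distillation can be sketched without any special tooling: keep only the examples that scored well on your evals, then put the best few in front of the cheaper model. This is a simplified illustration, not how DSPy works internally. The class, the threshold, and the sample data are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Example:
    task: str
    output: str
    eval_score: float  # 0 to 100, from your own eval panel

def build_few_shot_prompt(examples: list[Example], new_task: str,
                          min_score: float = 90.0, max_examples: int = 3) -> str:
    """Build a prompt for a cheaper model from the highest-scoring examples."""
    passing = sorted(
        (e for e in examples if e.eval_score >= min_score),
        key=lambda e: e.eval_score,
        reverse=True,
    )[:max_examples]
    parts = ["Follow the style and structure of these examples."]
    for e in passing:
        parts.append(f"Task: {e.task}\nOutput: {e.output}")
    parts.append(f"Task: {new_task}\nOutput:")
    return "\n\n".join(parts)

# Hypothetical examples generated by the expensive model and scored by your evals.
examples = [
    Example("Deck for Q1 board meeting", "Slide 1: Q1 headline numbers", 95.0),
    Example("Deck for sales kickoff", "Slide 1: Some loosely related text", 60.0),
]

prompt = build_few_shot_prompt(examples, "Deck for Q2 board meeting")
print(prompt)
```

Fine-tuning follows the same first step, filtering by eval score, but feeds the surviving examples into a training job instead of a prompt.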

More experimental approaches are emerging, too. Andrej Karpathy’s autoresearch runs experiments to optimize a script file against an eval metric over long periods. Researchers wake up to more than 20 experiments run overnight with meaningful performance improvements.

The great enemy at this level is overfitting: The skill or script works well against your eval metric but fails on tasks it hasn’t seen before. It’s “teaching to the test” for LLMs. The evals you built in Level 2 are your main defense against this, because they give you a formal rubric for grading the skill’s performance. Human involvement in the evaluation process is still necessary because we’re better able to catch behavior that goes against the spirit of the game, even if it’s not technically wrong as defined by the rules.
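A common guard against overfitting, borrowed from standard machine learning practice and not something the steps above prescribe, is to hold back a slice of your examples that the optimizer never sees and to compare scores on both sets. A minimal sketch, with a hypothetical 20 percent holdout and a hypothetical 10-point gap threshold:

```python
import random

def split_examples(examples, holdout_fraction: float = 0.2, seed: int = 0):
    """Shuffle reproducibly, then split into (tuning, holdout) lists."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    n_holdout = max(1, int(len(shuffled) * holdout_fraction))
    return shuffled[n_holdout:], shuffled[:n_holdout]

def looks_overfit(tuning_score: float, holdout_score: float,
                  max_gap: float = 10.0) -> bool:
    """Flag a run whose score drops sharply on examples it was not tuned on."""
    return tuning_score - holdout_score > max_gap

# Hypothetical: 100 real-world examples, identified here by number.
tuning, holdout = split_examples(range(100))
print(len(tuning), len(holdout))   # prints 80 20

print(looks_overfit(95.0, 70.0))   # prints True
print(looks_overfit(90.0, 85.0))   # prints False
```

A large gap doesn’t prove the skill is broken, but it is a good signal that a person should look at the holdout outputs before the change ships.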

If you are a manager at a company responsible for AI, you don’t need to know how to implement any of this yourself. What matters is understanding that this optimization layer exists, that it’s what your technical team or tools are doing under the hood, and why the decision to invest in it can pay off.

You are the most expensive model

All of this optimization work takes time and expertise, and your attention is an even more expensive commodity than the latest models. Attention is the key word: The ladder of incremental determinism—sessions, skills, evals, scripts, optimized scripts—gives you a framework for deciding where to invest your attention. Every hour you spend optimizing a skill is an hour you’re not spending on something only you can do.

You don’t need to climb the whole ladder—having reliable skills and evals is more than enough. The point is knowing the rungs exist, so when the cost pressure hits (and it will), you know exactly which lever to pull. If you’re struggling with unreliable or expensive skills but don’t have the capability to build scripts in house, it might be time to bring in someone technical and AI-savvy to do the heavy lifting.

The cost of tokens is falling 90 percent every year for the same level of intelligence, so the task even Opus struggles with today might be easy and cheap in 12 months. Sometimes the smartest move is to overpay now and let the market do the price optimization for you.
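To see what that rate implies, you can project a cost forward under the assumption that the 90 percent annual decline holds steady and compounds smoothly, which is a simplification. The example reuses the $1,095 monthly figure from earlier in this piece; the function name is made up.

```python
def projected_cost(cost_today: float, months: float,
                   annual_decline: float = 0.90) -> float:
    """Cost after `months`, assuming a constant compounding annual decline."""
    return cost_today * (1 - annual_decline) ** (months / 12)

# The $1,095 monthly bill from the to-do-list example, projected forward.
for months in (0, 6, 12, 24):
    print(f"{months:>2} months: ${projected_cost(1095, months):,.2f}")
```

Under these assumptions the bill falls to roughly a tenth of today’s figure after one year and a hundredth after two, which is the case for sometimes waiting rather than optimizing.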

Mike Taylor is the head of tech consulting at Every and a co-author of Prompt Engineering for Generative AI (O’Reilly). Learn more about how Every’s consulting team can bring AI into your organization.

Comments

Are subagents (which can specify which model to use for Skills) exclusive to Claude Code, or can I specify a model with Cowork skills?

@antonacci.michael.d I just tested, and it looks like Cowork can create subagents but can't load them (you could drop the file in a session and use it that way).