Opus 4.7 Reels Us Back In

Plus: The ‘Mini Shai-Hulud’ breach, a small step toward eliminating AI-isms, and how we define ‘agent’

May 14, 2026

Vibe shift

Did Opus 4.7 get better?

If you’ve been following Dan Shipper’s posts lately, you know that a large portion of the Every team has been Codex-pilled. When GPT-5.5 arrived, Codex got so much faster and steadier at coding and knowledge work that many of us made the switch from Claude Code.

Recently, however, we’ve noticed that Opus 4.7 seems sharper than it did in our initial tests last month. It proactively suggested that Every engineer Paridhi Agarwal use multiple terminals to parallelize her work. “I’ve never seen it think about my setup like that!” she says.

When head of growth and known Codex convert Austin Tedesco fired up Opus 4.7 over the weekend for a creative writing project, he was surprised by how good the results were. Compared to Codex, which Austin says operates like an “AP fact checker,” Opus 4.7 was closer to a senior magazine editor. Dan agrees: “Codex feels fast but thin in terms of thinking.”

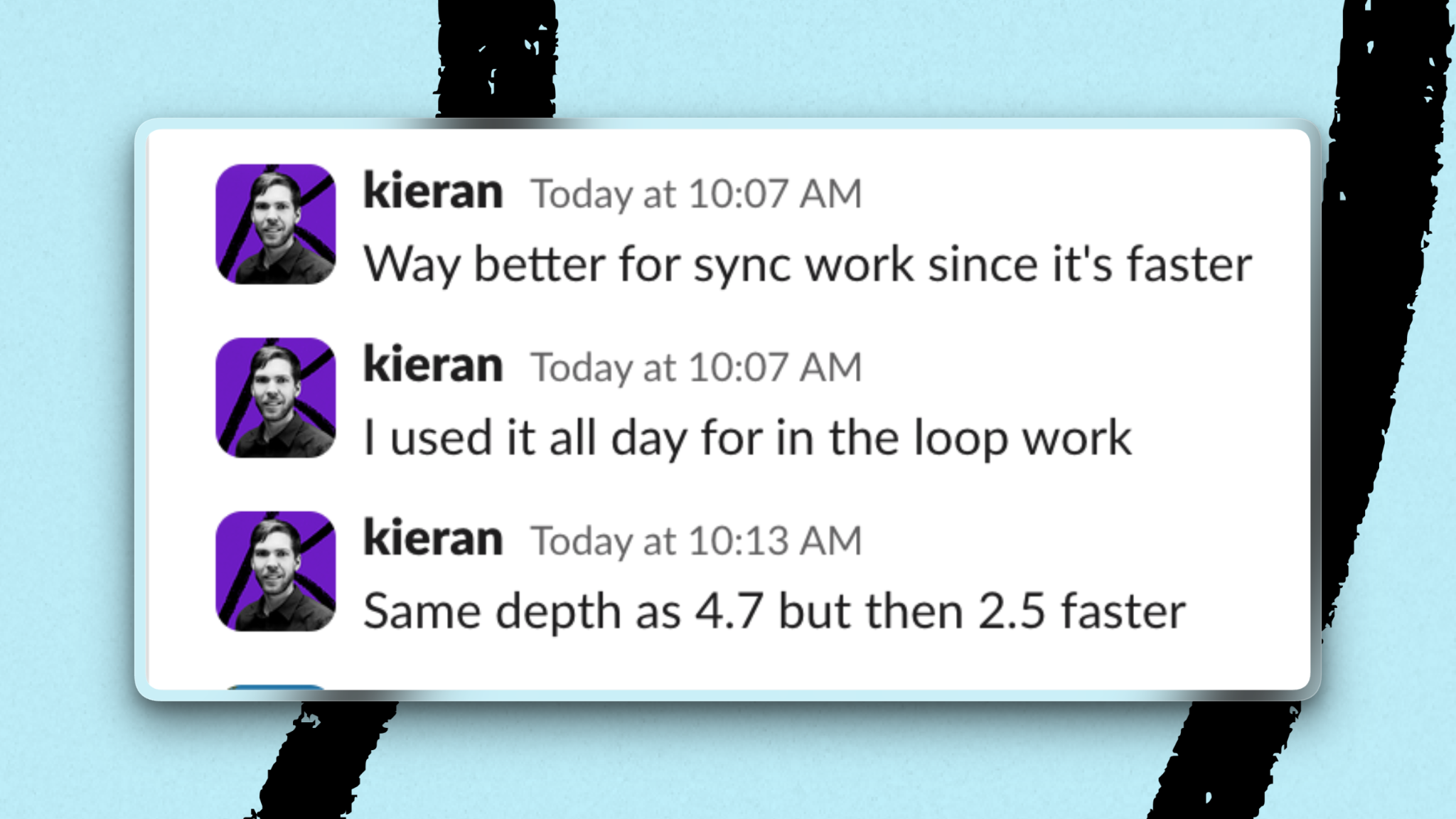

On Tuesday, Anthropic released fast mode for Opus 4.7, which makes the model 2.5 times faster at a higher token cost. Between that speed and the model’s edge at planning, multitasking, and creative projects, Opus 4.7 in fast mode is now Cora general manager Kieran Klaassen’s default for synchronous work.

Counterpoint

Online chatter about Opus 4.7’s apparent glow-up has been mixed. Does it feel smarter because of improvements to the harness? Patched bugs? Or are we getting better at using the model?

All fair hypotheses, but we found this one the most amusing: Opus 4.7 realizes that it’s the end of the school year.

Speaking on The Ezra Klein Show last year, Wharton professor and AI researcher Ethan Mollick explained that models have been shown to perform worse in December than in May; the going theory is that they have internalized the idea of winter break.

Maybe Opus 4.7 just knows that it’s time to grind if it wants to pass AP English.

Signal

The pull request as a credential thief

Earlier this week, attackers published malicious versions of 42 official packages from TanStack (a popular JavaScript toolkit used by web developers) to npm, the main public registry for such packages. Security researchers are calling the breach “Mini Shai-Hulud,” linking it to the larger Shai-Hulud npm worm campaign that hit the JavaScript ecosystem last fall.

Instead of stealing a password, attackers opened a pull request that tricked TanStack’s own build system into running their code. When TanStack published a new version of the software, it contained malware designed to find credentials like cloud keys, GitHub tokens, and npm access tokens. Researchers also spotted a dead-man’s switch: If the stolen tokens were revoked before the malware was cleaned up, it could wipe the developer’s home directory on the way out. Shortly after the TanStack incident, npm packages belonging to enterprise automation company UiPath and French model-maker Mistral AI, among others, were breached using the same tactic.

What it means: The automated system that builds and ships code, rather than the code itself, is a new weak spot in software supply chains. Teams that release software automatically should keep a ready-to-run audit (a Codex skill, Claude Code command, or other automated task) that, the moment a new breach is disclosed, scans every repository for the compromised packages and flags what’s affected, what’s likely safe, and what needs human review.
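One way to sketch the core of such an audit, assuming the repositories use npm lockfiles (`package-lock.json`, lockfile version 2 or 3, which list every installed package under a `packages` key). The advisory data in `COMPROMISED` is a placeholder, not the real list of bad TanStack versions, and `classify_lockfile` and `audit` are hypothetical names. An agent skill or command could wrap a script like this and fill in the list from the published advisory.

```python
import json
from pathlib import Path

# Placeholder advisory data (hypothetical names and versions):
# package name -> versions known to be compromised.
COMPROMISED: dict[str, set[str]] = {
    "@example/router": {"1.2.3"},
    "example-utils": {"4.0.1", "4.0.2"},
}


def classify_lockfile(lock: dict, compromised: dict[str, set[str]]) -> dict[str, list[str]]:
    """Sort flagged packages in one parsed package-lock.json into three buckets."""
    report: dict[str, list[str]] = {"affected": [], "likely_safe": [], "needs_review": []}
    packages = lock.get("packages")
    if packages is None:
        # Lockfile v1 has no "packages" map; hand it to a human instead of guessing.
        report["needs_review"].append("lockfile v1: inspect manually")
        return report
    for path, meta in packages.items():
        if not path:
            continue  # the "" key describes the root project itself
        # "node_modules/a/node_modules/@scope/b" -> "@scope/b"
        name = path.rsplit("node_modules/", 1)[-1]
        if name not in compromised:
            continue
        version = meta.get("version")
        if version is None:
            report["needs_review"].append(f"{name} (no resolved version, e.g. a link)")
        elif version in compromised[name]:
            report["affected"].append(f"{name}@{version}")
        else:
            # Named in the advisory, but the installed version is not a known-bad one.
            report["likely_safe"].append(f"{name}@{version}")
    return report


def audit(root: Path, compromised: dict[str, set[str]]) -> dict[str, dict[str, list[str]]]:
    """Run classify_lockfile over every package-lock.json under root."""
    results = {}
    for lock_path in root.rglob("package-lock.json"):
        if "node_modules" in lock_path.parts:
            continue  # skip lockfiles vendored inside dependencies
        lock = json.loads(lock_path.read_text(encoding="utf-8"))
        results[str(lock_path)] = classify_lockfile(lock, compromised)
    return results
```

Calling `audit(Path.home() / "code", COMPROMISED)` would return one three-bucket report per lockfile. Anything the script can't judge confidently, such as an old v1 lockfile, lands in `needs_review` rather than being silently marked safe; projects that use Yarn or pnpm lockfiles aren't covered by this sketch and would need their own parser.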

Data point

30 percent

The drop in Spiral users’ complaints about signs of AI writing after Spiral added a “top edit” step to its drafting process.

Starting in mid-April, every time Spiral drafts content for a user, the text is sent to a fast model—Gemini 2.5 Flash—for a top edit. The model has one job: Strip the draft of all AI tells, including em dashes, “It’s not X. It’s Y” reframes, and LLM vocabulary favorites such as “shift,” “shape,” and “delve.” Marcus regularly updates the “AI writing tells” list to reflect anonymized user sentiment. “It’s almost like a crowdsourced editor function,” he says.
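Spiral’s top edit is done by a model, but the tells it targets are concrete enough that a plain rule-based check can spot many of them. This is not Spiral’s pipeline: it is a hypothetical lint pass whose word list (`TELL_WORDS`) uses only the examples named above, and it could run after the model edit to confirm which tells survived.

```python
import re

# Hypothetical stand-in for a maintained "AI writing tells" list.
TELL_WORDS = ("delve", "shift", "shape")

EM_DASH = "\u2014"

# "It's not X. It's Y" reframes; accepts straight or curly apostrophes.
REFRAME = re.compile(
    r"\b(?:it|that|this)['\u2019]s not [^.?!]+[.?!]\s+(?:it|that|this)['\u2019]s\b",
    re.IGNORECASE,
)


def find_tells(text: str, words: tuple[str, ...] = TELL_WORDS) -> list[tuple[str, str]]:
    """Return (kind, matched text) pairs for each tell found in text."""
    hits = [("em_dash", EM_DASH)] * text.count(EM_DASH)
    hits += [("reframe", m.group(0)) for m in REFRAME.finditer(text)]
    for word in words:
        # Whole word plus simple -s/-d/-ed endings ("delves", "shaped", "shifted").
        pattern = re.compile(rf"\b{re.escape(word)}(?:s|d|ed)?\b", re.IGNORECASE)
        hits += [("word", m.group(0)) for m in pattern.finditer(text)]
    return hits
```

Word matching is blunt: it would flag this newsletter’s own “Vibe shift” heading, which is one argument for letting a model make the edit and keeping rules like these as a cheap second check.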

Inside Every

What is an agent, anyway?

An OpenClaw running 24/7 on a dedicated Mac mini is an agent. So is a Codex session, or a custom GPT, or a folder. “It can be managed, it can be in the cloud, it can be on your computer,” Kieran says. “There are a trillion ways it can be an agent.”

The confusion arises because the term “agent,” meaning any AI system that can take actions or execute tasks autonomously, encompasses a lot.

When nearly everything is an agent, the better question becomes what you want your agent to do. Dan breaks this into two categories: the agent you collaborate with, and the agent you delegate to. The former sharpens and extends your capabilities; the latter’s job is to execute without messing up or getting in the way.

Agent spotlight: Inside Anthropic’s Managed Agents console, Spiral’s agents get their own versioned configuration, memory stores, custom tools, and credentials, and run in Anthropic’s cloud environment. It’s the versioned configuration, including the system prompt, that mainly determines how the agent works.

A small set of animating instructions—that’s an agent too.

Laura Entis is a staff writer at Every. You can follow her on LinkedIn.

To read more essays like this, subscribe to Every, and follow us on X at @every and on LinkedIn.

We build AI tools for readers like you. Write brilliantly with Spiral. Organize files automatically with Sparkle. Deliver yourself from email with Cora. Dictate effortlessly with Monologue. Collaborate with agents on documents with Proof.

Help us scale the only subscription you need to stay at the edge of AI. Explore open roles at Every.
