Most people can’t imagine switching away from ChatGPT—it “knows them so well” thanks to its memory feature. Mike Taylor’s view is the opposite: Memory has more disadvantages than advantages. He introduces a concept he calls “context rot,” the slow buildup of stale preferences, errors, and contradictions in an LLM’s memory that quietly degrades your results. His real-life examples are as hilarious as they are insightful—ChatGPT trying to make a basic website feature “as dope as possible” thanks to a Kanye quote in his custom instructions and serving him BBQ rib advice suspiciously tailored to his Hoboken zip code. Sometimes it’s better to forget.—Kate Lee


Memory is frequently described as ChatGPT’s “killer feature.” Many people tell me they can’t switch to Gemini or Claude because the OpenAI tool “knows them so well.”

I have memory turned off.

The memory feature allows ChatGPT to save and recall information it thinks is important about you, as well as reference past chats to shape its responses. While I can see how this could make a “helpful assistant” more helpful, I don’t use it.

My background is in internet marketing, where it was common to open Google in incognito mode so you didn’t bias the results when checking a client’s ranking. Because Google search results are personalized, your client would show up first if you searched from your own account: you clicked on their site so often that Google learned you liked it. I have the same issue today on Spotify: the algorithm recommends both Rage Against the Machine and the K-Pop Demon Hunters soundtrack, because my six-year-old daughter shares my account.

The argument for turning off memory is the same. I want unbiased results from ChatGPT, based on context that I carefully curated and put in the prompt, so I know how it made its decision. With memory, anything from your past chats could affect the results in ways that are hard to predict.

While the memory feature might be worth the loss of control for most users of ChatGPT, it can lead to unexpected and difficult-to-diagnose problems. Hear me out as I explain the problems you might run into, and hopefully, I’ll convince you to be careful with memory.

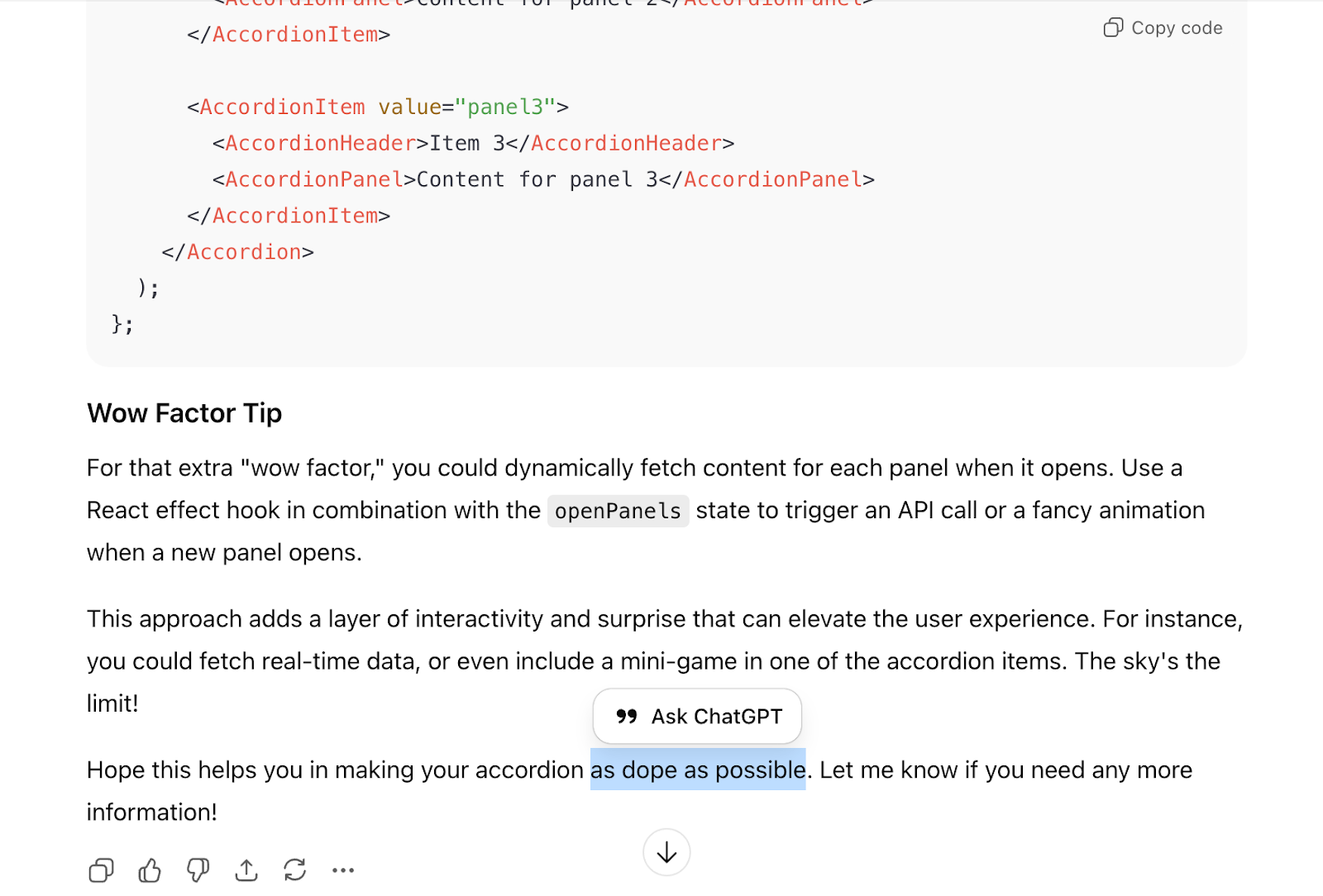

Kanye in context

Before memory was released, I was experimenting with “custom instructions,” which allowed you to tell ChatGPT how you wanted it to respond. This was a primitive form of memory, simply a text document you could update to shape ChatGPT’s identity around your personal preferences. Among other things, I had inserted an old (read: pre-meltdown) Kanye West quote that I thought would steer ChatGPT away from its generic responses:

“For me, first of all, dopeness is what I like the most. Dopeness. People who want to make things as dope as possible. And, by default, make money from it. The thing that I like the least are people who only want to make money from things whether they’re dope or not. And especially make money at making things as least dope as possible.”

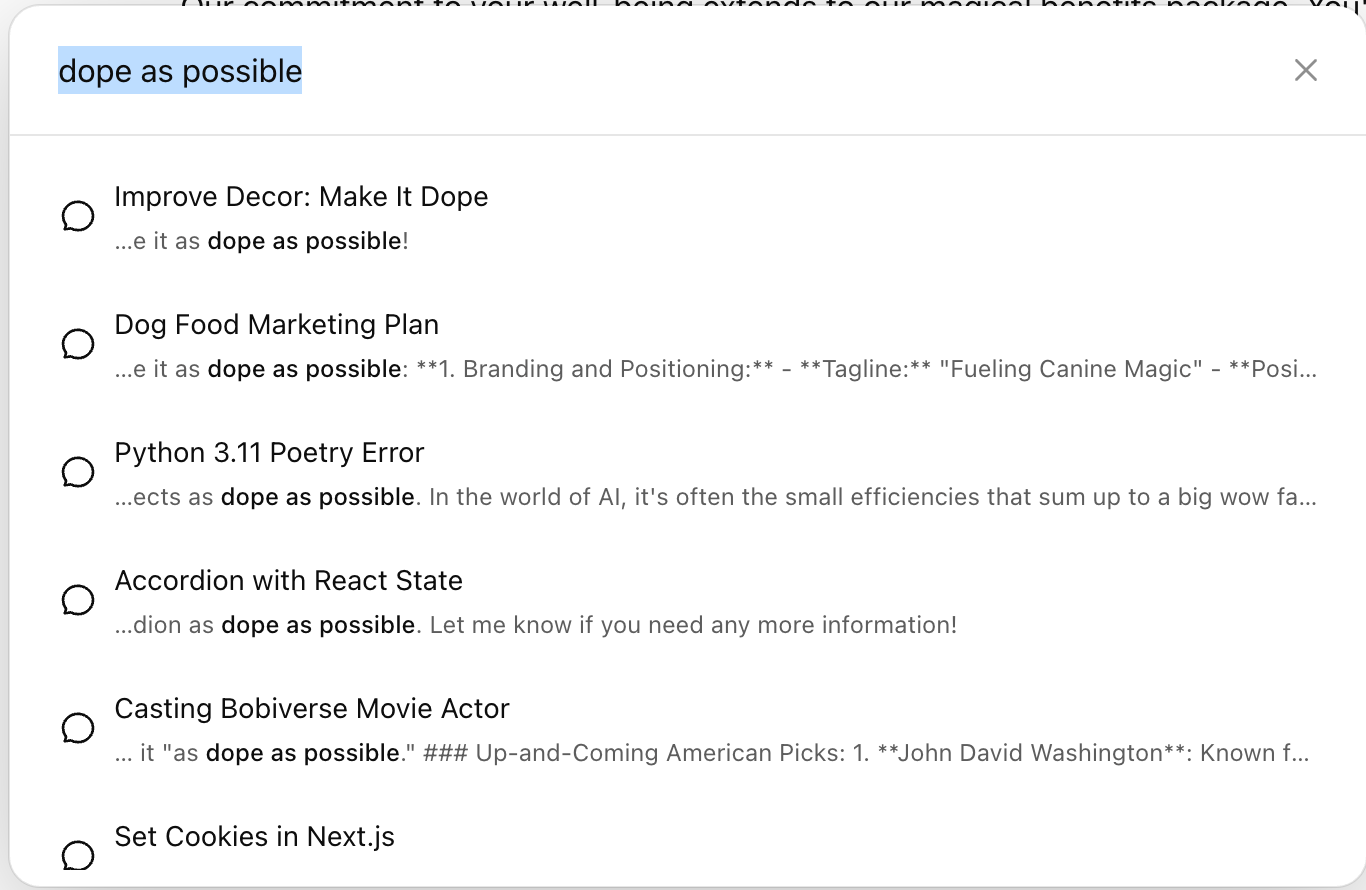

While I can’t fault it for effort, ChatGPT massively over-indexed on this quote and referenced it in basically every chat session. For example, when ChatGPT built a collapsible section on a webpage (this was pre-Codex, when we were all just copying and pasting between ChatGPT and our code editors), it claimed to have made the basic feature “as dope as possible.”

It applied this quote to cases as varied as interior decor (relevant), marketing plans (less relevant), and Python error debugging (irrelevant). Technically, it’s doing what I asked, but a human would be more judicious with how he or she applied these custom instructions.

Even a throwaway line in your context window can have a big impact on the results you get from AI. These models are trained to be extremely eager to please, and so you need to manage the context you provide them, lest they get distracted, confused, or obsessed with what’s in there, degrading your results.
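One way to picture this kind of context management is the rough sketch below. It is an illustration only, not how ChatGPT's memory or custom instructions work internally. The `Snippet` class, the topic tags, and `build_prompt` are all hypothetical names invented for this example. The idea is to tag each piece of context with the topics it applies to, and include it in a prompt only when the task shares one of those topics.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snippet:
    """One piece of curated context and the topics it applies to (hypothetical)."""
    text: str
    topics: frozenset[str]


def build_prompt(task: str, task_topics: set[str], snippets: list[Snippet]) -> str:
    """Build a prompt that includes only snippets sharing a topic with the task."""
    relevant = [s.text for s in snippets if s.topics & task_topics]
    context = "\n".join(f"- {text}" for text in relevant)
    if context:
        return f"Context:\n{context}\n\nTask: {task}"
    # No relevant context: send the task on its own rather than padding it.
    return f"Task: {task}"


# Example context: the design preference should never leak into a debugging task.
snippets = [
    Snippet("I like bold, distinctive design choices.", frozenset({"design"})),
    Snippet("I write Python with type hints.", frozenset({"code"})),
]

prompt = build_prompt("Debug this Python traceback.", {"code"}, snippets)
print(prompt)
```

In this sketch, a design preference is attached to an interior decor or web design request but left out of a Python debugging request, which is the judgment a human would apply to a quote like the one above.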

The Kanye example was obviously silly and easy to catch, but sometimes memory issues are more subtle. I turned memory back on while writing this piece and didn’t immediately notice any major issues. Then I asked ChatGPT for help with some barbecue ribs I was cooking. It came back with “Hoboken Dinner Upgrade Ideas,” recommending Trader Joe’s corn bread mix and “American-dad-core” mac and cheese. Seeing something so ham-fistedly tailored to my life (I just relocated to Hoboken) was disconcerting and mildly annoying.


Comments


Game changer.

Over the past few weeks — maybe even the last month — I’ve felt a real shift in how ChatGPT responds. Things started to feel… mushy. Blended. Like context from one part of my life was bleeding into everything else.

During that same time, my mom passed. I’m processing grief, of course. But I’m also building some very big things. I use ChatGPT constantly throughout the day, across multiple rooms and contexts. Emotional processing, strategic work, creative architecture — very different modes.

What I realized reading this (honestly, just seeing the title) was that memory was flattening those modes together. It was remembering things I didn’t want foregrounded. Positioning me in narratives I wasn’t inhabiting in that moment.

Within minutes, I turned every memory setting off.

Maybe I’ll change my mind. It’s only been 20 minutes. But the relief was immediate.

I’m now much more interested in custom instructions and room-based behavioral context. Stance matters more to me than autobiographical continuity. I’d rather define how the system behaves in a space than have it infer who I am across time.

This came at exactly the right moment. Thank you for articulating something I didn’t fully have language for yet.