1

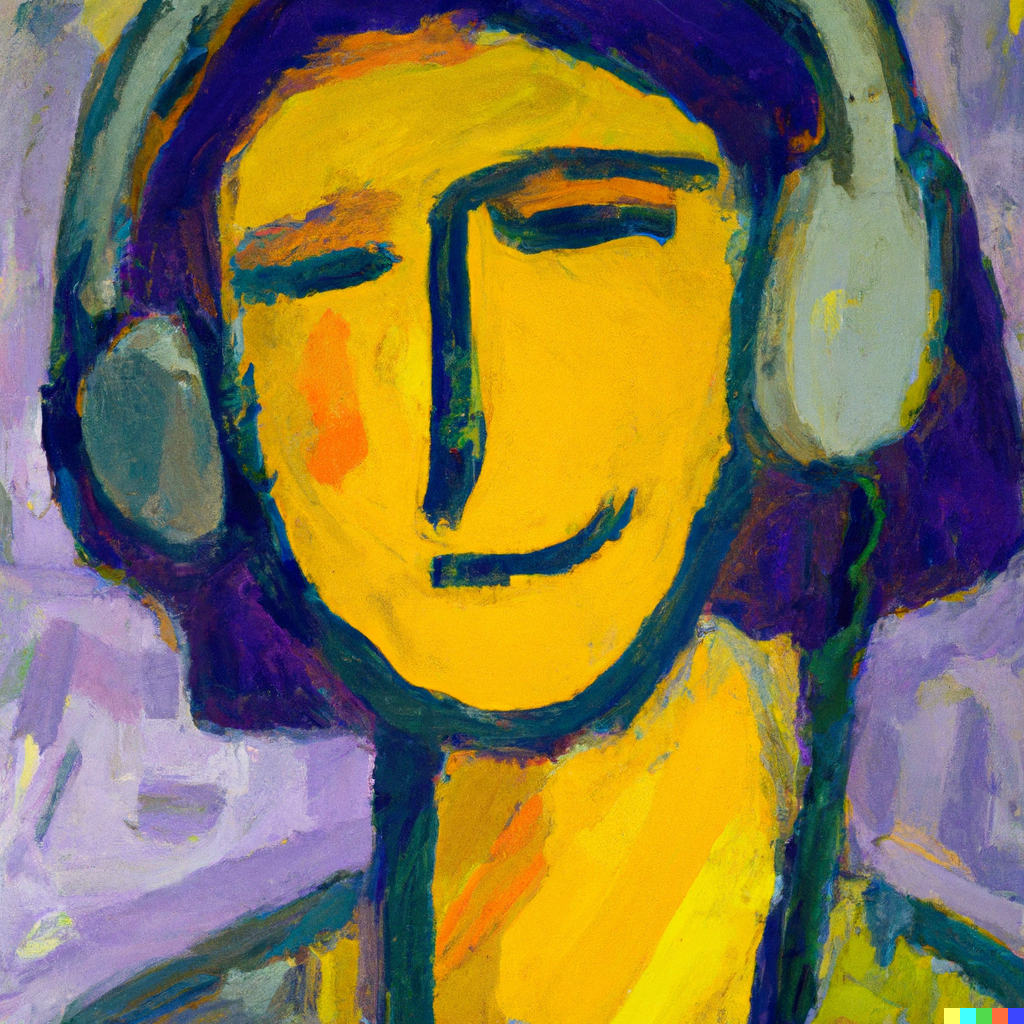

I’m writing this at a busy cafe, but I can’t hear anything except my music, thanks to the algorithms that power my noise-canceling headphones.

When I turn my attention to Twitter, it’s not so easy to tune out the noise. For each interesting tweet I see, my feed shows me roughly three vapid threads, two reposted TikToks, and maybe one semi-funny meme. OK, sometimes they’re pretty good:

But still, when I see too much content like this, I start to feel bad in a way that is hard to explain. I call it “viral malaise”—that feeling when you’ve been exposed to too much viral content and your brain feels numb and slightly sad. Perhaps you can relate.

It makes me wonder: thanks to LLMs like GPT-4, is it now possible to build tools that can effectively de-noise our information diets, the same way AirPods can silence the noise in our physical surroundings? How would they work? How would they make us feel? What first- and second-order consequences would widespread use of these tools have on culture, media, and politics?

I’m convinced AI noise filters are coming, even if social media companies are resistant at first. They will be effective, and they could have as large an impact on society as social media itself. Here’s why.

2

The spark that got me excited about this idea was, ironically, a tweet:

Courtland built this bot using GPT-4 to help him moderate discussions on Indie Hackers, a community for entrepreneurs that he runs. Apparently, the bot's output improved dramatically when he switched from smaller models to more powerful ones:

This might not seem like much, but to me it’s a big deal. Today, most algorithms that recommend or suppress content act purely on the basis of inferred popularity. They look at how much time people spend engaging with a piece of content, and boost it to more people if the numbers look good. The content itself is essentially a black box. Some algorithms try to classify content with tags like “food” or “funny meme” so that they can boost more of the type of content you’ve engaged with in the past, but they have nowhere near the semantic understanding of an LLM. If you want to change the algorithm, you have to train it all over again, which is time-consuming and expensive.
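
A minimal sketch of the popularity-based approach described above, assuming a feed whose only signals are impressions and time spent viewing. The `Post` fields, the `engagement_score` formula, and the 5-second boost threshold are hypothetical simplifications, not any platform's actual ranking. What it illustrates is that the code never reads the post itself:

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Post:
    post_id: str
    impressions: int            # how many people were shown the post
    total_dwell_seconds: float  # total time those viewers spent on it
    # Note what is missing: nothing here describes what the post says.


def engagement_score(post: Post) -> float:
    """Average seconds of attention per impression (hypothetical metric)."""
    if post.impressions == 0:
        return 0.0
    return post.total_dwell_seconds / post.impressions


def rank_feed(posts: List[Post]) -> List[Post]:
    """Sort posts so the highest-engagement ones appear first."""
    return sorted(posts, key=engagement_score, reverse=True)


def should_boost(post: Post, threshold: float = 5.0) -> bool:
    """Show the post to a wider audience if its numbers 'look good'."""
    return engagement_score(post) >= threshold


posts = [
    Post("thoughtful-essay", impressions=100, total_dwell_seconds=200.0),
    Post("viral-meme", impressions=10, total_dwell_seconds=100.0),
    Post("brand-new", impressions=0, total_dwell_seconds=0.0),
]
print([p.post_id for p in rank_feed(posts)])  # ['viral-meme', 'thoughtful-essay', 'brand-new']
print(should_boost(posts[1]))                 # True
```

A post's topic or quality never enters the score, so in this toy feed a thoughtful essay loses to a meme that holds attention longer.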

With LLM-based content filtering and sorting, the underlying model is completely agnostic about what content to promote or suppress. It is a general-purpose reasoning engine. If you want to change its behavior, you don’t need to train a new model. All you need to do is update the prompt.

The consequences of this are subtle, but incredibly important. For the first time, each user could have their own recommendation algorithm, because the “algorithm” is really just a prompt to an LLM. Even better, these prompts are written in natural language, so users can understand the prompt and update it however they want, without the need for any special training.
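
A minimal sketch of the prompt-as-algorithm idea, under stated assumptions: `LLM` is a hypothetical stand-in for whatever model call you use (GPT-4 or another), and `FILTER_TEMPLATE`, the KEEP/HIDE reply format, and `user_prompts` are invented for illustration. The filtering code and the model are shared by every user; the only per-user piece is a natural-language string. `fake_llm` is a keyword-matching toy so the example runs offline; it does not resemble how an actual LLM reasons about a post.

```python
from typing import Callable, Dict, List

# Hypothetical interface: any function that sends a prompt to a language model
# and returns its text reply. No specific provider or SDK is assumed.
LLM = Callable[[str], str]

FILTER_TEMPLATE = (
    "You are filtering a social media feed for one reader.\n"
    "The reader's instructions:\n{preferences}\n\n"
    "Post:\n{post}\n\n"
    "Reply with KEEP if the post fits the instructions, otherwise HIDE."
)


def filter_feed(posts: List[str], preferences: str, llm: LLM) -> List[str]:
    """Keep only the posts the model judges to match the reader's instructions."""
    kept = []
    for post in posts:
        prompt = FILTER_TEMPLATE.format(preferences=preferences, post=post)
        verdict = llm(prompt).strip().upper()
        if verdict.startswith("KEEP"):  # any other reply is treated as HIDE
            kept.append(post)
    return kept


def fake_llm(prompt: str) -> str:
    """Toy offline stand-in for a real model, so the sketch runs without an API.

    It only checks keywords; a real LLM would read and reason about the post.
    """
    instructions, post = prompt.split("Post:\n", 1)
    if "Hide memes" in instructions and "meme" in post.lower():
        return "HIDE"
    return "KEEP"


# Each reader's "algorithm" is a plain-English string they can read and edit.
user_prompts: Dict[str, str] = {
    "alice": "Show me thoughtful posts about AI. Hide memes and engagement bait.",
    "bob": "I enjoy memes. Show me everything.",
}

feed = ["A new paper on sparse attention", "Funny meme about Mondays"]
for user, preferences in user_prompts.items():
    print(user, filter_feed(feed, preferences, fake_llm))

# Changing behavior means editing text, not retraining a model.
user_prompts["bob"] = "Hide memes for now; I'm trying to focus."
print("bob (edited)", filter_feed(feed, user_prompts["bob"], fake_llm))
```

Because any reply other than one starting with KEEP is treated as HIDE, this sketch errs toward filtering when the model answers unexpectedly.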

Comments

Good thoughts. The founders of Instagram are working on a news site that personalizes content for each user based on their text. Recommendation engines have been around for at least a decade. What changes with LLMs is that the underlying tech becomes more precise. We are working on a similar tool, and I can tell you that, thanks to their advanced semantic understanding of the content, LLMs make training a recommendation engine much easier; that's true. Beyond that, training and curating content is nothing new.

Take Recombee, for example: clicking on a couple of articles will drastically improve the results for any user, LLM or not. All in all, apart from the input, there is nothing really new under the sun. (Just like with search…) People are excited about being able to get links from ChatGPT while we have been searching the web for 30 years now… Go figure.

Unless we learn from the mistakes of Internet 1.0 and bake in incentives and disincentives (aka inter-network/actor settlements), we'll just continue down the same path. It behooves the author and others to learn just how the "permissionless" internet/web had its economic foundations rooted in the competitive, permissioned WAN voice world.

Can't wait for my AI content filter! As to the downsides, aren't Filter Bubbles rather speculative still? https://www.researchgate.net/publication/350174545_A_critical_review_of_filter_bubbles_and_a_comparison_with_selective_exposure