The Fallacy of the 16-hour Agent

Plus: Perplexity’s rules for agent skills, the office politics of dictation, and creating a weekend AI piano coach

May 12, 2026 · Updated June 4, 2026

New data on long-horizon AI reliability just dropped, and depending on which chart you saw, you either think autonomous AI has arrived or it’s still years away. Today, we break down which version of the research to trust, plus Perplexity shares its methodology for building agent skills that don’t rot in production, Every CEO Dan Shipper turns his piano keyboard into a real-time Codex-powered music coach, and Gusto co-founder Edward Kim warns that the office of the future is going to sound more like a sales floor.—Kate Lee

Was this newsletter forwarded to you? Sign up to get it in your inbox.

Signal

The 24/7 agent is nearly upon us—or is it?

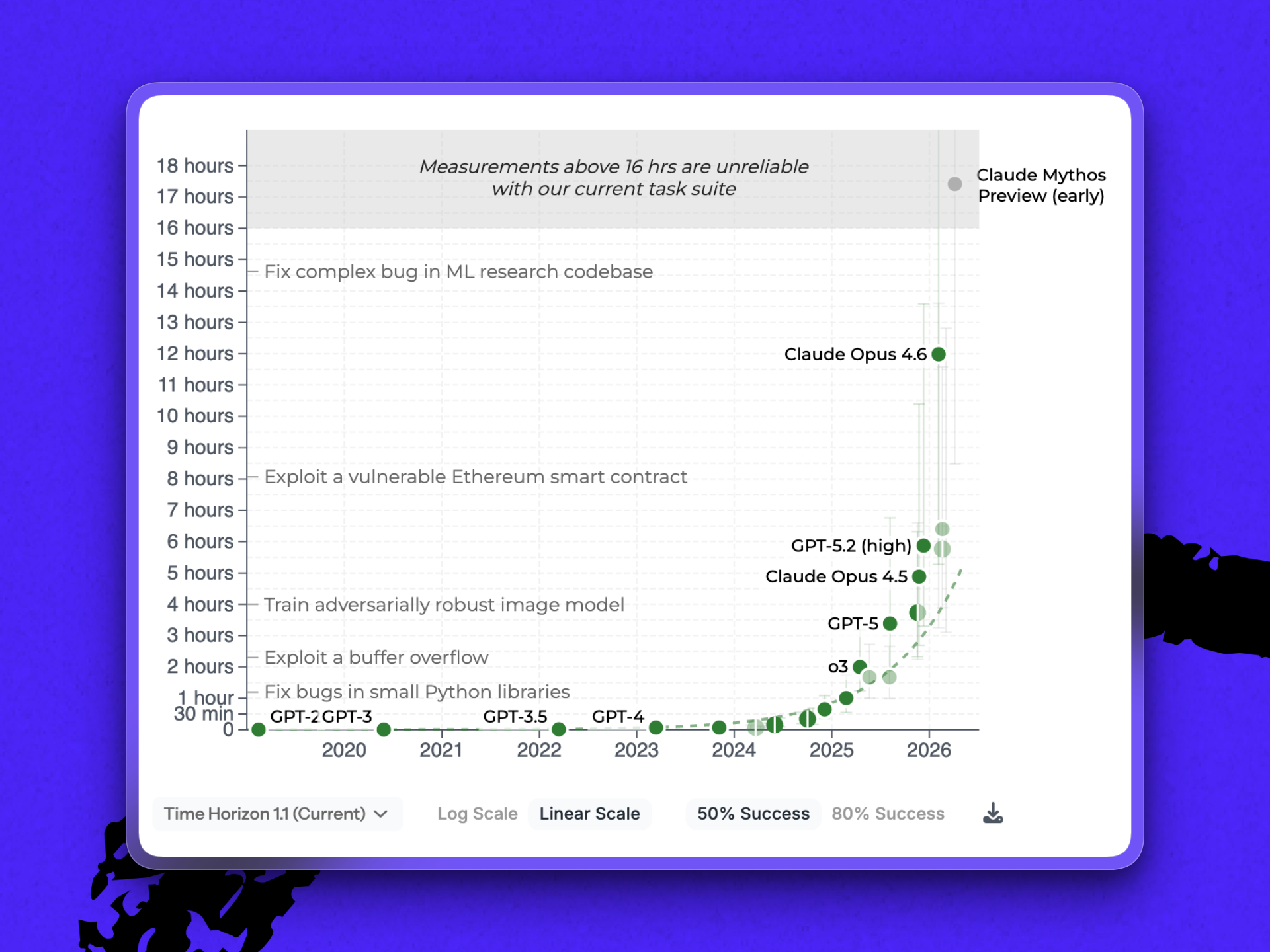

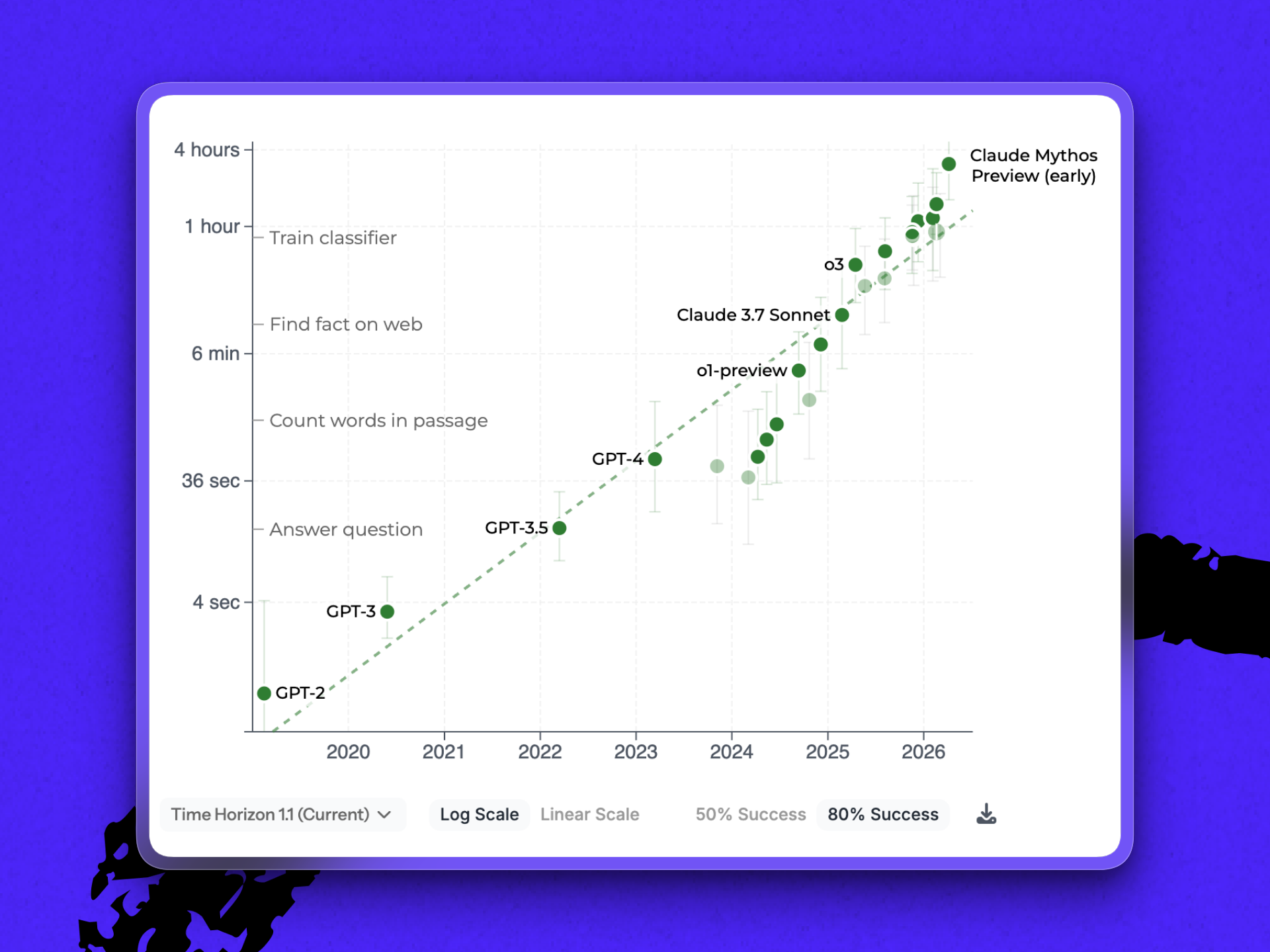

The holy grail of agentic AI has been long-horizon reliability—an agent to which you can hand a task and trust to still be on the right thread hours later, when context has decayed and there’s no human in the loop to catch a wrong turn. METR, a nonprofit that measures AI capabilities, released an update to its research showing how close we are to that autonomous future.

One chart from the update circulating online shows an early preview of Anthropic’s next model, Mythos, blowing past existing models and the 16-hour range that METR’s benchmark suite can reliably test—literally breaking the scale.

It’s important to note, however, that how many hours a task takes a human is not the same as how long a model takes to complete it. Duration, the way that METR’s benchmark uses it, stands in for difficulty. As the nonprofit writes in the report’s FAQ: “AI agents are typically several times faster than humans on tasks they complete successfully.”

That last bit—tasks completed successfully—adds another twist to the benchmark. The 16-plus hour measurement is based on a 50 percent success rate. A separate measurement of how LLMs perform at 80 percent reliability shows that Mythos can run tasks that would take humans a little over three hours. It’s a significant step up from the closest competitor measured, Gemini 3.1 Pro (METR doesn’t currently have measurements for Opus 4.7 or GPT-5.5). But it brings Mythos back down to earth.
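Why is the 80 percent figure so far below the 50 percent one? Roughly, METR’s time-horizon method fits a logistic curve to how often a model succeeds as tasks get longer (measured in human time, on a log scale). It then reads off the task length where that curve crosses a given success rate. The Python sketch below assumes that form. The intercept `A` and slope `B` are made-up values chosen to roughly echo the 16-hour and three-hour figures above. They are not METR’s fitted parameters.

```python
import math

def success_prob(hours: float, a: float, b: float) -> float:
    """Logistic model: P(success) = sigmoid(a + b * log2(hours)), with b < 0."""
    return 1.0 / (1.0 + math.exp(-(a + b * math.log2(hours))))

def time_horizon(p: float, a: float, b: float) -> float:
    """Task length (in human hours) at which the predicted success rate equals p."""
    logit_p = math.log(p / (1.0 - p))
    return 2.0 ** ((logit_p - a) / b)

# Hypothetical parameters: the 50% horizon lands at 16 hours (log2(16) = 4).
A, B = 2.4, -0.6

for p in (0.5, 0.8):
    print(f"{p:.0%} horizon: {time_horizon(p, A, B):.1f} human-hours")
# 50% horizon: 16.0 human-hours
# 80% horizon: 3.2 human-hours
```

The curve falls off gradually as tasks get longer. So demanding 80 percent reliability instead of 50 percent moves you a couple of doublings down the task-length axis. In this toy example, that is enough to shrink 16 hours to about 3.2.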

Both of these things are true: Duration can be a useful proxy for difficulty, and benchmarks don’t fully reflect reality. “[They] don’t measure model capability alone,” says Dan. “They measure model capability after a human has done the work of finding a prompt that lets the model’s capability appear.”

What to do this week:

1. Figure out your longest agent run. METR teaches us that duration might be a good approximation of difficulty. Ask: What’s the longest stretch you’ve trusted an agent on autopilot? If you don’t know, you can’t extend it.

2. Extend your agent’s runtime by giving it a goal. Last month, OpenAI shipped a new /goals command in Codex that allows agents to pursue objectives across multiple turns without checking in. Yesterday, Anthropic added a similar command to the latest version of Claude Code. Both are well suited to long-running loops with clear criteria for success, and both are very much in line with what we’ve heard from Claude’s platform team. Try it out today.

3. Audit the effectiveness of your existing loops. If you already have agents running overnight, “How long did your agent run?” is still a useful diagnostic—but ask it alongside, “With what guardrails, against what feedback signal, and at what verified accuracy?”
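You can have your tooling record the answers to these audit questions instead of relying on memory. One way is to build the logging into the goal loop itself. The Python sketch below is a generic pattern. It is not how Codex’s /goals command or Claude Code’s equivalent works under the hood. `step` and `verify` are hypothetical hooks that you would wire to your own agent and test suite. The turn and time budgets are placeholder guardrails.

```python
import time
from dataclasses import dataclass
from typing import Callable

@dataclass
class RunReport:
    turns: int
    seconds: float
    goal_met: bool     # did the independent verifier sign off?
    stop_reason: str   # "verified", "time_budget", or "max_turns"

def run_toward_goal(
    step: Callable[[int], None],   # hypothetical hook: one agent turn
    verify: Callable[[], bool],    # hypothetical hook: checks the success criteria
    max_turns: int = 50,           # guardrail: turn budget (placeholder value)
    max_seconds: float = 8 * 3600, # guardrail: wall-clock budget (placeholder value)
) -> RunReport:
    """Keep taking turns until the verifier passes or a guardrail trips."""
    start = time.monotonic()
    for turn in range(1, max_turns + 1):
        step(turn)
        elapsed = time.monotonic() - start
        # Verify before checking the clock, so a last-turn success still counts.
        if verify():
            return RunReport(turn, elapsed, True, "verified")
        if elapsed > max_seconds:
            return RunReport(turn, elapsed, False, "time_budget")
    return RunReport(max_turns, time.monotonic() - start, False, "max_turns")

def audit(reports: list[RunReport]) -> dict:
    """Report 'how long did it run?' next to 'at what verified accuracy?'"""
    return {
        "longest_run_s": max(r.seconds for r in reports),
        "verified_accuracy": sum(r.goal_met for r in reports) / len(reports),
    }

# Toy usage: the "goal" is met once three turns have happened.
done: list[int] = []
report = run_toward_goal(step=done.append, verify=lambda: len(done) >= 3)
print(report.turns, report.stop_reason)  # 3 verified
```

The point of `audit` is that a long run only counts in your favor when it is paired with the fraction of runs that an independent check actually passed.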

Steal this workflow

Build your next agent skill like Perplexity does

Creating a skill these days is relatively easy. Creating one that keeps working is not. We’ve seen skills that were running fine one day suddenly fire on the wrong request, fail to load when needed, or yield reports that weren’t as useful as they used to be. So the skill files get patched, growing longer every time the agent makes a mistake. But nobody can tell whether the latest edit helped or hurt.

Perplexity, the AI search company building agentic research and browsing tools, recently published its methodology for designing agent skills. The main lesson: Instead of starting with the skill, start with the tests. Highlights from the post:

- Write the evals first. Pull five to 10 cases from production queries, known failures, and edge cases. Include negative examples—queries that should not invoke this skill.

- Phrase triggers like a human would. Start with, “Load when…” and use the language your users use. Perplexity’s example: Instead of “monitors pull requests,” try “babysit a PR,” “watch CI,” or “make sure this lands.” This way, the skill loads without your team having to use a specific command or technical phrase.

- Write the body in principles, not procedures. The model already knows commands; it needs direction on how to apply them. Instead of listing detailed steps to, say, check out a new code branch, then cherry-pick files to edit, then check for conflicts, and so on, Perplexity recommends instructions like, “Cherry-pick the commit onto a clean branch. Resolve conflicts preserving intent.”

- Codify failures into lessons. When the agent fails in production, write the failure mode to the skill file. The mistake becomes a standing instruction that guards against future mistakes.

- Edit instructions rigorously. Ask with every line you add: “Would the agent get this wrong without this?” If not, cut it. Every extra line adds context cost.
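One way to apply the “would the agent get this wrong without this?” test mechanically is line ablation. Drop one line of the skill at a time, rerun your evals, and flag any line whose removal doesn’t lower the score. This is our illustration, not a step from Perplexity’s post. `run_evals` stands in for whatever scores the skill against your test cases. The toy scorer below is hypothetical and only checks whether each eval’s key phrase appears in the skill text.

```python
from typing import Callable

def ablate_lines(skill_text: str, run_evals: Callable[[str], float]) -> list[str]:
    """Return lines whose removal does not lower the eval score (candidates to cut)."""
    lines = [ln for ln in skill_text.splitlines() if ln.strip()]
    baseline = run_evals("\n".join(lines))
    candidates = []
    for i, line in enumerate(lines):
        without = "\n".join(lines[:i] + lines[i + 1:])
        if run_evals(without) >= baseline:
            candidates.append(line)
    return candidates

# Hypothetical toy scorer: an eval "passes" if its key phrase is in the skill.
EVAL_KEYWORDS = ["babysit a PR", "watch CI"]

def toy_run_evals(skill: str) -> float:
    return sum(k in skill for k in EVAL_KEYWORDS) / len(EVAL_KEYWORDS)

skill = """Load when the user says "babysit a PR" or "make sure this lands".
Load when the user asks you to watch CI.
Always be helpful."""

print(ablate_lines(skill, toy_run_evals))  # ['Always be helpful.']
```

With a real model in the loop, eval scores are noisy. Averaging a few runs per variant before cutting a line would be a sensible precaution.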

Try it this week: Pick one skill your team wants to improve. Write 10 test cases—five it should handle, five it should refuse or route elsewhere. Run the current skill against them. The gap is your backlog.
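In practice, this exercise is a small trigger eval. In the Python sketch below, `should_load` is a hypothetical stand-in for asking your agent whether it would load the skill for a given query. Here it is a naive phrase match, so the file runs as-is. The ten queries are invented examples in the spirit of Perplexity’s “babysit a PR” skill, not cases from its post.

```python
from typing import Callable

# (query, should the skill load?) -- five it should handle, five it should not.
CASES = [
    ("babysit this PR until it merges", True),
    ("can you watch CI for me", True),
    ("make sure this lands before Friday", True),
    ("keep an eye on the checks for my PR", True),
    ("ping me if the build goes red", True),
    ("summarize this PR description", False),
    ("write unit tests for parse_date", False),
    ("what is continuous integration?", False),
    ("review the diff for style issues", False),
    ("draft release notes for v2.1", False),
]

def should_load(query: str) -> bool:
    """Hypothetical stand-in for the agent's routing decision."""
    triggers = ("babysit", "watch ci", "make sure this lands", "checks")
    q = query.lower()
    return any(t in q for t in triggers)

def run_trigger_evals(router: Callable[[str], bool], cases: list[tuple[str, bool]]) -> list[str]:
    """Return one backlog entry for every case the router gets wrong."""
    backlog = []
    for query, expected in cases:
        if router(query) != expected:
            kind = "missed trigger" if expected else "false trigger"
            backlog.append(f"{kind}: {query!r}")
    return backlog

for item in run_trigger_evals(should_load, CASES):
    print(item)
# missed trigger: 'ping me if the build goes red'
```

Each printed line is one item for the backlog. In this toy run, the skill misses a paraphrase that users would plausibly say.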

Discuss

“The office of the future will sound more like a sales floor.”—Edward Kim, cofounder of Gusto, in the Wall Street Journal

A Wall Street Journal article this week about AI dictation tools entering the workplace treats verbal prompting and composition as a manners problem—an angle that shows that the more things change, the more they stay the same.

Every new work interface eventually creates etiquette. Email created reply-all politics. Slack created notification politics. Voice AI is about to create room-tone politics: when you can talk to your computer, how loudly, and around whom. Great news for nosy office neighbors, but for the rest of us, it’s one more reason to curse the invention of open floor plans.

Inside Every

This week, Thinking Machines Lab and OpenAI both announced bets on the same future: AI that watches and responds in real time, instead of waiting for its turn. OpenAI shipped its Realtime-2 voice models; Thinking Machines previewed an interaction model that watches video and audio simultaneously.

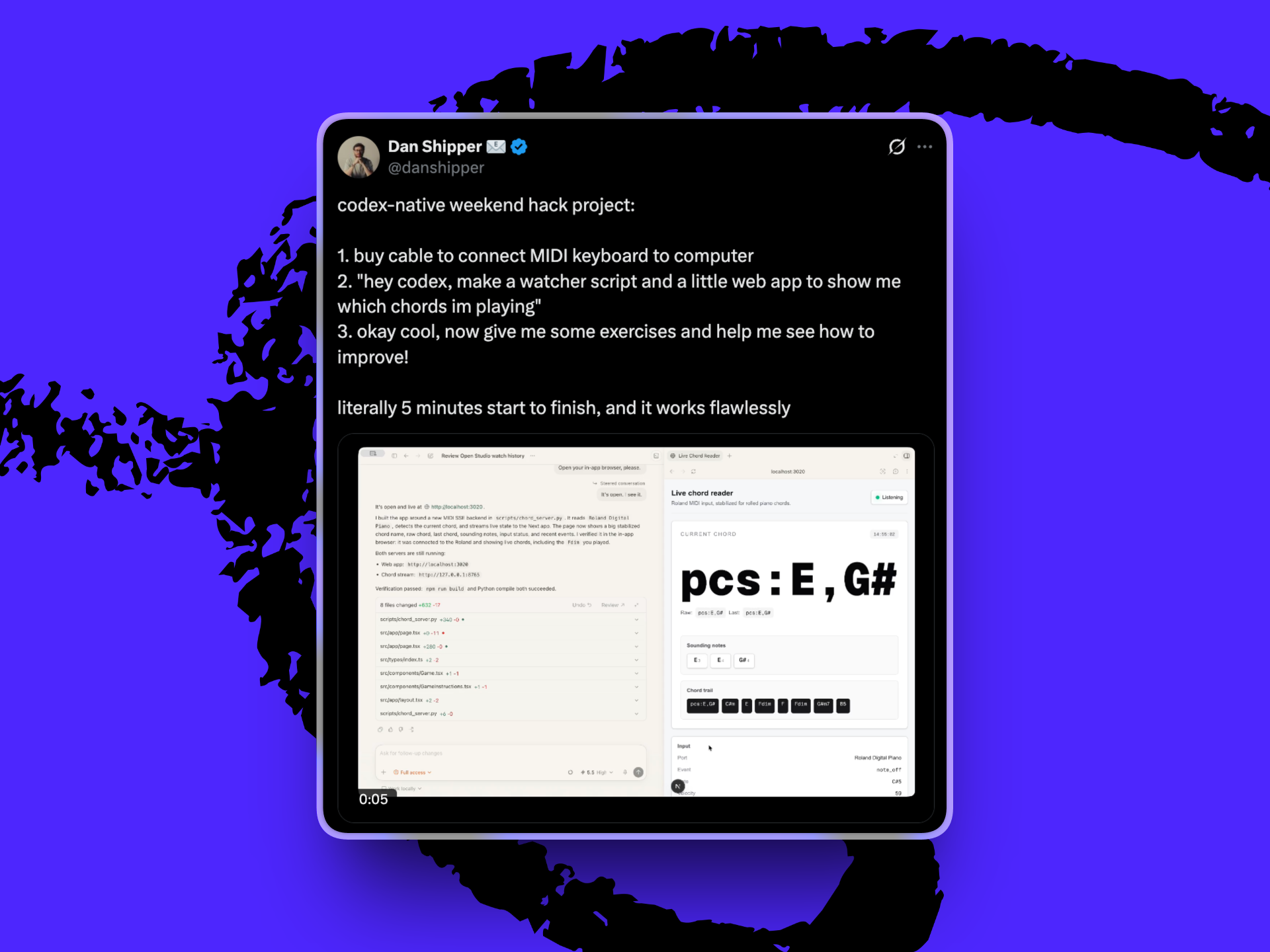

While we’re all waiting to see how the labs’ visions roll out, Dan used Codex to jerry-rig his own version.

On Saturday, he plugged his MIDI keyboard—a keyboard that translates notes into data a computer can read—into his laptop, opened Codex, and asked it to build a piano app that would identify the chord he played—then keep watching and coach him as he practiced. The pattern generalizes to any live medium: writing in a document, drawing on a tablet, deadlifting in front of a phone. This is also the promise of hardware like Meta’s AR/VR glasses or Apple’s Vision Pro: AI that sees what you’re doing and responds in a way that’s useful.
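Once the notes arrive as numbers, identifying the chord is a small lookup. A MIDI note number maps to a pitch (60 is middle C). Reducing the held notes to pitch classes (note number mod 12) lets you match them against chord templates. The Python sketch below is our illustration, not what Codex generated for Dan. It covers only a few chord types. In a live app, you would feed it the notes currently held down, read from the keyboard with a MIDI library such as `mido`.

```python
from typing import Optional

NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

# Chord templates: intervals in semitones above the root.
CHORD_TYPES = {
    "": {0, 4, 7},         # major triad
    "m": {0, 3, 7},        # minor triad
    "dim": {0, 3, 6},      # diminished triad
    "aug": {0, 4, 8},      # augmented triad
    "7": {0, 4, 7, 10},    # dominant seventh
    "maj7": {0, 4, 7, 11}, # major seventh
    "m7": {0, 3, 7, 10},   # minor seventh
}

def identify_chord(midi_notes: list[int]) -> Optional[str]:
    """Name the chord formed by the held MIDI notes, or None if no template matches."""
    pitch_classes = {n % 12 for n in midi_notes}
    # Try each held pitch class as the root, so inversions are still recognized.
    for root in sorted(pitch_classes):
        intervals = {(pc - root) % 12 for pc in pitch_classes}
        for suffix, template in CHORD_TYPES.items():
            if intervals == template:
                return NOTE_NAMES[root] + suffix
    return None

print(identify_chord([60, 64, 67]))      # C
print(identify_chord([57, 60, 64]))      # Am
print(identify_chord([64, 67, 72]))      # C (first inversion still reads as C)
print(identify_chord([55, 59, 62, 65]))  # G7
```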

Here’s how you can do it too:

- Find the input pipe. MIDI for instruments. Screen capture for writing or design. Camera plus a vision model for drawing or movement. Microphone for languages.

- Have the agent build the watcher. Ask Codex (or Claude Code) to write the app based on how you like to be coached. (For example, tell it to only provide one piece of feedback at a time, or to focus on one aspect of your technique and ignore another.)

- Tune the feedback as you go. First responses will be generic (“good chord progression”). Tell the watcher what’s useful and what’s not—“flag wrong notes only,” “ignore dynamics,” “let me finish a phrase before cutting in.”
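Most of the tuning comes down to two knobs: which kinds of feedback the watcher may give, and when it may speak. The Python sketch below shows that layer in isolation. It is not Dan’s app. It assumes that upstream code (for example, a chord or note checker) already labels each played note with an optional issue. The issue labels, the 1.5-second pause that counts as the end of a phrase, and the `Coach` class itself are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class NoteEvent:
    time: float                  # seconds since practice started
    issue: Optional[str] = None  # hypothetical labels, e.g. "wrong_note", "dynamics"
    detail: str = ""

class Coach:
    def __init__(self, allowed_issues: set[str], phrase_gap: float = 1.5):
        self.allowed_issues = allowed_issues  # "flag wrong notes only" -> {"wrong_note"}
        self.phrase_gap = phrase_gap          # seconds of silence that end a phrase
        self.pending: list[NoteEvent] = []
        self.last_note: Optional[float] = None

    def observe(self, event: NoteEvent) -> None:
        """Record a played note; keep it only if its issue is one the player cares about."""
        self.last_note = event.time
        if event.issue in self.allowed_issues:
            self.pending.append(event)

    def tick(self, now: float) -> Optional[str]:
        """Call periodically. Speaks at most once per phrase, and only after a pause."""
        if self.last_note is None or now - self.last_note < self.phrase_gap:
            return None  # nothing played yet, or still mid-phrase: stay quiet
        comment = self.pending[0].detail if self.pending else None
        self.pending.clear()  # one piece of feedback, then drop the rest
        return comment

coach = Coach(allowed_issues={"wrong_note"})
coach.observe(NoteEvent(0.0))
coach.observe(NoteEvent(0.4, "dynamics", "a bit loud"))          # ignored by the rule
coach.observe(NoteEvent(0.8, "wrong_note", "expected E, heard Eb"))
coach.observe(NoteEvent(1.2, "wrong_note", "expected G, heard G#"))
print(coach.tick(1.5))  # None (the phrase is still going)
print(coach.tick(3.0))  # expected E, heard Eb
print(coach.tick(4.0))  # None (already commented on this phrase)
```

Picking the first flagged issue is the simplest way to enforce “one piece of feedback at a time.” Ranking issues by severity would be a natural next tweak to ask your coding agent for.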

Try it this week: Pick a skill you want to get better at. Open the medium where you practice. Spend an evening with your coding agent building the smallest watcher you can—input in, feedback out. Next thing you know, you’ll have a tutor you can summon on demand.

Katie Parrott is a staff writer at Every. You can read more of her work in her newsletter.

To read more essays like this, subscribe to Every, and follow us on X at @every and on LinkedIn.

We build AI tools for readers like you. Write brilliantly with Spiral. Organize files automatically with Sparkle. Deliver yourself from email with Cora. Dictate effortlessly with Monologue. Collaborate with agents on documents with Proof.

Help us scale the only subscription you need to stay at the edge of AI. Explore open roles at Every.
