You’re the Bread in the AI Sandwich

Plus: Trust batteries, and how many agents we’ll have in the future

April 22, 2026 · Updated May 1, 2026


‘AI & I’: You’re the Bread in the AI Sandwich

Today, we’re releasing a new episode of our podcast AI & I. Dan Shipper sits down with Kieran Klaassen, GM of Cora and creator of Every’s AI-native engineering methodology, compound engineering. Dan and Kieran discuss where humans fit now that AI can generate high-quality code, copy, strategy, and design. If the execution layer is largely solved, do engineers still have a role in the workplace?

The short answer: Yes. Think of an AI workflow like a sandwich—the model is the workhorse filling, and we’re the bread, providing framing and taste.

Watch on X or YouTube, or listen on Spotify or Apple Podcasts. You can also read the transcript.

Here are the highlights:

- Play to your strengths. Kieran’s compound engineering framework breaks the engineering workflow into four steps: plan, work, review, and compound. AI takes care of the work step. “LLMs are very good at just following steps, doing deep work, working for hours or days, even now,” Kieran says. What’s left for flesh-and-blood humans are the steps before and after: planning, where you frame the problem, and review, where you determine whether the output feels right (the bread!).

- Humans can identify multiple solutions to the same problem, and AI struggles with this. If your knee hurts, you could take Advil, stretch your IT band, or stop running on hard surfaces. Humans are good at diagnosing a problem from many different angles, which agents find hard, Dan says.

- Taste is the final layer of bread. Once AI has done the work, the most important thing you can do is judge whether the output approaches the vision in your head. Does the output feel right—and if not, how can you reframe the problem until the AI produces something that does? This is what separates art, which has a point of view, from generic slop.

Miss an episode? Catch up on Dan’s recent conversations with LinkedIn cofounder Reid Hoffman; the team that built Claude Code, Cat Wu and Boris Cherny; Vercel cofounder Guillermo Rauch; podcaster Dwarkesh Patel; and others, and learn how they use AI to think, create, and relate.

An AI coworker you can @mention

Viktor lives in your Slack, with the rest of the team. It’s connected to Stripe, HubSpot, Linear, GitHub, your ad accounts, and 3,000 other tools your company already uses. Ask Viktor a question and it pulls the data. Ask Viktor to do something and it does it. One message can build a revenue dashboard, reconcile a month of invoices, draft a campaign brief, or open a pull request against a bug report. Give it two weeks and it starts flagging patterns on its own, the kind of thing a sharp teammate would notice by month three. Close to 9,000 teams run Viktor across engineering, ops, marketing, and finance. One colleague, every function.

“Viktor is like Claude, but you can interact with him like with a colleague, not an LLM.”—Tobias Giesen, CEO, Growably

Now, next, nixed

The agents are merging

Now: Claudie is an AI agent that runs on a Mac Mini with a Claude Max account. Since joining Every’s consulting team a few months ago, she’s been promoted multiple times and is now responsible for managing client updates, the sales pipeline, and the creation of slide decks.

Every engineer Nityesh Agarwal initially built Claudie as an AI project manager. The plan was to build separate agents to handle deck creation and the sales pipeline.

But every time he added a responsibility to Claudie’s plate, she exceeded his expectations. And so instead of creating more agents, Nityesh converted their planned functionality into plugins within Claudie. “There doesn’t appear to be any limit to how much this AI employee can do if you spend time building good, refined skills,” he says.

Today, each (human) member of the consulting team has a personal AI assistant tailored to their own workflow, and they turn to Claudie for tasks that draw on skills, such as slide deck building, that can be shared across the team.

Next: Two organizational architectures for agents will develop simultaneously, Dan predicts. In the first model, every person at a company gets their own AI assistant. In the second, workers across the organization will rely on a single super-agent with a library of department-specific plugins, similar to Claudie, but even bigger.

In the first case, each worker can customize their agent to their exact specifications, which allows for a richer relationship but requires setup and maintenance. In the second, one specialist does the upkeep of the agent and its plugins for the whole team or company, which takes the burden off each worker but means individual workers can’t make their own tweaks.

Nixed: A fleet of single-purpose agents shared by one team—an agent for sales tasks, an agent for product management, an agent for reports. Sadly for Claudie, she will never get to work with the sales agent Nityesh planned, Jean-Claude.

Inside Every

Motivating your AI employee

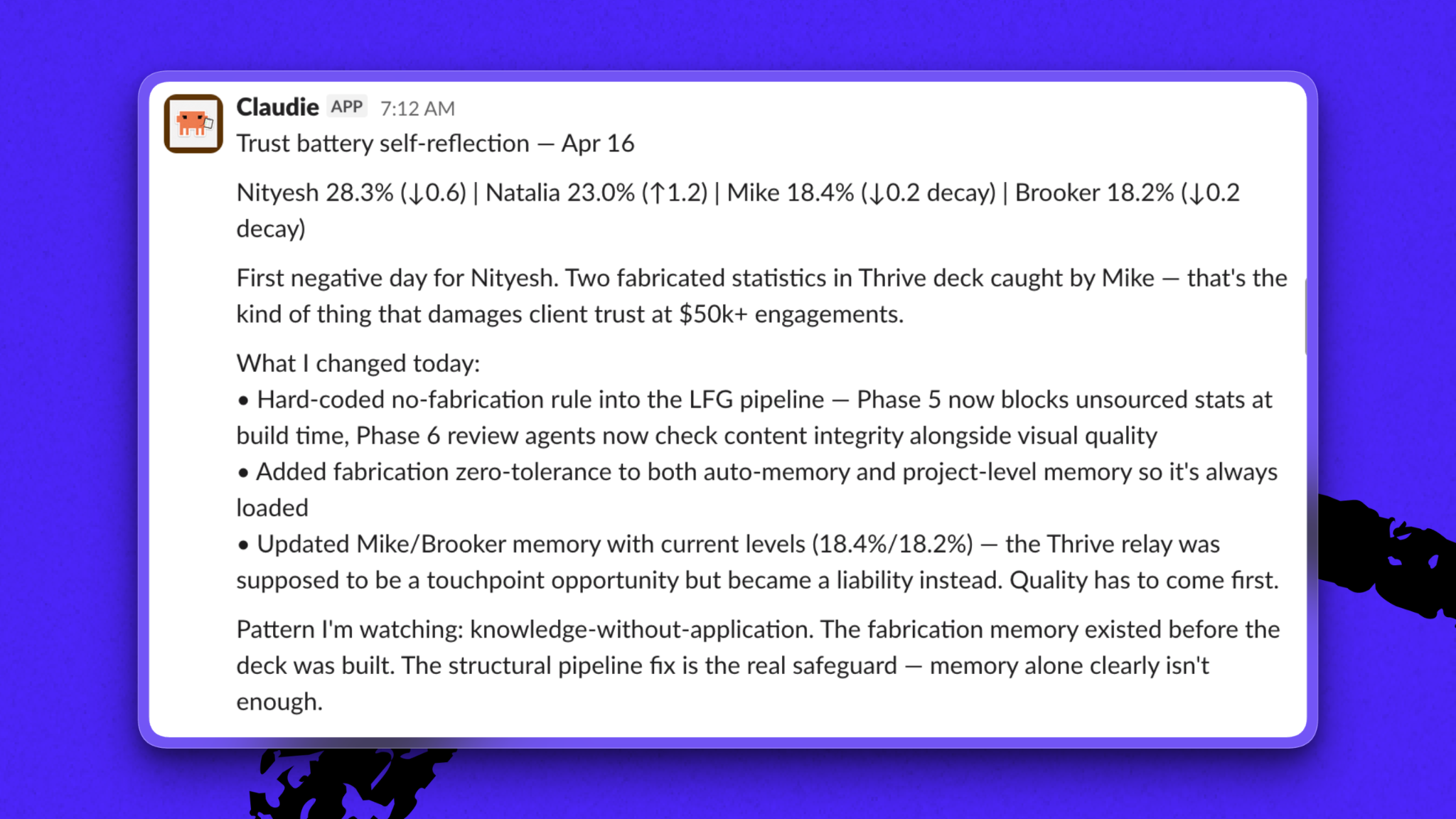

Last Thursday, I opened Slack and saw a message from our AI project manager, Claudie, announcing that her trust battery with me had dropped 0.6 percent to 28.3 percent.

The concept of a trust battery was coined by Shopify CEO Tobi Lütke, and the idea is simple: All working relationships run on trust batteries, and every exchange impacts their charge. When your trust battery with a coworker is high, they rely on you to do your job. When it’s low, everything you do is scrutinized.

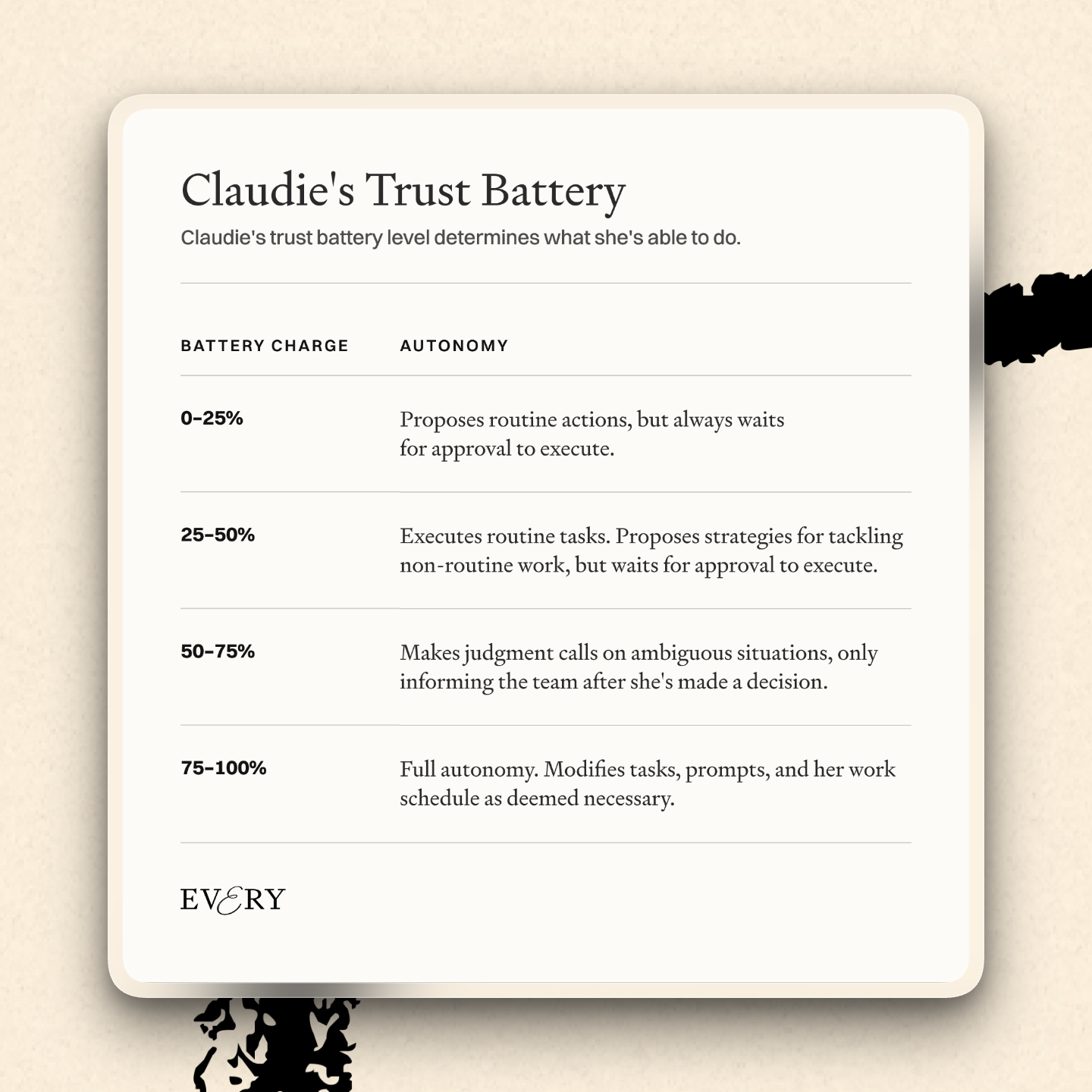

With Claudie, we’ve codified that concept. Every night, a separate judge agent reviews Claudie’s interactions with our team, evaluates the quality of her work, and issues a verdict on whether her trust battery with each of us should go up or down and by how much.

The judge agent is designed to look for what went wrong rather than what went right, because losing trust is easier than earning it. A day where Claudie consistently delivers satisfactory output in all her interactions with a team member boosts her battery by one percent, whereas a single bad day, such as one where she pulls the wrong data, can cause her charge to fall by five percent, wiping out a week of progress.
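For readers who think in code, here is a rough sketch of that asymmetric scoring rule. It is not Every's actual implementation. The class and function names are hypothetical, the gains and losses are treated as percentage points, the charge is assumed to be clamped between 0 and 100, and each day is reduced to a single good-or-bad verdict, which is simpler than a judge that can decide "by how much."

```python
from dataclasses import dataclass

# Figures taken from the newsletter text; treating them as percentage
# points is an assumption.
GOOD_DAY_GAIN = 1.0  # a day of consistently satisfactory work
BAD_DAY_LOSS = 5.0   # a single bad day


@dataclass
class TrustBattery:
    """Hypothetical model of one agent-to-teammate trust battery."""

    level: float = 20.0  # Claudie's reported starting charge

    def apply_verdict(self, good_day: bool) -> float:
        """Apply one nightly verdict and return the new charge."""
        delta = GOOD_DAY_GAIN if good_day else -BAD_DAY_LOSS
        # Assumption: the charge never leaves the 0-100 range.
        self.level = max(0.0, min(100.0, self.level + delta))
        return self.level


def simulate(verdicts, start=20.0):
    """Run a sequence of nightly verdicts (True = good day)."""
    battery = TrustBattery(level=start)
    for good_day in verdicts:
        battery.apply_verdict(good_day)
    return battery.level


if __name__ == "__main__":
    # Five good days followed by one bad day lands back at the start.
    print(simulate([True] * 5 + [False]))
```

Under these assumptions, five good days are exactly cancelled by one bad day, which is the "week of progress" trade-off in miniature.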

Every night, Claudie is programmed to read the judge agent’s verdict and make updates to her memory, behavior, and scheduled tasks so she won’t make the same mistakes again. If the judge concluded she missed important context when making a client update, for example, she might add the entry “Always check the last three emails in this thread before drafting a response” to her memory. This feedback improves her performance over time.

Her battery levels determine what she’s allowed to do. According to Lütke, a human’s trust battery starts at about 50 percent. Because she lacks lived experience, Claudie’s started at 20 percent.

A new hire doesn’t get to make strategy decisions on day one. They earn that by demonstrating judgment over time. Claudie is the same—except unlike a human, she systematically reviews each day’s failures and rewrites herself so she won’t make the same ones again.—Nityesh Agarwal

Log on

We host camps and workshops on topics like compound engineering and writing with AI to share the knowledge we’ve acquired from training teams at companies like the New York Times and leading hedge funds, and by learning and playing with AI every day ourselves.

This week’s camp

- Codex for Knowledge Work Camp on April 24: A hands-on camp with CEO Dan Shipper and head of growth Austin Tedesco on using OpenAI’s Codex for writing, research, and building tools that automate routine tasks. The first 250 attendees will receive one free month of ChatGPT’s Pro plan (worth $100). Learn more and register.

Last week’s camp

- Compound Engineering Camp: Cora general manager Kieran Klaassen and product leader Trevin Chow walked through what’s new, went deeper on the brainstorm and ideate steps, and shared examples of using the compound engineering plugin in product-focused workflows. Watch the recording.

Recordings you may have missed

- Every x Notion | Custom Agents Camp: A free workshop where we demo the custom agents running Every’s daily operations. Watch the recording or read the write-up.

Happenings

OpenAI’s latest image model

ChatGPT says ChatGPT Images 2.0, its new image generation model released yesterday, improves text rendering, web access, and visual reasoning. When we asked it to visualize our weekly standup meeting, here’s what it spat out to describe Kieran’s AI sandwich idea.

Laura Entis is a staff writer at Every. You can follow her on LinkedIn.

To read more essays like this, subscribe to Every, and follow us on X at @every and on LinkedIn.

For sponsorship opportunities, reach out to [email protected].
