“What will the internet look like when it is populated to a greater extent by soulless material devoid of any real purpose or appeal?” wrote Atlantic senior technology editor Damon Beres this week.

Beres’s concern is that media corporations less scrupulous than his own are beginning to pollute the internet with cheap, machine-synthesized content. He argues we’re headed towards a dystopian future that looks an awful lot like the dead-internet conspiracy theory, which posits that most of the people and content we see in our feeds are fakes—created by an evil AI to manipulate us into buying stuff we don’t need and consuming information that isn’t true so that advertisers can reach us.

On what fact pattern is Beres basing his projections? His article names two recent developments.

The first is BuzzFeed’s announcement that it will use AI to create personalized quizzes (e.g., “Answer 7 Simple Questions and AI Will Write a Song About Your Ideal Soulmate”).

The second is CNET’s experiment using AI to generate SEO-optimized explainer articles, such as “What is an Annual Percentage Yield?,” some of which apparently contained significant inaccuracies and plagiarism.

One writer employed by CNET’s parent company, Red Ventures, wrote an anonymous op-ed that reveals just how deep anxiety over AI-generated text runs:

I wonder about what the future will be like for my children. I wonder if they’ll have the same dreams of being a writer like I did when I was young. I wonder if that job will even be there when they grow up. Twenty years from now, will they cut their teeth on freelancing, learning and developing their style and getting their beat? Or will it all be dried up? Will the door be closed forever, the ladder pulled up behind us, the last writers, our words used to feed the ever-starving algorithm?

Later in the op-ed, the anonymous writer predicts the same dystopian future that Beres did:

Google’s going to be clogged with AI-generated content of dubious accuracy. Will it turn into an endless prism of echoes, as the algorithm scrapes articles from other algorithm-generated articles, over and over again? Will the cultural vernacular be changed when the majority of content we read is filled with the syntax and semantics of a robot?

Is this the endgame for AI-generated content? Has the last writer already been born? Will future generations be forced to wander aimlessly in a junkyard of regurgitated content, desperately searching for morsels of meaning?

Or will the future play out differently? Is there reason for hope?

I believe so. Here’s why.

AI-generated text will be easy to detect

People want to know the source of the information they read. Therefore, watermarking tech* will become a standard feature of LLMs provided by large AI companies that want to keep their reputations intact. Only those on the fringes will attempt to evade this—either as an LLM creator or user. Even the CNETs of the world have realized transparency is in their best interest.

* Side note: the way the watermarking tech works is incredibly cool. LLMs generate one word (technically one “token,” which can be part or all of a word) at a time. Before the algorithm decides what the next token should be, it hashes the previous token (runs it through a fixed mathematical function) to deterministically generate a “greenlist” of allowed next tokens and a “redlist” of tokens it should not say next. For instance, after the word “for” it might be allowed to say “instance” but not “example.” A detector that knows the scheme can recompute the lists and count how many tokens in a text landed on the greenlist. Ordinary human writing hits the greenlist only about as often as chance would predict, so the more red-listed tokens a text contains, the less likely it is to be AI-generated. You can remove the watermark by intentionally replacing green-listed words with red-listed alternatives, but because each list depends on the word that came before, this is complex and requires substantial revision to the original text.
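To make the side note concrete, here is a toy Python sketch of a greenlist/redlist watermark, roughly the “hard red list” variant described in the 2023 paper “A Watermark for Large Language Models” by Kirchenbauer et al. It is an illustration under stated assumptions, not how any particular AI company implements watermarking: the 1,000-word vocabulary, the 50% greenlist size (`GAMMA`), the SHA-256 seeding, and the names `greenlist`, `generate_watermarked`, and `z_score` are all hypothetical choices, and the “model” just picks random green tokens instead of predicting likely ones. Detection recomputes each greenlist from the previous token and measures how far the green count sits above what chance would give.

```python
import hashlib
import math
import random

# Toy vocabulary standing in for an LLM's token list (hypothetical).
VOCAB = [f"w{i}" for i in range(1000)]
GAMMA = 0.5  # fraction of the vocabulary placed on each greenlist (assumed value)


def greenlist(prev_token: str) -> set[str]:
    """Deterministically split the vocabulary based on the previous token."""
    # Hash the previous token into a seed so anyone who knows the scheme
    # can regenerate exactly the same green/red split later.
    seed = int.from_bytes(hashlib.sha256(prev_token.encode()).digest()[:8], "big")
    shuffled = VOCAB[:]
    random.Random(seed).shuffle(shuffled)
    return set(shuffled[: int(GAMMA * len(VOCAB))])


def generate_watermarked(n_tokens: int, rng: random.Random) -> list[str]:
    """Toy 'model' that picks random tokens, but only from the greenlist."""
    tokens = ["<start>"]
    for _ in range(n_tokens):
        allowed = sorted(greenlist(tokens[-1]))  # sorted so choices are reproducible
        tokens.append(rng.choice(allowed))
    return tokens[1:]


def z_score(tokens: list[str]) -> float:
    """How many standard deviations the green count sits above chance."""
    prev, green = "<start>", 0
    for tok in tokens:
        if tok in greenlist(prev):
            green += 1
        prev = tok
    t = len(tokens)
    return (green - GAMMA * t) / math.sqrt(t * GAMMA * (1 - GAMMA))


if __name__ == "__main__":
    rng = random.Random(42)
    ai_text = generate_watermarked(200, rng)
    human_text = [rng.choice(VOCAB) for _ in range(200)]  # ignores the lists entirely
    print(f"watermarked z = {z_score(ai_text):.1f}")  # every token is green: 14.1
    print(f"unwatermarked z = {z_score(human_text):.1f}")  # typically close to 0
```

Real schemes generally use a soft bias toward greenlisted tokens rather than an outright ban, so the model can still pick a red token when it is clearly the best word. Running the detector on a lightly edited copy of `ai_text` shows the point about removal: each swapped token changes only its own status and the list for the token after it, so most of the green signal survives small edits.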

So what about the marginal players that try to evade detection? I doubt they’ll be able to keep up with the much bigger players that will invest in AI-content detection classifiers, like the one OpenAI released just yesterday. These models aren’t good enough yet, but over time it seems likely that they will be able to tell with reasonable accuracy whether a text was AI-generated, even when there is no watermark.

Today we live in the Wild West of AI, but once you can discern AI-generated text 80–90% of the time, anyone who wants to avoid it will be able to do so with a browser extension. I wouldn’t be surprised if Apple builds something like this into its OS. It’ll be like digital privacy: if you care, you can opt out by adopting a few simple tools like ad blockers and VPNs.

The question is: will people care to intentionally evade AI-generated content? Will they have to?

I don’t think so.

AI-generated junk content will fail

I don’t know why anyone thinks it’s possible to “clog” the internet with junk. That’s just… not how it works 😅

What you can do is upload content to a website. As much as you want. And by default it doesn’t affect anyone or anything else. Posting millions of junk articles to the internet is like printing them all out in your house: nobody will notice. For junk content to affect anyone, it has to show up somewhere they already go.

So where do people go to find text content? Primarily Google. The business model behind CNET and its peers is to publish articles that are designed to show up first when you search for something like “What does Annual Percentage Yield mean?”

What Beres and the anonymous CNET writer fail to take into account is that AI-generated content completely changes the economics of SEO in a way that is unsustainable and self-cannibalizing. If there used to be 10 articles competing for the top spot on a long-tail search term and now there are 10,000, then where previously 100% of the articles showed up on the first page of results, now only the top 0.1% do. If the 7,258th article is crappy, it doesn’t affect anyone! (Except the company that wasted money creating it.)
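Spelled out, assuming the first page of results holds 10 organic links (an assumption; real result pages vary) and writing $N$ for the number of articles competing for the same search term:

```latex
\text{share of articles on page one} = \frac{10}{N}, \qquad
N = 10:\ \frac{10}{10} = 100\%, \qquad
N = 10{,}000:\ \frac{10}{10{,}000} = 0.1\%
```

In the second case, 9,990 of the 10,000 articles never reach page one at all.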

What about the AI-generated articles that do manage to make it to the top of search results? Presumably they are at least decent. The main reason they’d show up there is that humans chose to link to them or to other pages on the website they live on. Google has every incentive to improve the quality of these results, and you can bet they have teams internally working on down-ranking junky, inaccurate content (machine-generated or otherwise).
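The link-based intuition here is, in heavily simplified form, the classic PageRank idea from Google’s early days. Google’s current ranking uses many more signals, so treat this as an illustration rather than a description of how Search works today. The Python sketch below uses a made-up toy web (every page name is hypothetical) to show why piling up 10,000 pages that nobody links to does not lift any of them above a page that people actually link to.

```python
# Minimal PageRank by power iteration: a simplified illustration of
# link-based ranking, not Google's actual current algorithm.


def pagerank(links: dict[str, list[str]], damping: float = 0.85,
             iterations: int = 50) -> dict[str, float]:
    """Return a score per page; every link target must also be a key in `links`."""
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}
    for _ in range(iterations):
        # Pages with no outgoing links ("dangling") spread their score evenly.
        dangling = sum(rank[p] for p in pages if not links[p])
        new_rank = {p: (1 - damping) / n + damping * dangling / n for p in pages}
        # Every other page splits its score among the pages it links to.
        for page, targets in links.items():
            for target in targets:
                new_rank[target] += damping * rank[page] / len(targets)
        rank = new_rank
    return rank


# Hypothetical toy web: a few human-run sites link to one human-written
# explainer, while 10,000 AI-generated junk pages receive no links at all.
web = {
    "blog_a": ["explainer"],
    "blog_b": ["explainer", "blog_a"],
    "forum": ["explainer"],
    "explainer": [],
}
for i in range(10_000):
    web[f"junk_{i}"] = []

scores = pagerank(web)
top_two = sorted(scores, key=scores.get, reverse=True)[:2]
print(top_two)  # ['explainer', 'blog_a']
```

Every junk page ends up with the same minimal score as any other unlinked page, no matter how many of them exist; volume alone earns no links.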

Many people feel like Google is getting worse, but that’s primarily because Google shows so many ads now, and the pages we click on are loaded with ads and filled with mediocre human-generated content.

Which leads us to the next issue that gives me hope for the future…

Mediocre SEO content is dying anyway, and that’s a good thing

This type of content was never about The Craft of Writing™ or Providing Readers With Quality Information™—it was always an arbitrage for media magnates to pay freelance writers a low hourly rate to produce a dozen articles a day and hopefully serve enough pop-ups, banner ads, and affiliate links to turn a profit. Not all content that ranks highly in Google is of this type, but a lot of the content that is moving to machine-generation is.

But isn’t it an obviously losing game in the long run to publish the output of an LLM on a website and try to rank in Google when people will eventually figure out that they can chat with the LLM directly? You get more concise answers and no ads, and you can even ask follow-up questions!

I don’t think there’s anything wrong with people consulting an AI like ChatGPT instead of searching Google and navigating through a list of links. The links were never that trustworthy or high quality anyway, and I suspect ChatGPT won’t keep hallucinating incorrect information the way it does today for much longer. To be clear, ChatGPT and its ilk are not good enough yet. They may never be. But I’d bet money, purely on a hunch, that one day soon they will be. I don’t think they’ll be able to beat human writers who publish personal, thoughtful, voice-y writing. But they will be able to answer basic questions. The impact will be good for the world.

Information seekers will get a much better and richer understanding of the world in way less time, because looking things up will feel more like chatting with an expert than wandering through a library (the latter is fun, but the former is much faster). Information providers, instead of churning out SEO content, will be forced to pursue a direct relationship with readers. They will cultivate trust and earn permission to deliver content directly, rather than needing to show up in Google to gain readers. This is a better arrangement for everyone.

Markets won’t solve everything, but they will solve for junk AI content

The mental model for projecting disastrous consequences of AI-generated content is that of the “market failure.” Media companies like CNET are compared to the agriculture companies that dump chemicals into the land and get to reap the benefits of increased yields (and therefore profits) but don’t have to bear any of the larger environmental costs, which get passed on to future generations.

The pollution scenario I just described is a classic market failure mode: a negative externality. It happens when a transaction benefits both the buyer and the seller but harms a third party, so neither of them has an incentive to forgo it.

So what is the negative externality with AI-generated content? I don’t think there is one. If a company publishes AI-written content that is “soulless material devoid of any real purpose or appeal,” the harm falls on the reader, not on an uninvolved third party as in the pollution example. That means the reader will probably stop trusting that source and go somewhere else for content. Eventually the lost trust will damage the media company’s reputation and revenue enough that it has to fix the problem or stop using AI, or else keep losing readers.

What if some people prefer the AI-generated content, even though some other people may regard it as soulless or devoid of appeal? You’d think “to each his own” would be a good enough response, but I suspect that goes against the whole point of Beres’s article in the Atlantic: to signal disapproval. Literary commentary is—and always has been—full of debate over what is “quality” and what is “trash.” Saying something that’s popular is bad is an easy way to gain status and attention.

But aside from a bad reading experience, are there any other possible ways that AI-generated content could represent a market failure?

Some might argue that AI-generated content creates a negative externality if the AI plagiarizes other writers. In that case the plagiarized writers are harmed, but the media company and its readers may still benefit and therefore, in theory at least, have no incentive to stop transacting. But I think most readers do feel harmed when they realize an article they read contained plagiarism: they feel lied to. You said you wrote this, I used its quality to decide whether to keep reading your work, but you didn’t actually write it. So although plagiarism is obviously bad, it might not be an externality. This is borne out in practice: almost universally, media companies, including CNET, react quickly when they realize they have published something plagiarized. That’s not what happens in genuine negative externality scenarios, where it usually takes a lot of public pressure, and even regulation, before the offending companies change their behavior.

The idea of plagiarism as lying does make me wonder: if I read an article generated by an AI that a writer tried to pass off as their own, should that count as plagiarism? Or, perhaps, “aigiarism”?

If you publish AI-generated content, are you a liar?

It seems like the answer should be a simple “yes” if you try to pass off AI-generated content as your own. But all writers use various forms of aid. What needs to be disclosed, and what doesn’t? Is using a ghostwriter a form of plagiarism? Ghostwriters may be willing participants, but it feels weird if the audience doesn’t know. What about editors? They often have a huge impact on a piece of writing, as I can personally attest. What about Grammarly? Spellcheck?

Every week, the words you read by me in this newsletter have gone through an editorial review process. It’s not just straight from my head to your eyes. Sometimes my pieces even contain good lines that were suggested by colleagues. (They always contain 60% fewer adverbs 😅) Do you feel misled?

I would feel weird if I knew a writer tried to pass off an AI-generated article as their own—but I like it when writers get help from editors and use tools like Grammarly to improve their writing. As for ghostwriters, I don’t think anyone feels betrayed when they learn that Prince Harry didn’t write his memoir; it’s understood that writing a book is a specialized skill that most public figures don’t have the time or incentive to learn. It’s hard to predict which norms and mores will emerge around the use of AI-generated text.

The future of writing

I’m sure my eight-month-old daughter is going to get to experience all the joys and pains of writing when she grows up. If she wants to pursue it professionally, I’m confident she’ll be able to do so. The job will change over time as the tools evolve, and economics will nudge writers in particular directions, but I have no doubt that people will always want to read things written by humans.

A good analogy is music. In the seventies and eighties, computers started to be able to synthesize musical sounds like drums and strings, and eventually those sounds became indistinguishable from ones played by human musicians.

Perhaps this has resulted in fewer session musicians, but they’re not entirely gone, and it’s undeniable that more people create music now than ever, using an artful combination of organic and synthesized sounds. The industry has changed, but the art is alive and well.

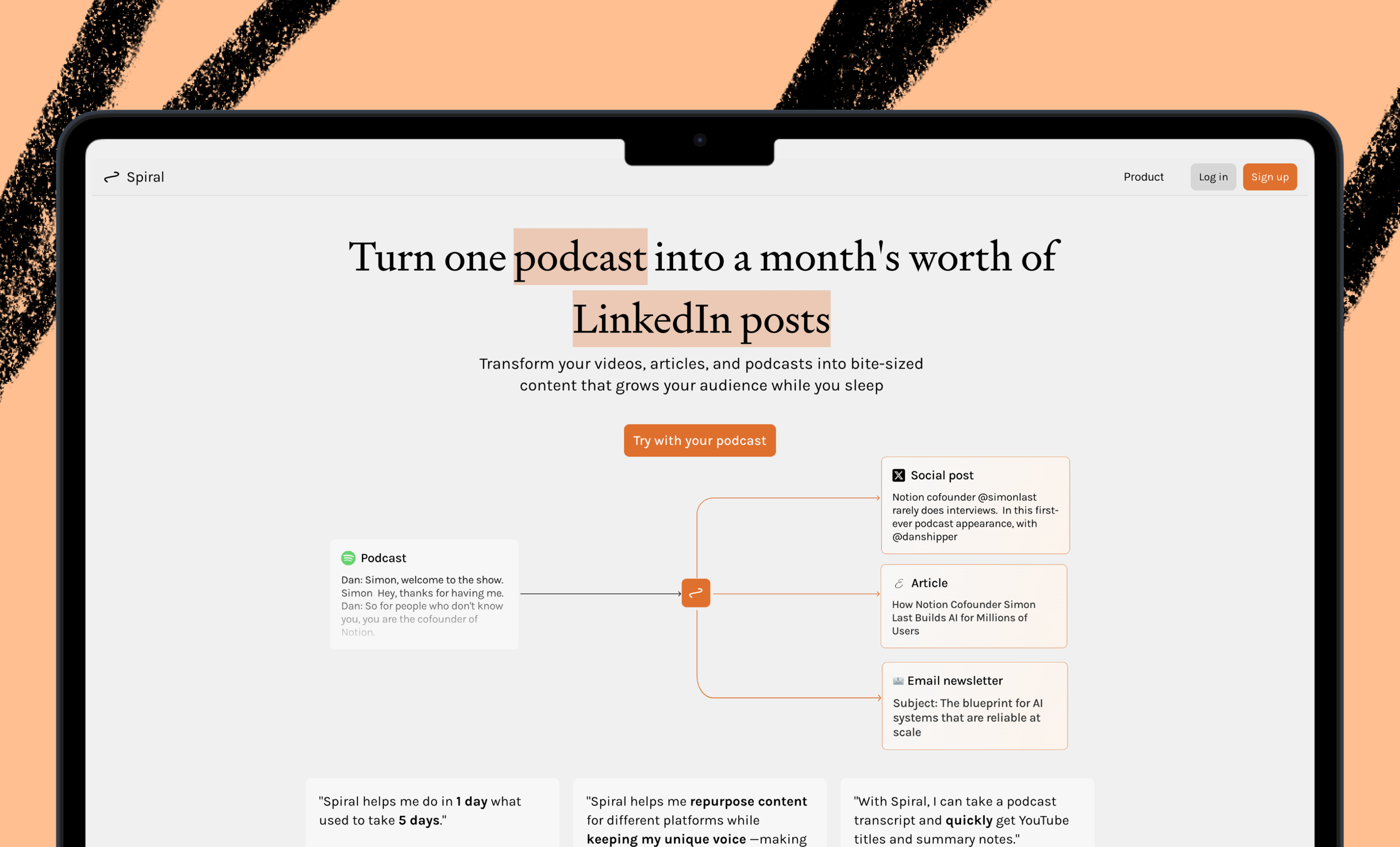

I think something similar can happen with writing. That’s why I am working on Lex: to help everyone become a better writer.

Comments

As you mentioned, 99% of SEO content is garbage anyway, and would probably be better off if it was written by AI. This is going to put more pressure on search engines to find "the good stuff," at the least. Priority #1 is becoming much more strict about what content gets indexed, and transparent so creators can know how to fix issues. And for quality writers, AI will help them create even better content like you used DALLE to make the cover image.

@samuelszuchan exactly!