It’s pretty rare for a company to transition from a research lab to a developer infrastructure provider to a behemoth consumer app in just a few years. But given the launch of ChatGPT plugins last week, there’s a fair chance this could end up being the story of OpenAI.

Plugins allow ChatGPT to browse the web and interact with services like Kayak and Instacart to perform tasks for users beyond just generating text. The news marks OpenAI’s definitive step out of the land of research and into a vastly ambitious and uncertain new world, competing to earn its place as perhaps the newest tech giant, alongside Google, Microsoft, Apple, and Facebook.

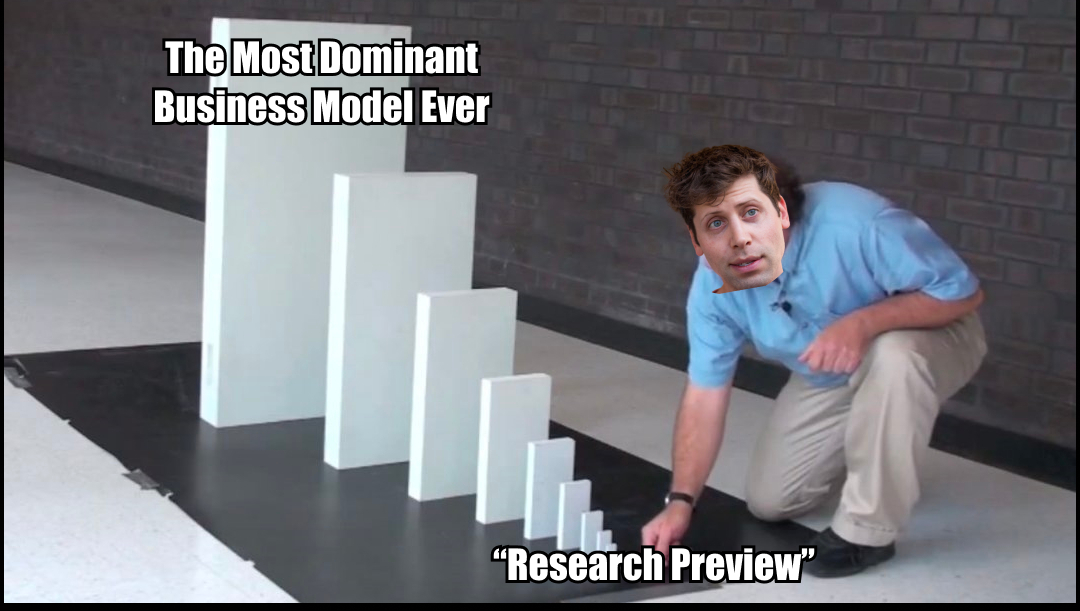

Packy McCormick, never one to shy away from a bold optimistic claim, expressed his prediction for how this will turn out with the following meme:

Even if you’re not as bullish as Packy, it’s clear this is an important moment. My hunch is that soon we will all be using ChatGPT with plugins—or something like it—almost every day, for the foreseeable future. I don’t know who will be the dominant market player, or how the value will get captured, but the product/market fit of this emerging category of AI chat product is very real. It is on the same level of importance as the PC, the web browser, the search engine, and the smartphone. Seriously.

Last week, on the day that ChatGPT plugins were announced, I was at an AI conference hosted by Sequoia in San Francisco where Sam Altman was speaking. The announcement tweet dropped at 10 a.m., and you could practically see the news ripple through the attendees. Did you hear? Have you tried it? Wow, you had the beta? Damn…is it good?

Also, keep in mind that these were not impressionable or easily excited rubes! Many of the smartest investors and CEOs in AI were at this event, and they instantly understood the significance of this announcement.

It’s now possible, perhaps even plausible, that OpenAI considers itself primarily a consumer business. It may have started as a research lab and in recent years evolved into an AI infrastructure provider, but this may not be its final form. Over at Stratechery, Ben Thompson even went so far as to predict that OpenAI should and eventually may shut off all API access to developers, calling it a waste of resources and a distraction.

So—what’s going on? There are so many questions:

- What are ChatGPT plugins?

- Why are they so important?

- Is OpenAI going to become the next tech giant? Spoiler: I’m excited about the product, but slightly less bullish on the business than most.

- Should developers using OpenAI’s APIs be concerned?

It’s obviously a rapidly evolving situation, but I am going to do the best I can to answer all these questions and more. I’ve structured this week’s post as a sort of skimmable FAQ, because I want to cover all the basics even though I know a lot of you already know them. Feel free to skip to the parts that are most interesting to you!

(Maybe next time I’ll just write this essay as an input to a chatbot that you can talk to. 😅)

What are ChatGPT plugins?

In a nutshell, they let ChatGPT perform actions besides just generating text, and they allow ChatGPT to access external information that is not included in its training data.

In the past, developers were able to use OpenAI’s APIs in their own products. Now OpenAI is using developers’ APIs in its product.

So far, OpenAI has built three first-party plugins:

- Web browser: Capable of searching the web, clicking links, and finding current information.

- Code interpreter: Can run Python code and read the output in a sandboxed environment.

- Retrieval: This one is a little different from the first two, in that it's more of a template that others can use to build their own plugins, rather than a finished plugin of its own. But basically it lets you upload a bunch of text and allows ChatGPT to use that text to answer questions. If you've ever seen a project like "chat with a book" or "chat with a newsletter," this is basically a way to do that inside ChatGPT.
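To make the Retrieval idea more concrete, here is a minimal sketch of the general "chat with a document" pattern, assuming plain Python and no external services: split the uploaded text into chunks, score each chunk against the question, and paste the best matches into the prompt the model sees. OpenAI's actual retrieval plugin relies on embeddings stored in a vector database. This sketch swaps in simple word-count cosine similarity so it runs on its own, and the function names (`chunk`, `retrieve`, `build_prompt`) are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def chunk(document: str, size: int = 50) -> list[str]:
    """Split an uploaded document into chunks of at most `size` words."""
    words = document.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors (0.0 if either is empty)."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(chunks: list[str], question: str, k: int = 2) -> list[str]:
    """Return the k chunks most similar to the question.

    Assumption: word-count similarity stands in for the embedding search
    a production retrieval system would use.
    """
    q = Counter(tokenize(question))
    ranked = sorted(chunks, key=lambda c: cosine(q, Counter(tokenize(c))), reverse=True)
    return ranked[:k]


def build_prompt(chunks: list[str], question: str, k: int = 2) -> str:
    """Stuff the best-matching chunks into a prompt for the chat model."""
    context = "\n\n".join(retrieve(chunks, question, k))
    return f"Answer using only this context:\n\n{context}\n\nQuestion: {question}"


# Hypothetical "uploaded" text, already split into chunks.
docs = [
    "ChatGPT plugins let the model call external APIs such as Kayak and Instacart.",
    "The code interpreter runs Python in a sandboxed environment.",
    "The web browser plugin can search the web and click links.",
]
print(retrieve(docs, "Which plugin runs Python code?", k=1))
```

The overall flow (chunk, retrieve, prompt) is what matters here; a real system would mainly replace `cosine` over word counts with similarity between embedding vectors.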

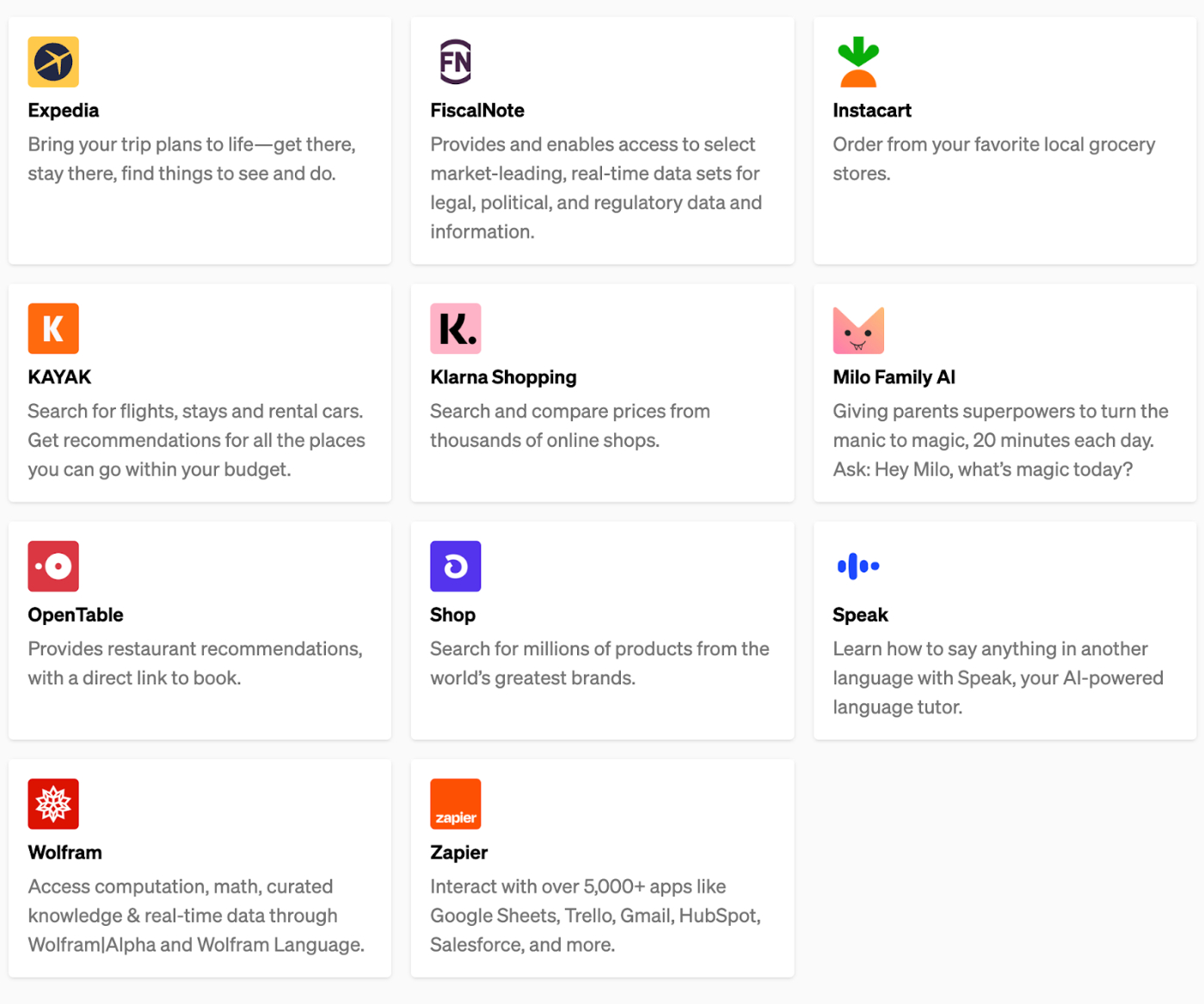

In addition to these three first-party plugins, OpenAI has partnered with 11 companies to build third-party plugins for ChatGPT. It seems like it wanted to demonstrate a broad variety of use cases. Here’s a screenshot from the announcement page with descriptions for each:
