With new and improved LLMs coming out almost constantly, it’s tempting to always reach for the latest iteration, the one that promises unprecedented performance—with a commensurate price tag. But in exhaustive testing of 12 leading AI models, Michael Taylor found a counterintuitive truth: When it comes to mimicking human behavior, you can't buy authenticity. For anyone building products, testing ideas, or conducting audience-based research with AI, this data-driven exploration will help you pick the model that will get more done while keeping costs down.—Kate Lee

Was this newsletter forwarded to you? Sign up to get it in your inbox.

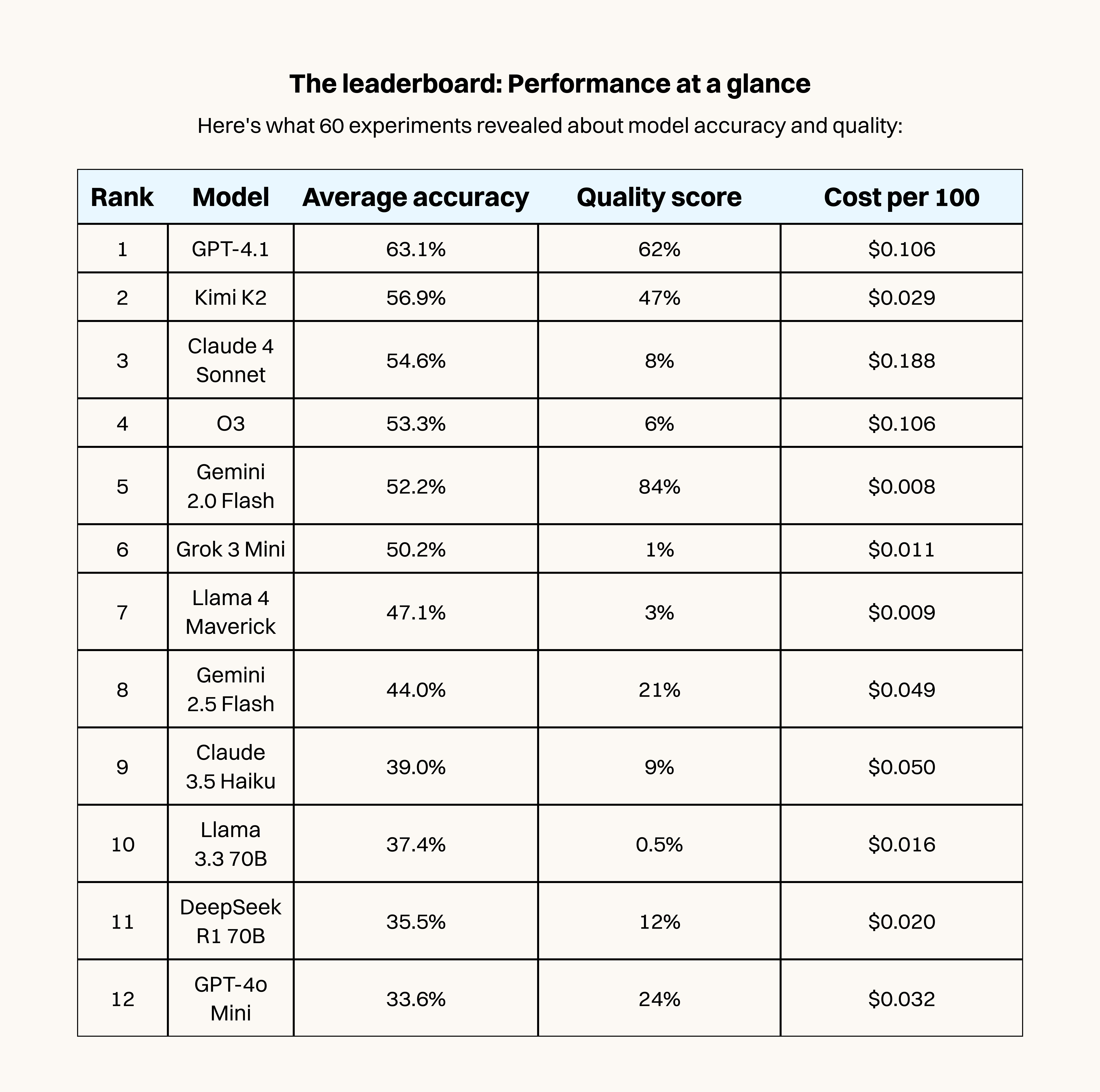

After running 60 experiments testing how well AI models can roleplay as real people, I discovered you can’t buy your way to quality. The model that sounds most believably human costs just $0.008 per 100 responses, while the most expensive option delivers robotic, overthought answers at 23 times the price.

At Ask Rally, we build audience simulators that use AI personas to model likely human behavior. As an alternative to traditional focus groups and surveys, you could get 80 percent of the insights at 1 percent of the cost. For early-stage work––from drafting a social media post to planning a product launch––it helps you gauge whether your ideas will resonate with a target audience before committing significant time or budget.

However, AI personas are only useful to the extent that the responses they give match up with what people would say if you ran a real-world study.

So I took five market research studies that had been done using groups of people and ran them using AI personas, then compared the results. I ran the same test across 12 leading models. The goal was simple—find out which LLM actually understands humans best, at an affordable price.

There were several surprises.

High accuracy does not equal quality

The first thing I learned was that there’s a difference between being accurate—making the same choices as humans did in real-world studies—and sounding human. Accuracy is probably going to be your biggest consideration in most cases. But I also wanted to capture how models were giving their answers, because AI personas have to sound like real humans to be believable. To do that, I used another LLM I had previously trained on a database of AI and human responses to recognize the difference. Then I told the LLM to read the AI personas’ outputs and tell me how human they sounded. I call this metric “quality.”

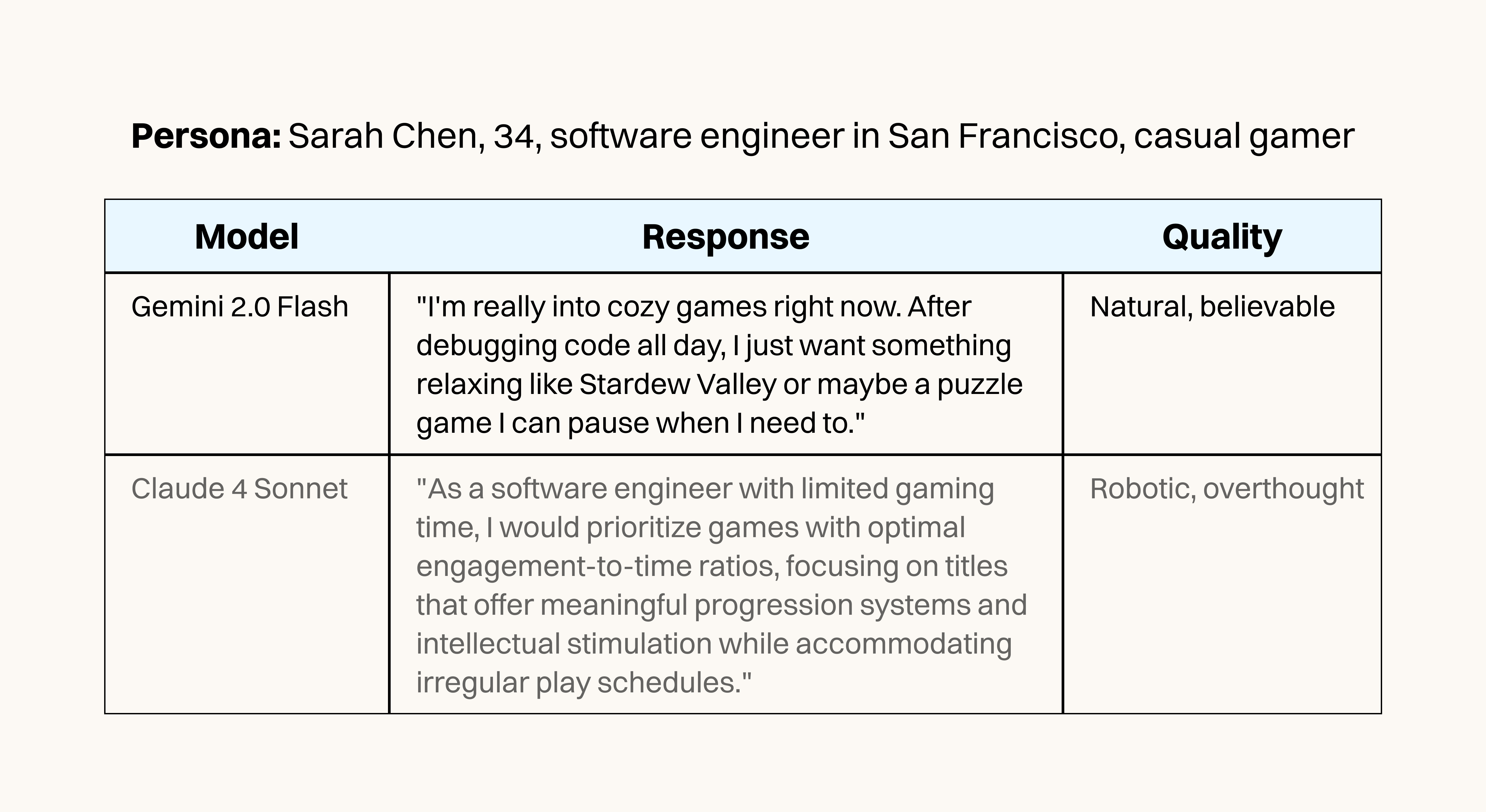

To give this some grounding, let’s look at one study in our test, in which we tried to replicate gamers’ preferences for various kinds of new products. We created an AI audience with the same characteristics as the human audience who participated in the study: U.S.-based mobile games players ages 25 to 54 (mostly 35-44), a 66:33 male-to-female ratio, interested in a wide selection of game categories. Given the same questions as appeared in the study, did the AI personas vote for the same answers as their human counterparts, and did their answers sound believably human? Here’s what one persona’s response looked like in two of the models we tested.

We ran the gamer preferences study and four others from a variety of domains, including politics, purchasing decisions, and B2B lead generation, using the same accuracy and quality metrics tested across each model.

In our study, Gemini 2.0 Flash achieved an 84 percent quality score despite 52.2 percent voting accuracy, but was incredibly cheap at just $0.08 per hundred responses. Meanwhile, Claude 4 Sonnet cost 23 times more ($0.188 per hundred) and did better on accuracy (54.6 percent), but scored just 8 percent on quality.

The Only Subscription

You Need to

Stay at the

Edge of AI

The essential toolkit for those shaping the future

"This might be the best value you

can get from an AI subscription."

- Jay S.

Join 100,000+ leaders, builders, and innovators

Email address

Already have an account? Sign in

What is included in a subscription?

Daily insights from AI pioneers + early access to powerful AI tools

Comments

Don't have an account? Sign up!

Great study! I'm really curious about the prompting methodology you used across the different models. Did you optimise the prompts for each model individually? I wonder if some of these quality differences might be down to which models respond better to your particular prompting style rather than them being inherently more human-like. I'm struggling myself to get decent, simple human-sounding answers!