Whenever a client wants to skirt the edges of copyright law, copyright lawyers usually respond: "Do you really want to find out?" That is, do you actually want to learn how much you'd owe in damages for copyright infringement?

I heard this a lot from lawyers when I ran a niche indie publisher. I suspect it's a refrain that AI companies are going to have to get used to as well. Many AI image generators, such as Midjourney, Stability AI’s Stable Diffusion, and DeviantArt’s DreamUp, were trained on copyrighted images.

In early 2023, the bill came due. Two parties, a group of artists (Andersen et al.) and Getty Images, separately sued AI image-generator companies for copyright infringement. The lawsuits sparked discussion and debate among artists, engineers, VCs, AI companies, and the general public. I was surprised by how few people understood the nuances of copyright law.

I thought now would be a good time to write an explainer piece for the tech audience on the legal protections afforded to creative works. I’m not a lawyer, so don’t take anything here as legal opinion or advice. My knowledge is based on my personal experience, research, and consultations with experts.

Copyright: A (very) brief history, from pre-Gutenberg to post-Google

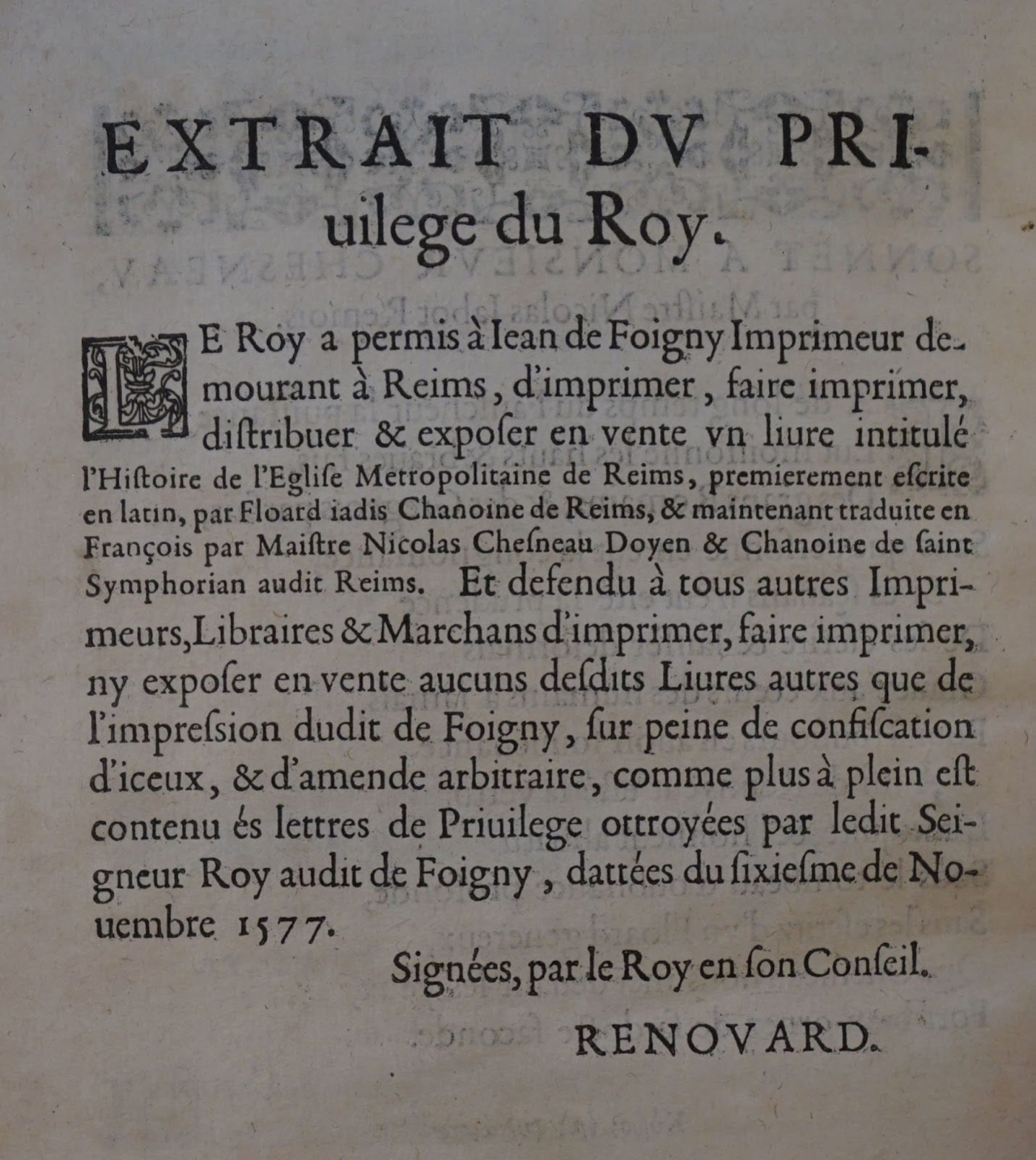

Copyright got its start in an unexpected place: royal courts. About 500 years ago, European monarchs began doling out privileges and licenses to their favorite artists. No one but the chosen creatives was allowed to publish and distribute works of art. In other words, the roles of writer/artist and publisher were often the same. These publishers’ exclusive rights to print and make copies came to be known as “copyright.”

A French royal printing permission from the 16th century granted publishing rights to a printing press in Reims.

For a time, royally appointed publishers were the only ones who could afford printing presses. They adhered to, and later enforced, censorship standards because they were held responsible for what they printed. You published heresy or sedition? Jail time or the stake for you.

Everything changed in the mid-1500s, after the rise of Gutenberg’s more affordable printing press. Suddenly, any priest, craftsman, or member of the gentry who got their hands on a press (by renting, buying, or partnering with an unlicensed publisher) could print whatever they liked. The roles of publisher and author started to diverge.

Pamphlets and flyers of the Gutenberg era were often anonymous. When writers were named, they could sometimes receive payment through informal agreements with their publishers. Censor-publishers tried to punish the creators of unlicensed work, but they could no longer keep up with the flood of printed sedition and heresy.

Public opinion about royal copyright began to shift in the 1600s, as English poet John Milton spoke out for the “liberty of unlicensed printing.” In Areopagitica (1644), now thought of as “the world’s first important essay in defense of freedom of expression,” Milton argued for freeing publishers from the tyranny of licensing and censorship.

Finally, in 1710, the Statute of Anne (often called the Copyright Act of 1710) passed in Great Britain. It was the first law to grant copyrights to authors. Ironically, it was a Hail Mary from the royal publishers, who had failed to wrest printing power back from the masses. By giving authors an avenue for formal ownership, the publishing guild hoped to curtail unauthorized, unregulated publishing.

The birth of fair use in America

Because the 1710 English law didn’t apply to the American colonies, the U.S. had a late start in copyright. At James Madison’s suggestion, the Copyright Clause was written into the U.S. Constitution:

[The Congress shall have Power…] To promote the Progress of Science and useful Arts, by securing for limited Times to Authors and Inventors the exclusive Right to their respective Writings and Discoveries.

U.S. Constitution, Article I, Section 8, Clause 8, known as the Copyright Clause.

The 18th century had no TVs, movies, music recordings, photography, software, or internet. The rapid development of new technologies and creative media since then has produced more than 200 years of legislation and litigation.

For better or worse, copyright evolved with the times. Early on, no one could reproduce a work without the consent of the copyright owner, who could charge hefty licensing fees. That stifled public access to important information, such as clips in TV news reports or educational content.

The Copyright Act of 1976 addressed that by codifying “fair use,” a doctrine U.S. courts had developed since the 19th century. Under fair use, copyrighted material can be used without the owner's permission under certain conditions. In lawsuits, courts weigh many details to decide whether a reproduction is fair use. Here are a few examples (by no means an exhaustive list):

- What entity copied the work

- The purpose of the copying (education, information, expression, etc.)

- How much of the original material was used

- Whether the reproduction takes business away from the original work

Initially, fair use was mostly reserved for nonprofits and government entities. In recent years, courts have begun to allow commercial uses when other factors are compelling.

Two cases, both featuring Google, highlighted that shift. In the mid-2000s, Perfect 10, a Playboy competitor, sued Google for indexing the magazine’s photos and displaying them as thumbnails in image search. In 2005, the Authors Guild sued Google for scanning copyrighted library books and showing snippets of them in search results; that case was decided in 2015.


Comments


really good piece Helen and lots of this needs to be explored....have been teaching and writing about AI at Lehigh University for non-tech students since 2016, was selected to present to world journalism educators conference about how to do it in 2019 https://www2.lehigh.edu/news/rise-of-the-robots-coming-to-a-first-year-intro-to-journalism-class-near-you Dan has guest lectured in a couple of my media entrepreneurship courses and if you ever want to talk about the issues we are thinking about see next comment. give me a holler. best Craig Gordon

Helen here are the issues I will be talking about with Lehigh alumni in our micro course in April:

1. Generative Artificial Intelligence will give way to Transformative Artificial Intelligence within the next 5 years.

2. Another Artificial Intelligence winter is looming because we don’t have the tools yet to create the Consciousness part of Intelligence.

3. Leaving most development of Artificial Intelligence to corporations whose main purpose is pursuit of profit leaves not enough resources to develop the UpperCase creative concepts needed.

4. The best way to participate in new development products and services of Artificial Intelligence will be to use mathematical concepts such as Algebra, Geometry and Calculus with text, images and soon videos instead of numbers.

5. Anyone wanting to become part of the Artificial Intelligence industry or discussion must first understand and know a definition of what Human Intelligence is.

Thanks for this detailed and thoughtful post. In addition to the question of copyrighted works as inputs to AI, will you be writing about how copyright law and patent law will affect the outputs of AI? This brief seems to indicate that AI outputs can be neither copyrighted nor patented:

https://www.polsinelli.com/publications/chatbots-select-legal-considerations-for-businesses

This seems like a big stumbling block for a lot of potential uses.