Creative Work Is About to Look a Lot More Like Programming

Flora's Weber Wong on why creative professionals need to stop thinking in artifacts and start thinking in systems

March 5, 2026 · Updated May 22, 2026

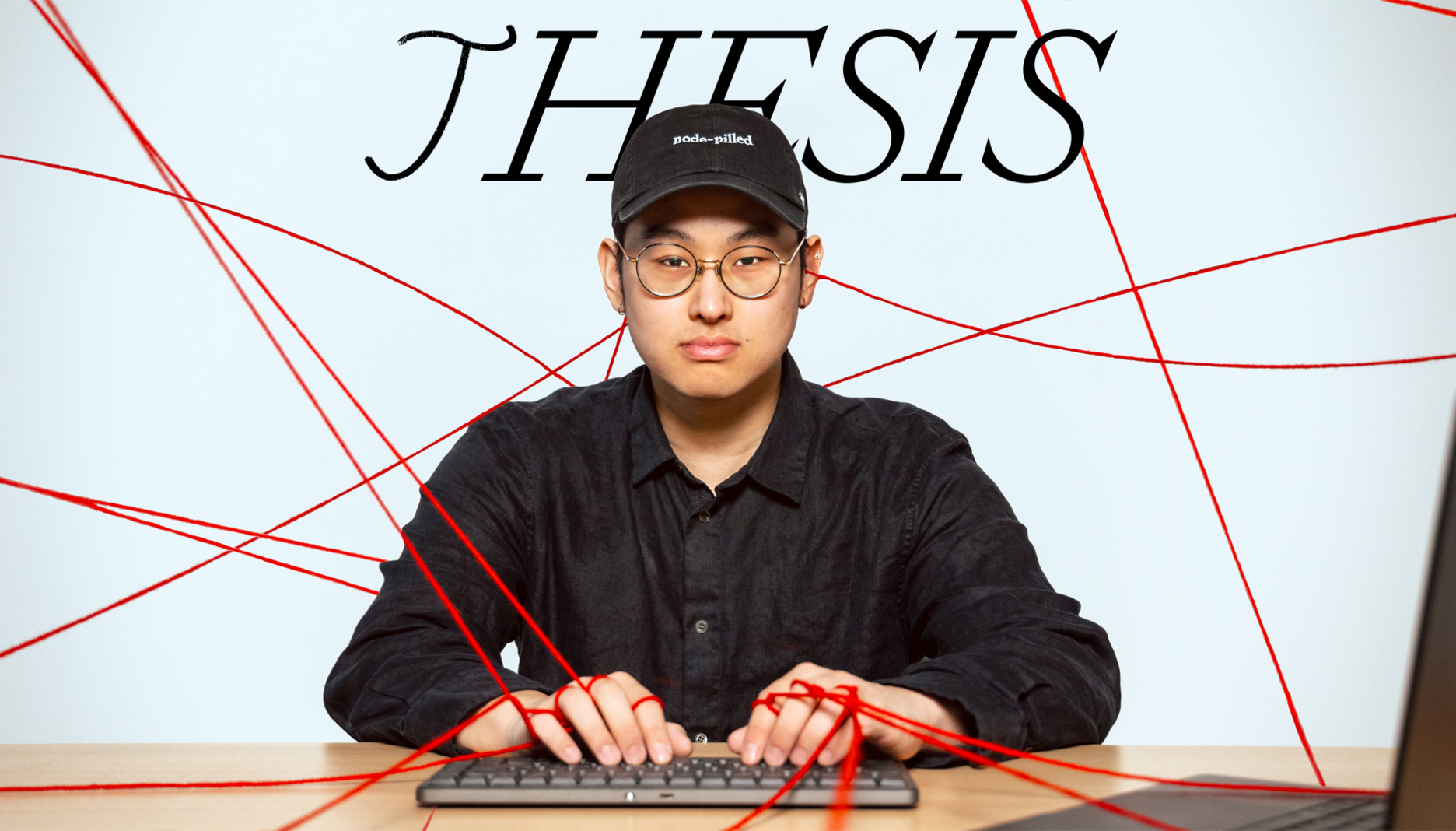

TL;DR: One of Every’s 2026 predictions was for AI to finally disrupt work for creative professionals. Weber Wong, the founder of AI design tool Flora and a former venture capitalist, thinks that moment is here, but that most are still missing where the real shift is happening. Rather than being about better prompts, creative professionals should be moving from creating one-off outputs to reusable workflows. Read on for his framework, including a side-by-side breakdown of the prompt approach versus the workflow approach, and four principles for thriving as visual programming becomes the new foundation of creative work.—Kate Lee


As an artist and designer, I’ve used every creative tool in the modern suite: Photoshop, Figma, Midjourney, Sora. Whether I was clicking through menus or typing prompts while creating interactive AI installations, I was doing the same thing in each one: producing one output at a time, with no system underneath. Every further change to an image meant moving it into yet another app. I was working like an assembly line worker, clicking the same buttons over and over in the same sequence, when I wanted to be working like an architect.

I took an unusual path to figuring this out. I went from venture capital to New York University’s Interactive Telecommunications Program, the same graduate arts program that helped launch one of my favorite AI startups, Runway. There, I spent more time operating tools than thinking about artistic ideas. Even with generative AI, which allowed me to produce a piece of media in seconds, every project still started from scratch. I couldn’t save my process, share it with a collaborator, or build on what I’d learned yesterday.

That realization led me to build Flora, a platform where creative professionals build generative workflows using all the best text, image, and video models on one infinite canvas. Since we launched in February 2025, millions of professionals from companies like Pentagram and Netflix have used it. We’ve raised $42 million to become the default AI-powered system for creative professionals. But what I’m about to share applies whether you use Flora, a competitor, or tools that don’t exist yet.

Model capabilities are advancing faster than anyone predicted. We’ve added support for Nano Banana, Veo 3.1, Sora 2, and dozens of other specialized models. In this environment, your competitive advantage as a creative person won’t be access to the best model because everyone will have that. Instead, you need to know how to orchestrate models into workflows that deliver consistent value.

Welcome to the world of visual programming.

The real problem: Visual creative work doesn’t scale

Most creative professionals I know, and many on Flora’s team, have suffered the pain of traditional creative tools. You develop a familiarity and dexterity with them: dragging a mask across an image to separate the subject from the background, tapping shortcuts without thinking, or nudging curves a pixel at a time to get the shape just right.

But tomorrow, you open a new file and start from zero. Your expertise lives in your muscle memory, and you can’t transfer those skills to someone else.

I call this mental model artifact thinking: creative work that produces discrete outputs, one at a time, each beginning from scratch. Traditional tools like Photoshop and Illustrator, which demand endless hand-tuned adjustments and manual refinements to produce a single polished image, trap you in this way of working.

Midjourney and DALL-E feel like liberation because they generate outputs so quickly, and you can communicate with them in the same language you speak every day. But visual prompts, too, are one-time, disposable things. You can’t hand them to a colleague and be confident you will get the same result. The magic of near-instantaneous generation masks the fact that you are still in artifact thinking.

Design has escaped this kind of linear mental model before. Consider what happened with user interface design. If you were designing an app a decade ago, you’d manually create every screen—the login screen, the home screen, the settings page, the error states—as a separate image. Updating a button color required changing each of these images.

Figma blew this apart by introducing components: The same button was designed once and then reused across a project, so a change to the original carried through to every screen that used it. With auto-layout, elements could reflow intelligently when content changed. The software allowed designers to stop making screens and start making systems that generated screens.
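
For readers who think in code, the component idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Figma's API: the `Component` and `Screen` classes, the button name, and the color values are all hypothetical. The point it shows is that each screen holds a reference to one shared definition rather than its own copy, so a single edit reaches every screen.

```python
from dataclasses import dataclass, field


@dataclass
class Component:
    """A shared definition, designed once (hypothetical, not Figma's API)."""
    name: str
    color: str


@dataclass
class Screen:
    """A screen that refers to shared components instead of copying them."""
    title: str
    buttons: list[Component] = field(default_factory=list)

    def render(self) -> str:
        # Read the component's current state at render time.
        parts = ", ".join(f"{b.name}[{b.color}]" for b in self.buttons)
        return f"{self.title}: {parts}"


# Design the button once (name and colors are made up for this example).
primary = Component("PrimaryButton", "#0055FF")

# Every screen reuses the same component object.
screens = [Screen(t, [primary]) for t in ("Login", "Home", "Settings", "Error")]

# One change to the definition, instead of one change per screen.
primary.color = "#FF3366"

rendered = [s.render() for s in screens]
for line in rendered:
    print(line)
```

In the artifact approach, the same color change would mean editing four separate images by hand. Here the screens are generated from a system, which is the shift the rest of this piece argues for.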
